I’m very pleased with the way this image turned out, so I’ve decided to make it available for readers at its full 1024×768 pixel size. Just click on the smaller image to open the big one in a new tab!

It’s been a while since I’ve done a straight pseudo-physics article, but as I write this I’m in the final preparations for the Zener Gate campaign*, and as a result the subject has been on my mind.

In fact, the campaign is due to have its second session next week. Nevertheless, this will all be new stuff to my players; their characters aren’t in a position to learn about the game physics yet, as they are still gathering the raw data upon which that physics will be based (and trying to survive in the meantime). Even then, none of this is necessary information for playing the campaign; this is purely backstory.

Foundations

For this campaign, I’m deliberately starting from first principles, and making only two assumptions: one, the physics is based on the real world as I understand it, complete with things that aren’t understood yet and that might or might not be ‘correct’, like string theory; and two, parallel worlds exist, and can be reached by the Zener Gate technology. Those choices need to be explained in order to set my terms of reference.

Let’s be clear: the campaign is not going to be about the physics of the game world, certainly not to the same extent that, say, my superhero campaign is. I will have no qualms about having a tech that is based on one theory in one world and having that theory be ‘disproven’ in the following session, even though the technology might still work. I see absolutely no need for the physics to be consistent in its finer details; instead, it will be driven by plot and the need to generate adventure.

That deliberate disregard for consistency immediately devalues the game physics as a structural element of the campaign, and rules out any emphasis on physics within it. Game physics will thus be a series of plot devices, pulled out when necessary.

The easiest answer would have been to simply use the same physics as my superhero campaign, because both the players and I are very familiar with it, it is highly developed and refined, and works very well. But it’s also a bit too comfortable and predictable. That game’s physics was constructed by assuming that transitions through time are subject to analogues of the physical forces and concepts that describe traveling through space, and that the combination cancels out a number of beliefs that are inconvenient when it comes to the superhero trope, like the speed of light limit.

Since starting from the same point and making the same assumptions can only lead to the same place (provided the original logic was correct), that approach is ruled out. Starting from scratch is the only real alternative.

There’s a second, equally-valid reason, too: if the game physics of the Zener Gate campaign is too similar to that which I have used before, it could lead to confusion between the two, if and when small but important differences in assumptions arise. So I’m looking for something that’s reasonably robust but still fairly flexible and definitely a bit more superficial – small enough to fit into a single blog post, when all is said and done.

Sidebar: Session Limits & Implications

I took half an hour, a week or so ago, and came up with no fewer than 20 adventures. Some may take 2-3 game sessions (of 3-4 hours of play each) to complete, most will take a single game session, and some will take less, sometimes much less. Since the goal is a zero-prep campaign beyond the basic idea and improvising from it – with perhaps a few minutes to generate names – the variable length doesn’t bother me.

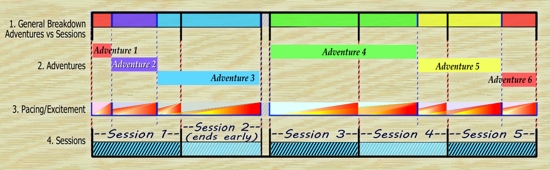

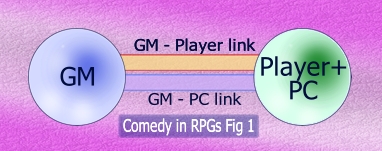

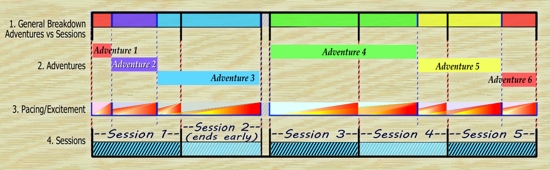

But it does have an impact on the game in a practical sense, particularly when the principles of running a good campaign are applied. The diagram below illustrates some of the complexities.

The top row divides time into game sessions and shows adventures within those sessions by color. The second row removes the sessions and splits the adventures onto different lines. Note that I already know that adventure #1 won’t last more than an hour or two (actually, I stretched it to about 2½ hours) and that adventure #2 won’t be enough to fill a whole session of play unless it finishes early. The third row builds in everything I’ve written about adventure pacing, showing that pacing is a function of both session length and adventure length. Finally, row 4 is all about the sessions, and shows that within this structure, session length is determined by adventure length and not vice versa: session two is shown as ending 15-20 minutes early because there isn’t enough time to get adventure #4 off to a decent start before the session clock runs out. A session ends when the adventure reaches either (1) a conclusion or (2) a cliffhanger point, and there isn’t enough time left to reach the next adventure conclusion or mid-adventure cliffhanger.
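The session-ending rule is mechanical enough to sketch in a few lines of Python. This is a minimal illustration under stated assumptions, not a tool I actually use: each adventure is reduced to a hypothetical list of play segments (in minutes) separated by stopping points, every stopping point except the last is a cliffhanger and the last is the conclusion, and the session length is fixed. The names `Adventure` and `plan_sessions` are made up for the example.

```python
from dataclasses import dataclass


@dataclass
class Adventure:
    name: str
    # Minutes of play between stopping points: every stopping point but the
    # last is a cliffhanger; the last is the adventure's conclusion.
    segments: list[int]


def plan_sessions(adventures, session_minutes):
    """Greedily pack adventure segments into sessions.

    A session ends at the current stopping point (cliffhanger or conclusion)
    whenever the next segment would not fit into the time remaining.
    """
    sessions, current, used = [], [], 0
    for adventure in adventures:
        for index, minutes in enumerate(adventure.segments):
            if current and used + minutes > session_minutes:
                sessions.append(current)  # stop here: no time to reach the next stop
                current, used = [], 0
            current.append((adventure.name, index, minutes))
            used += minutes
    if current:
        sessions.append(current)
    return sessions


# Hypothetical segment lengths, loosely in the spirit of the diagram.
adventures = [
    Adventure("#1", [150]),
    Adventure("#2", [60]),
    Adventure("#3", [45, 60, 45]),
    Adventure("#4", [90, 90]),
]
sessions = plan_sessions(adventures, session_minutes=240)
for number, session in enumerate(sessions, start=1):
    played = sum(minutes for _, _, minutes in session)
    stops = ", ".join(f"{name}.{index}" for name, index, _ in session)
    print(f"Session {number}: {played} min ({stops})")
```

With these made-up numbers the sketch produces sessions of 210, 240 and 90 minutes: session one stops half an hour short of the four-hour mark because the first part of adventure #3 won't fit. A segment longer than a whole session simply gets a session of its own here.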

This model shows that the campaign is neither strictly serial nor episodic in nature, but a complex hybrid. It mandates that the last hour or so of play must either be headed for a conclusion that can be reached in time, or build to a cliffhanger finish for the day.

Most of my campaigns are far more strongly serial in nature, and contain mechanisms in the form of subplots that permit the day’s play to be padded out or cut short as necessary so that adventure conclusions almost always end the day’s play.

The main point of relevance here is that there isn’t a lot of time for long-winded discussions of game physics in most sessions. In fact, the only adventures shown that might be suitable are adventures 3 (midway through, about an hour into session 2) and 4 (early in session 3).

Parallel Worlds

All of the adventures that I came up with in that 30-minute creative blast fit this model, but none of them actually come out and announce “we’re in a parallel world”. They are sufficiently varied in temporal setting that it won’t be clear whether or not the universe is a single timeline (which may be more historically complex than the history books acknowledge), or if the PCs are bouncing around between parallel worlds.

That’s deliberate on my part. But there is one very good reason for being up-front here, even though the characters in the game won’t have any idea of the situation in this respect: it provides a facility for new players/new characters to enter the game. It’s already known by the players that they will start the game with 2 PCs and 1 NPC, and that the NPC-leader will be (was!) taken away from them fairly quickly, leaving the two PCs to manage their own lives. The game mechanics don’t permit them to pick up additional team members from the local time-frame, but there will be a way for them to pick up an additional PC if that person is already a Zener Gate traveler. Since all such travelers that come from the PCs’ timeline are accounted for, that only leaves someone coming from somewhere else – and that means a parallel world.

Adventure policy will still be to keep the question open as often as possible and as much as possible. At every given point of intervention, there has to be the doubt and insecurity that this could be their own history that they are messing with. And sometimes, it will be!

Events

My view of the structure of time for this campaign revolves around events. An Event is defined as “an outcome that can be changed”.

Events come in three flavors: Statistical, Probabilistic, and Human.

Statistical Events

Statistical events include things like radioactive decay and pair production at any given moment and place and other such quantum-scale phenomena.

Most of the time, these make no macroscopic difference whatsoever. In a radioactive substance, a given number of atoms will undergo subatomic changes over any given time-span. Whether one particular atom does or doesn’t is not particularly relevant.

And yet, they can compound and cascade and chain-react and domino-drop, to the point at which timeline A at a given instant is measurably different from timeline B – dividing a radioactive sample in half and finding that one sample contains more decay products than the other, for example, indicating that more of the atoms in the first have decayed than in the second.

It’s only when looking at statistically-rare events at a subatomic level that these things matter, and there aren’t many of those. Nevertheless, the instant any such difference, anywhere in history, becomes measurable, the timelines have demonstrably diverged, and what was a timeline indistinguishable from “base reality” has become one that is now distinguishable from that reality – if you know where to look.

Chaotic Phenomena

Some physical phenomena are so sensitive to changes in input conditions that they amplify the consequences of any such change to the macro level, i.e. the human scale or bigger. The most famous example is the weather, but even these systems are relatively resistant to quantum-level deviations; a single butterfly flapping its wings somewhere might be enough to change the weather three days later in a completely different part of the world, but the changes that it introduces are far more substantial than quantum-level changes.

This demonstrates a dividing line: any event too small to trigger macroscopic differences in the outcomes of chaotic phenomena is a “Statistical” event; any event larger than that is either “Human” or “Probabilistic” in nature.

Statistical Noise

The net effect is that statistical events create “noise” when plotting those events over time. This can easily be enough to push an event over a threshold, triggering a chaotic change, but only when there was already an underlying trend towards that change event.

Consider a pot of water heating up on a stove. Heat is transferred from the source to the water via the metal of the pot. This heat increases the molecular movement of the water, raising its temperature. Molecules bounce into each other more and more energetically. When that happens, the transfer of kinetic energy is not easily predictable; the total will be the same afterwards, but its distribution is more complex. Sometimes it will be split very evenly, but more frequently one of the two molecules will exit the interaction with more energy than it had before, while the other has less. But the less energy a given molecule has, the more of a ‘sitting duck’ it is for a subsequent interaction, so its energy state will not stay low for very long. You therefore have two mechanisms, one evening out the energy distribution and the other concentrating it.
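The collision picture can be illustrated with a well-known toy model of random energy exchange. This is a sketch under loud assumptions, not real molecular dynamics: every hypothetical molecule starts with one unit of energy, and in each "collision" two randomly chosen molecules pool their energy and split it at a uniformly random ratio. Total energy is conserved, yet the spread grows: a few molecules end up with several times the average while others are nearly drained, and drained ones get topped up again by later collisions.

```python
import random
import statistics


def exchange_energy(molecules=1000, collisions=50_000, seed=3):
    """Toy model: random pairwise collisions that conserve total energy."""
    rng = random.Random(seed)
    energy = [1.0] * molecules  # every molecule starts with the same energy
    for _ in range(collisions):
        i, j = rng.sample(range(molecules), 2)  # two distinct molecules collide
        pooled = energy[i] + energy[j]
        share = rng.random()  # uneven split, decided at random
        energy[i], energy[j] = pooled * share, pooled * (1.0 - share)
    return energy


energy = exchange_energy()
print(f"total {sum(energy):.1f}, mean {statistics.mean(energy):.2f}")
print(f"lowest {min(energy):.3f}, highest {max(energy):.2f}")
```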

It’s at the top of the column of water within the pot that really interesting things are happening. Deep in the column, a highly energetic water molecule will almost certainly hit another one and the energy will be dispersed again. At the top of the water, it can actually break free of the surface tension holding the water together and fly off, taking the energy with it – if not stopped by a lid of some kind. That’s why water boils much faster when you put a lid on the saucepan. Eventually, energy is being released from the water at the same rate as it is being added, and the water can no longer increase in temperature; adding more energy simply increases the release, without increasing the overall temperature of the water (unless you enclose it to put it under pressure, which is an entirely different situation).

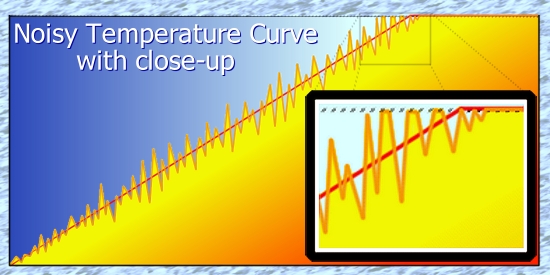

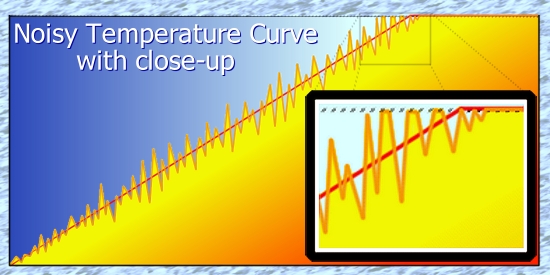

If you were to graph the temperature of the water over time, you would pretty much get a straight line until boiling point is reached, and a flat line beyond that. But if you were to graph the temperature at the very top of the water, you would find that it was a very noisy line with a trend, or mean, that matched the overall temperature line.

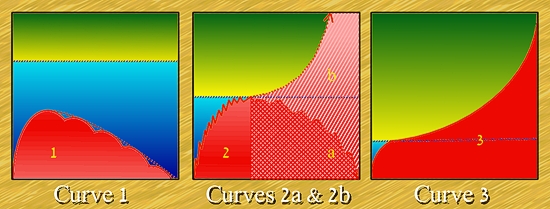

You still can’t exceed the boiling point, though, so the tops of the spikes would get cut off; such a graph would show a noisy line whose peaks are clipped flat at boiling point.

This is one example of a change at the “statistical” level producing a quantifiable change at the macro scale, and it happens because the statistical “noise” adds to the trend, sometimes decreasing it (when an especially energetic water molecule carries its energy away) and sometimes increasing it, momentarily bringing a small spot on the surface to boiling point and producing steam.
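The clipped, noisy curve is easy to generate. A minimal sketch, assuming a linear heating trend of 8 °C per minute from 20 °C, uniform "noise" of ±3 °C on the surface reading, and a hard ceiling at 100 °C; all of these numbers are illustrative, not measurements.

```python
import random

BOILING_POINT = 100.0  # °C at sea-level pressure


def surface_readings(minutes=20, start=20.0, rate=8.0, noise=3.0, seed=1):
    """Noisy surface temperature: linear trend + uniform noise, clipped at boiling."""
    rng = random.Random(seed)
    readings = []
    for t in range(minutes):
        trend = min(start + rate * t, BOILING_POINT)  # bulk water stops rising at the boil
        reading = trend + rng.uniform(-noise, noise)  # statistical "noise" at the surface
        readings.append(min(reading, BOILING_POINT))  # spikes above boiling are cut off
    return readings


for minute, temp in enumerate(surface_readings()):
    print(f"{minute:2d} min  {temp:6.2f} °C")
```

Plotted, the first ten minutes wobble around a rising line, and from then on the readings sit just under 100 °C with their upper spikes sheared off.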

The same pot of water offers a second example, as well: the bottom of the pot is not going to be perfectly smooth. There will be pits, and those pits can result in a small sub-population of the water molecules receiving heat from multiple directions, not just from beneath. The result is that those water molecules turn to steam before the heat can be distributed through the water, and the resulting bubble grows until it overcomes the surface tension holding it in place, at which point it rises to the surface and bursts open, releasing its contents. These imperfections can be microscopic in size, but they have a much larger and more visible effect.

Parallel World application

Whenever you have an outcome that can produce a measurable difference, you are creating an alternate timeline. In most cases, that difference will be unnoticeable, and the world indistinguishable from the base timeline; but when such variations in quantum “noise” are taken into consideration, it becomes apparent that there are real-world phenomena that would distinguish one world from another if you looked in exactly the right place. In timeline A, a bubble was just reaching the top of its travel through the not-yet-boiling water while in timeline B, it was still a fraction of an inch short of doing so – or had done so a fraction of a second sooner.

Because this noise is completely random in its occurrence, if you aggregate the results of many different parallel worlds, you end up with a flat probability curve – any given result is equally likely, provided only that there is no underlying trend. Statistically, the noise evens out, leaving only those underlying trends. If you start heating pots of water of the same size at the same physical location on multiple worlds, they will all come to the boil pretty close to simultaneously.
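The claim that many parallel pots come to the boil at nearly the same moment can be checked with a small simulation. A sketch under stated assumptions: every "world" shares the same linear heating trend (20 °C to 100 °C over 600 seconds) but draws its own flat, uniform surface noise of ±3 °C each second, and a world counts as boiling at its first surface reading at or above 100 °C. The function name and parameters are hypothetical.

```python
import random
import statistics


def boil_time(seed, start=20.0, rate=80 / 600, noise=3.0, boiling=100.0):
    """Seconds until the first surface reading reaches boiling point in one world."""
    rng = random.Random(seed)  # each seed stands in for one parallel world
    t = 0
    while True:
        trend = min(start + rate * t, boiling)  # shared underlying trend
        if trend + rng.uniform(-noise, noise) >= boiling:  # world-specific flat noise
            return t
        t += 1


times = [boil_time(seed) for seed in range(1000)]
print(f"earliest {min(times)} s, latest {max(times)} s, mean {statistics.mean(times):.1f} s")
```

In this model the noise can only matter once the trend is within 3 °C of boiling, so it decides the last twenty-odd seconds of a ten-minute process; that is the sense in which it "evens out".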

With untold millions upon millions upon millions of such events in which random “noise” pushes some condition over a threshold – or not – at any given instant, there must be an equal number of parallel worlds that are indistinguishable from the base reality of comparison unless you happen to examine exactly the right place at the instant of divergence between the two timelines, or the measurable event triggers another difference, and then another, and then another, in a chain reaction, or a cascade.

It follows that there is going to be a slow drifting apart of such worlds in terms of their uniformity. It will be glacially slow, even non-existent to all intents and purposes, until suddenly a cascade takes place in a chain reaction – and then, quite suddenly, they are no longer exactly the same.

Even then, the change might not be noticeable. Let’s say that in timeline A, a star ignites in the 3,975,675,253rd most-distant galaxy from the Milky Way on a given date – the trend is for that to happen, but it got pushed over the edge by a statistically random event; in timeline B, it took three or four more days to occur. Who in the Milky Way would notice this change? Hear that hollow echo…

Even cascades are limited in effect by the square of their distance from the point of observation, and by the time required for the cascade’s effects to become measurable at that point. The change described in this example is so far away that we would have to wait billions of years to have even a vanishingly small chance of seeing it.

If a new star happened to ignite in the Pleiades, a known stellar nursery within the Milky Way (some 410 light-years away), it would almost certainly be noticed within a day or two of that 410-year interval. Astronomers watch it regularly for the very reason that there’s a chance of a new star appearing there just about anytime, and the process itself is of acute interest.

So significance, diluted by the square of the distance, yields the probability of a noticeable difference. Adding time into the equation means that however low that probability, it will eventually amount to a significant difference. The oldest, most divergent timelines would have very different stars and planets in the sky. You could even measure that statistically to “index” the degree of drift away from the base timeline.
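The idea that distance dilutes a probability while time accumulates it can be sketched as a series of independent yearly trials. This is a hypothetical toy model in arbitrary units, not a formula from the physics: the yearly chance of noticing is taken as `significance / distance**2` (capped at 1), so the chance of having noticed within `years` years is `1 - (1 - p)**years`.

```python
def chance_noticed(significance, distance, years):
    """Cumulative chance that a divergence has been noticed after `years`."""
    yearly = min(1.0, significance / distance ** 2)  # inverse-square dilution
    return 1.0 - (1.0 - yearly) ** years  # independent yearly trials


for distance in (10, 100, 1000):
    row = [chance_noticed(1.0, distance, years) for years in (1, 1_000, 10_000_000)]
    print(distance, ["%.4f" % p for p in row])
```

However small the yearly chance, the cumulative figure creeps toward 1; distance only changes how long that takes.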

Probabilistic Events

It was once believed that the planets were fixed and predictable in their orbits. In the late 20th century, that belief was demolished as an illusion, except over a relatively short term.

The problem is that every mass in the universe affects the motion of every other mass, though this influence weakens with the square of distance. Nevertheless, the effect is real enough that observed discrepancies in the orbit of Uranus allowed astronomers to predict the location of Neptune before anyone had seen it. A later search for a further planet, prompted by similar discrepancies, turned up Pluto – although Pluto, since so controversially downgraded to a dwarf planet, turned out to be far too small to have caused them.

With every body that you add into consideration, orbital calculations become exponentially more complicated. Even a small change in position of a mass that is rendered immeasurably insignificant by distance accumulates over time until it isn’t insignificant any more. If we had precise measurements of the masses, positions, and motions of every body in the solar system, all the way out to the Oort Cloud, and effectively infinite time to make the calculations, then we could accurately predict orbits forever. Take away any one of those requirements, however, and you start introducing errors into the equations – small ones, but they will add up.

Now, some of those errors will cancel out others, while some will not. But the end result is the same: it might take 1000 years, or ten thousand, or 100 thousand, but sooner or later the accumulated errors will overwhelm any reasonable certainty of where any given planet will be in the sky.

Of course, the bigger the object, the more difficult it is to perturb its predictability; momentum tends to keep it on track. So Jupiter almost certainly has the most predictable planetary orbit in the solar system. It perturbs others far more than it is perturbed.

Some celestial objects, like asteroids and comets, are small enough and come close enough to these unknowns that their predictability windows are a lot smaller. Last year, an asteroid came close enough to the Earth that for a while there were some very sweaty astronomers’ palms – this was potentially a city-killer, and could have impacted anywhere, if it impacted at all. It flew between the Earth and the Moon and – in this case – kept right on going. Its orbit was such that it’s not something we have to worry about for 200 to 2000 years, depending on which astronomer was being quoted at the time. (Most worryingly, it was only 3 days away when discovered, and there have been others spotted only after they have passed us!)

But it’s always possible that at some future point, something we haven’t spotted before will be in the right place at the right time to perturb that orbit, just a little. How much is significant?

Well, the moon is about 384,000 km away. It was closer than that, so let’s say it missed by about 200,000km. The Earth is about 149.596 million km from the sun, so the diameter of it’s orbit must be roughly twice that – 300,000,000km. So to get that rock to shift it’s course by 200,000km requires a net perturbation of about 200,000 x 100 / 300,000,000 = 2 / 30 % = about 0.067%. That’s not very much, so people will certainly be keeping an eye out as best they can.

And, of course, you can divide that perturbation by the length of time that it has to become a factor – so if it were to happen right now, and would result in a direct threat in 199 years, 0.000335% would do it.
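The back-of-the-envelope numbers above can be reproduced directly. This sketch simply follows the article's own rule of thumb (the miss distance as a percentage of the rounded diameter of Earth's orbit, then divided by the years available); it is not an orbital-mechanics calculation.

```python
EARTH_SUN_KM = 149.596e6  # mean Earth-Sun distance
ORBIT_DIAMETER_KM = round(2 * EARTH_SUN_KM, -8)  # 299.192 million km, rounded to 300 million
MISS_DISTANCE_KM = 200_000  # the assumed miss distance from the text


def perturbation_pct(shift_km, scale_km=ORBIT_DIAMETER_KM):
    """Shift expressed as a percentage of the orbital scale (the article's rule of thumb)."""
    return shift_km * 100 / scale_km


total = perturbation_pct(MISS_DISTANCE_KM)
needed_now = total / 199  # divided by the 199 years before the hypothetical threat
print(f"{ORBIT_DIAMETER_KM:,.0f} km")  # 300,000,000 km
print(f"{total:.3f}%")  # 0.067%
print(f"{needed_now:.6f}%")  # 0.000335%
```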

Orbital mechanics being what they are, there will be times when the perturbation needed will be a heck of a lot bigger – when it’s headed away from the earth, as it is now, for example. To have it effectively turn around and come back to strike us next year would require such a tremendous change that it’s simply not going to happen – and if it did, it would be the least of our problems. In fact, it’s still not going to happen, because anything massive enough to have that sort of effect would already be perturbing other orbits, like those of the GPS satellites, by more than enough to ring alarm bells.

The calculated values are the sort of perturbations needed to alter the trajectory enough that in however many orbital periods, it will be in a dangerous state. If it has a 50-year orbit, the soonest that it can pose a threat is in 50 years, give or take a small margin of error.

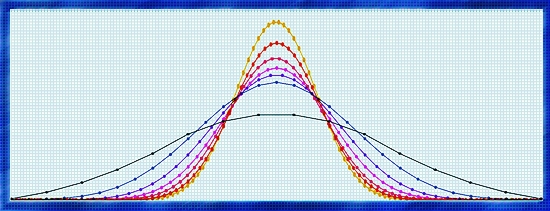

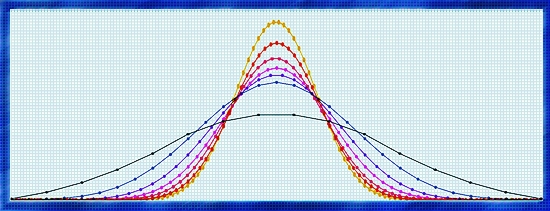

It follows that orbital predictions take a shape that would be familiar to almost any roleplayer: a bell-shaped curve, with the peak probability being exactly where the object is currently predicted to be. The more time you allow, the more likely it is that some external influence will pull reality to one side of the curve or the other – but the greatest likelihood is that it won’t be enough to make a huge difference except over a very long period of time.

If you start with an almost-flat probability curve and keep adding it to itself, time and time again (just as the total of more and more dice does), it becomes steeper and steeper, meaning that a small shift to one side or another is enough to drop you from a likely event to an unlikely event very suddenly.

Given enough time, almost anything becomes possible – but, in the short term, the outcome is VERY predictable. And, of course, measurements are continually being checked and updated, especially for delicate and dangerous things like the orbits of earth-grazing asteroids.
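Adding a flat curve to itself is exactly what happens when you roll more dice and total them, so the steepening can be sketched with exact dice arithmetic. A minimal sketch, assuming fair six-sided dice: the distribution of the total is built by repeated discrete convolution, and `central_share` (a hypothetical helper) measures how much probability lands in the middle fifth of the possible range.

```python
from collections import Counter


def sum_distribution(n_dice, sides=6):
    """Exact distribution of the total of n fair dice via repeated convolution."""
    dist = Counter({0: 1.0})
    for _ in range(n_dice):
        nxt = Counter()
        for total, p in dist.items():
            for face in range(1, sides + 1):
                nxt[total + face] += p / sides  # add one more flat distribution
        dist = nxt
    return dist


def central_share(n_dice, sides=6, fraction=0.2):
    """Probability that the total lands in the middle `fraction` of its possible range."""
    dist = sum_distribution(n_dice, sides)
    low, high = n_dice, n_dice * sides
    middle, half_width = (low + high) / 2, fraction * (high - low) / 2
    return sum(p for total, p in dist.items() if abs(total - middle) <= half_width)


for n in (1, 2, 5, 10):
    print(f"{n:2d} dice: {central_share(n):.1%} of outcomes in the middle fifth of the range")
```

One die puts a third of its outcomes in the middle fifth and two dice put 16/36 there; the share keeps climbing as dice are added, which is the curve getting steeper relative to its range.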

Weather

It’s the same deal – though on a far smaller scale – with the weather. With modern forecasts, a meteorologist is almost certain to be pretty close to exactly right about tomorrow, is probably pretty right about the day after, is likely to have the right general prediction for the day after that, might be generally correct about four days from now – but check again in a day or so – and has some vague idea of what things might possibly be like five-to-seven days from now, but two times in three that forecast will change between now and then, except in the most general terms – and sometimes, even then.

So weather is a LOT more chaotic than asteroid orbits, but still subject to reliable prediction – in the short term. Predictability has also improved markedly over the last 50 years or so; when I was a child, there was a 50-50 chance that tomorrow’s forecast would only be correct in a general sort of way, and the day after that? Forget it.

And yet, in more general terms, while the weather on any specific day might not be predictable, the seasonal weather patterns overall are sufficiently consistent to be given a whole different name – climate. That doesn’t mean that you can predict even the January average temperature and rainfall for a specific location with complete accuracy – but you can state that they will probably fall in a certain range, that there will probably be X number of rainy days, and so on. Barring any long-term changes, the more time you spend aggregating results, the closer to reliability you will get.

That’s why these phenomena are labeled “Probabilistic” – within a certain margin of error, they can be predicted, with that margin of error growing the further ahead of the present the prediction reaches.

These sources of error – be they rogue planetoids or rampant butterflies – can only be combated by taking fresh measurements and updating forecasts regularly.

Parallel Worlds Impact

When one timeline separates from another, the more sensitive a prediction system is to such errors, the more quickly that timeline will diverge from its baseline. Using modern weather forecasts as a guide, for example, tomorrow will probably bring almost-identical weather in both timelines. The day after, most places will have the same weather, but a few locations may be slightly different. Three days after separation, the weather will be noticeably different in perhaps 1 in 3 locations; four days after, in 1/2 of them; five days after, in 2/3; and after six days, all bets are off.

And yet, if you were to aggregate and average the results of many different parallel worlds, you would still end up with the same climatic results, and the more parallel worlds that you consulted, the closer to that result you will come. The climate is a far more stable value than the weather.
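The contrast between diverging weather and stable climate can be illustrated with the logistic map, a standard textbook example of chaos. To be clear, this is a toy stand-in, not a weather model: each hypothetical "world" starts from the same value nudged by a trillionth per world, the fully chaotic map x → 4x(1 - x) plays the role of day-to-day weather, and a world counts as noticeably different once it has differed from the base world by more than 0.1. Individual worlds soon disagree completely, yet the average across all of them stays close to 0.5, the long-run mean of this map.

```python
import statistics


def logistic(x):
    # Fully chaotic logistic map (r = 4): a classic toy model, not a weather model.
    return 4.0 * x * (1.0 - x)


def simulate(worlds=1000, steps=150, start=0.3, nudge=1e-12, threshold=0.1):
    """Return (fraction of worlds that have diverged from world 0 by each step,
    mean value across all worlds at the final step)."""
    xs = [start + k * nudge for k in range(worlds)]  # world 0 is the base timeline
    ever_diverged = [False] * worlds
    fraction = []
    for _ in range(steps):
        xs = [logistic(x) for x in xs]
        for k in range(1, worlds):
            if abs(xs[k] - xs[0]) > threshold:
                ever_diverged[k] = True  # "weather" now noticeably different
        fraction.append(sum(ever_diverged) / (worlds - 1))
    return fraction, statistics.mean(xs)


fraction, climate = simulate()
for step in (10, 30, 40, 50, 150):
    print(f"step {step:3d}: {fraction[step - 1]:.0%} of worlds diverged")
print(f"mean across worlds at the end: {climate:.2f}")
```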

Again, if you were to plot those results, you would get a bell-curve – with the peak of probability being the climate-derived value. Any given result from any given world might be anywhere on the curve – but viewed as a statistical aggregate, there is predictability.

Two sides of the same coin?

It’s no accident that a Cascade of random events yields an outcome so similar to a Probabilistic event – because they are, in effect, one and the same thing. The probability of a Cascade occurring at any given instant, plus time for the effects to become noticeable, can be calculated and plotted, and will yield a — yes, a bell curve. That’s because of the underlying trend, just like the heating of the water. Logically, it means that if you broke your measurements into sufficiently small intervals of time, the water would boil at different instants on the different parallel worlds – but the error is swamped by the typical scale of measurement.

Which is why I thought the “noisy temperature curve” significant enough to include in this article!

Human Events

All this gives a framework for the understanding of events of the third variety – Human events. Human actions, human decisions, human moments of inspiration, humans yielding to emotional frailties – these can all be assigned a significance, they can all cascade to produce measurably different outcomes, but the probability of significant divergence is relative to both the significance and the observability of the event.

In timeline A, someone in some small, out-of-the-way place in, say, Oklahoma forgets their car keys until they get into the vehicle, and has to go back to get them before leaving for work. The likelihood that this small change will ever be noticeable in another small town in, say, Scotland, is vanishingly small. Only if there is a domino effect, and sufficient time for the consequences to propagate, would such a random event produce a substantial impact.

Some events are more critical, because they significantly affect a greater number of people, or have a persistent effect. Most of these involve underlying trends, however – it’s rare for the unpredictable left-field event to affect sufficient numbers.

That means that even a significant event is not likely to change significantly from one timeline to another. There would be a resilience to change in most cases, but how that resistance works is not yet obvious.

Significance & Criticality

Take, for example, a decision that would ultimately affect almost 1/3 of the US workforce – JFK’s decision to go to the moon. Does it really matter if he made that decision in one particular moment or ten minutes later? Not at all. It’s a significant event, but not an especially critical one; he has until he writes the speech in which he announces the decision to make up his mind.

Significant events tend to be coupled with entirely separate Critical Points, and a change needs to persist across that entire temporal range before it can cascade.

Even if he started writing a quite different speech and then changed his mind, it probably wouldn’t have a critical effect. But the polish on the speech would eventually suffer, and that might (in turn) alter the level of commitment that he is able to evoke. Or not; once announced, it wasn’t all that long before “Space Fever” gripped a huge share of the population. Congress might have started out unconvinced only to get caught up in the hoopla and get on the bandwagon a little later. The net result might be that Armstrong gets to the moon a month later than he did. And, while that’s a measurable difference, you still have to look in the right place and time for it; here in 2017, it wouldn’t have all that much impact.

It can be argued that a more critical point followed the Apollo 1 fire, when there were real fears of the program being shut down or scaled back. Because of the many technological improvements that derived from the space race, some of which – but not all – had been completed at that point, it is entirely possible that this would domino into a far more significant change to history, delaying weather satellite technology by ten years, for example – or bringing it forward by three to five. Accurate weather forecasting saves lives every year, even without considering natural disasters like hurricanes. The accumulated impacts of the changes to those lives would almost certainly Cascade.

Would they impact the natural social trends? Who knows? They might, but they probably would not. Impacts are first contained geographically and then dispersed, losing significance with every step.

Only if a Cascading Event were to impact the life of a Significant Individual in a critical way – FDR’s decision to run for an unprecedented third term, for example – would there be the potential for subsequent history to be irrevocably changed.

Criticality Threshold

This implies that all Critical events have a Criticality Susceptibility Threshold that must be reached or exceeded by the Significance of a change before that change can cause a widespread difference in outcome, and that the scale of the consequences is related in part to that threshold.

Should a change in an event not have the required Significance to achieve this Threshold, it will have minimal non-local impact and will dampen out over time. Should the change in an event exceed the required threshold, it begins a chain reaction that produces measurable changes – at least for a while.

Consider: how much difference would the substitution of one Roman Viceroy for another have made to modern-day London? Regardless of the immense significance that this change might have held in Imperial Roman times, from the moment the Roman Empire begins to fall, the Significance of that event also begins to wane. It’s possible that, before the change has had time to vanish completely from relevance, one of its consequences might trigger a new Cascade; the more Significant the change, the longer it will remain a consideration, and the more opportunities it will therefore have to do so. But there is no certainty that it will occur.

In theory, it is also possible for an event to have exactly enough Significance to neither exceed the Criticality Threshold nor fade away. But, in practice, this is another “noisy curve” – sooner or later, random chance will tip such a delicate balance one way or the other. In fact, you can define the Threshold as the exact amount of Significance at which the resistance to change is balanced, so that random chance alone determines the outcome.

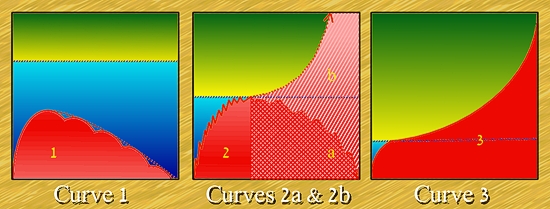

I have done rough diagrams of the three possible Significance outcomes relative to the Criticality Threshold.

These three curves show the same event with different Criticality Thresholds. Noise has been ignored in (1) and (3) because it only matters in the case of (2). The first curve shows an Event whose Significance never achieves the Threshold of Criticality; although it generates local domino effects for a while, the Significance diminishes over time. The second curve is shown in two forms, (2a) and (2b). In (2a), noise diminishes the Significance just as it reaches the Threshold of Criticality, leading to a diminishing pattern similar to curve (1). In (2b), noise pushes the Significance momentarily above the Threshold of Criticality; from then on, any noise is rapidly overwhelmed by the Cascade of events, which escalates quickly. This is similar to curve (3), where the initial event carried enough Significance to achieve the relatively low Threshold shown. Once again, a pattern of escalation ensues.
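The three curves can be mimicked with a deliberately crude model. Everything here is an assumption made for illustration: below the Criticality Threshold, Significance dampens by 15% per step; at or above it, a Cascade multiplies it by 1.4 per step; and optional uniform noise is added before each check. The function `significance_curve` is hypothetical.

```python
import random


def significance_curve(initial, threshold, steps=30, decay=0.85, growth=1.4,
                       noise=0.0, seed=0):
    """Toy Significance trajectory relative to a Criticality Threshold."""
    rng = random.Random(seed)
    s = initial
    history = [s]
    for _ in range(steps):
        s += rng.uniform(-noise, noise)  # statistical noise (zero if noise == 0)
        s *= growth if s >= threshold else decay  # Cascade above, dampening below
        s = max(s, 0.0)  # Significance cannot go negative
        history.append(s)
    return history


curve_1 = significance_curve(initial=5, threshold=10)  # never reaches the threshold
curve_3 = significance_curve(initial=5, threshold=3)  # low threshold: escalates
# Curve 2: starting exactly at the threshold, the first bit of noise decides.
runs = 50
curve_2 = [significance_curve(initial=10, threshold=10, noise=0.5, seed=s) for s in range(runs)]
escalated = sum(c[-1] > 10 for c in curve_2)
print(f"curve 1 ends at {curve_1[-1]:.3f}, curve 3 at {curve_3[-1]:,.0f}")
print(f"curve 2: {escalated} of {runs} noisy runs escalated (2b), {runs - escalated} faded (2a)")
```

With these parameters, once a run falls below the threshold the dampening keeps it there, and once it clears the threshold the growth outpaces the noise, so the very first random nudge separates (2a) from (2b).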

Another way to look at these results is that two timelines look the same – until, quite suddenly and seemingly out of the blue, they don't.

It should also be noted that there is no indication of scale on these diagrams. They are just as valid in describing changes rippling out beyond the confines of an immediate family as they are in describing consequences escaping the confines of a small town or local region to impact a state, or a major city to impact a nation, or a single nation to affect a significant portion of the world. The smallest change in the wrong place at the wrong time ("For lack of a nail…") can be devastating; the most massive change in a safe place and time will barely be noticed. Ancient Pompeii might have been visited by Aliens on the morning of Vesuvius' eruption, and it would not have changed history one iota.

Technological Impact

Technology might seem, at first glance, to embody one of those underlying trends toward change, and to some extent, it does, but to an even greater extent, it does not. If you were to carry the designs of a modern laptop computer back to 1850, they would make no sense to anyone, and even if people could reason out their meaning (with a little guidance), the infrastructure, materials, and manufacturing capacity don't exist to even make the tools needed to replicate it.

When it's time to railroad, all sorts of people will invent it; THAT is an underlying trend. It means that the "state of the art" has reached the standard needed for that technological development, and random inspiration will show any number of people with the right educational qualifications how to go about it.

The Consequences Of Brilliance

"Ah, but some men are brilliant thinkers, able to absorb, understand, and utilize abstract concepts far beyond the current capabilities," replies a would-be changer of history. Sadly, it is not so. There have been many occasions in the past where an idea was born too soon; the maverick genius responsible usually lost his shirt. The people who developed and controlled the necessary forebears of the required technology squashed the potential competition, ridiculed the inventor, saw no future in the idea, or worse, saw a future inimical to their interests and ruthlessly suppressed it. When it's not time to railroad, even giving someone the gift of a working steam engine will not produce a locomotive and tracks.

Space-time Travelers

But those topics, by virtue of the examples offered, provide a natural segue into questions of time travel, and the subjects of Consequence and Paradox.

When someone arrives via Zener Gate, their arrival is accompanied by a release of energy, mostly in the form of radiation. There may be times when that is directly significant, but most of the time the exposure is so attenuated by dispersal through the surrounding volume of space that it is not.

But some of the energy will be in the form of sound, which may cause people to change what they are doing and investigate the source – an unprompted Change that may or may not exceed the Critical Threshold – and some will be in the form of light (same), and some will be in the form of heat, and where there was “empty” air, now there is a solid being doing the equivalent of flapping a butterfly’s wings.

The weather will immediately begin changing, though the impact won't manifest (in all likelihood) for a number of days. Other such Chaotic systems may be affected – the Earth weighs infinitesimally more because the mass of a whole new human being has just been added. This will immediately have a minuscule effect on the orbit of the Moon – again, the significance of the change may not be apparent for millennia, but eventually the trend so set in motion will accumulate beyond the Criticality Threshold, and the resulting error will mean that the Moon is thereafter in a slightly different place relative to a timeline in which the space-time travelers did not arrive.

Consequently, the very presence of a Space-time Traveler will have an impact on the timeline in the form of Statistical and Probabilistic events, even if they do not interact with anything.

Consequences

It follows that every interaction between such Travelers and the local inhabitants will qualify as a Significant Event, because those locals are NOT doing whatever they would otherwise be doing. This does not mean that history is irrevocably changed, however; even showing up in the middle of a battle and bringing superior firepower to bear might not substantially alter the outcome, and hence might not reach the Threshold of Criticality. Except in rare cases, the natural resilience of History will dampen the impact.

If a significant interaction takes place, i.e. one with a low Threshold of Criticality – such as telling FDR a few days after Pearl Harbor what the course of the war will be – the timeline may be irrevocably changed. This runs the risk of creating a Paradox.

Paradox

Except that there’s no such thing. The consequence of arrival immediately creates a branch-point in reality because there will always be a version of the timeline in which the Travelers did not arrive. History is therefore sandboxed against paradoxes. Even if one of the Travelers kills one of his ancestors before the next genealogical link towards the traveler’s existence is conceived, that simply means that there will be no analogue of that particular Traveler deriving from that particular timeline. The traveler’s own personal timeline is not affected.

By definition, the traveler can do nothing that would create a paradox in his own personal timeline, or so it seems – at least initially.

The reality is a little more complicated. A traveler from timeline A might not be able to create a Paradox in timeline A, but there is nothing – at first glance – to prevent a traveler from timeline B making such a change and creating a Paradox for traveler A.

Again, it’s not that easy. The only way to prevent a branching of the timeline is for Traveler B to make the transformation of history completely inevitable from the instant of his arrival. Any scope for any alternative to occur will result immediately in the creation of a branch of time in which that alternative does occur – and hence, Traveler A’s timeline is protected from change.

In fact, logic shows that the only timelines which can never be changed with time travel are those in which time travel is discovered!

But the logic is incomplete. Does it really make a Significant Difference if it is traveler A who departs from his native timeline or if he ceases to exist and Traveler C takes his place? Even a Curve 3 change has a limited half-life, beyond which there may have been a Historical Change that has no practical impact on daily existence. There is still a Threshold Of Criticality that protects Time from casual changes. A time-traveler may have a lower Threshold relative to someone who never travels in Space-time, but he has a threshold nevertheless, and while that Threshold may be breached sufficiently to trigger a local change, that doesn’t mean that it will automatically trigger one on the next scale.

Quantum Time

What at first seemed to be a single Critical Threshold is now shown to be a nested series of such Thresholds, each affecting a greater geographic area, and each a more difficult target to achieve than the last. A change, no matter how substantial, will eventually fail to exceed the required Threshold, and the Event Significance will peter out, as in Curve 1.
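The nested Thresholds can be pictured with another hypothetical toy model. Assume, purely for illustration, that the Threshold guarding each wider scale is a fixed multiple (here 10) of the one before it; the scale names and numbers below are my own assumptions, not campaign rules. A change breaks out of scale after scale until it meets a Threshold it cannot exceed.

```python
# Hypothetical scale names, from narrowest to widest.
SCALES = ["immediate family", "small town", "region", "nation", "world"]


def scales_escaped(significance, base=1.0, ratio=10.0):
    """Return the names of the nested scales a change breaks out of.

    Hypothetical model: the Threshold guarding scale k is base * ratio**k,
    so each wider scale is `ratio` times harder to escape than the last.
    Because the Thresholds grow without bound, any finite Significance
    eventually fails one of them and peters out, as in Curve 1.
    """
    if ratio <= 1.0:
        raise ValueError("ratio must be > 1 so the Thresholds keep rising")
    escaped = 0
    threshold = base
    while significance > threshold:
        escaped += 1
        threshold *= ratio
    # Beyond the named scales, label the extra levels generically.
    names = SCALES + [f"scale {k}" for k in range(len(SCALES), escaped)]
    return names[:escaped]


if __name__ == "__main__":
    for s in (0.5, 50.0, 5000.0):
        print(s, scales_escaped(s))
```

Because the loop stops at the first Threshold that holds, no amount of Significance escapes every scale; it only gets further before fading.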

No matter how substantially human history is altered, it is unlikely to have much effect in the 3,975,675,253rd most-distant galaxy from the Milky….

The Upshot

The PCs can do whatever they like and the only consequences of any substance will be the effects on them. All else will eventually fade away, or will generate a new set of timelines for the consequences to Cascade in.

From the moment you accept, as fundamental, the concept of Parallel worlds, History is secure.

Motivations

But wait – what then motivates the PCs to get involved in whatever the GM has planned?

There is this stat called KARMA. Experience points pay directly into Karma, which can be used in all sorts of good ways to benefit the PC who has it, and to benefit any companion. But you only get Karma for engaging in the Adventure in an appropriate manner. Sitting back and doing nothing produces no Karma. Trying to take advantage of a historical situation may produce wealth, or resources, but it produces no Karma – and if you don’t have the Karma to protect a resource, you can’t pay points to obtain it, and the GM is not only free to relieve you of that resource, he is obligated to do so by any means necessary.

That leaves the PCs with little choice. Whatever the situation put before them, they have to do their best to solve it.

Changing a Changed Future

It's also worth pointing out that there is absolutely nothing protecting a changed timeline from being visited at some future point in its history by either the same Travelers or different Travelers. The consequences of mistakes or malfeasance are always at the GM's fingertips, and the PCs can always be too clever for their own good, and subsequently hoist by their own petards.

Predestiny & Prophecy, or ‘What is Karma that you should be mindful of it?’

Those are Metagame concepts, regarding the interplay between the Game Mechanics and the in-game behavior that they engender. How do these things manifest at an in-game level?

If a time-traveler enters a timeline at time C, learns what he did at time B within that timeline, and then communicates that information to an earlier version of himself at time A – and there are no game rules against that – does that trap the character in a Destiny over which he has no freedom whatsoever? Expanding this concept, does that mean that everything the character did prior to time A on his personal timeline was predestined to occur?

In other words, can you bootstrap your future?

The very term, Karma, implies a continuity between past actions and future consequences. Where and when these consequences come into force must be consistent and correct with reference to a traveler’s personal timeline, but nothing is said about the matter in respect of other times and other timelines.

Every PC choice either matters or it doesn't. If it matters, if it makes any form of substantial difference now or in the PC's personal future, there is a causal connection along that personal timeline, and the choice therefore exceeds the extremely low Threshold required to create an alternate timeline in which the PC makes a different choice.

This places the GM in a position to play Cosmic Censor. Any attempt to bootstrap a character in this sort of way puts the GM in the position of either railroading the campaign to ensure that events play out in the manner described by the bootstrap, or of permitting random temporal noise to alter that outcome, or of simply telling the players that their characters now derive from a personal history that does not include the Bootstrapping.

Me, I like option B. Should the PCs attempt such a bootstrap, they can only communicate with the past selves that have not yet played through the events – but, since no such communication was received by them at that time, they are clearly already in a different timeline that has no obligation to follow the events of the past as they have experienced them. So such attempts represent an expenditure of Karma to the benefit not of themselves, but of some NPC analogues of themselves – from the PCs' point of view, a waste of Karma.

This provides an escape clause for the GM to prevent a bad decision on the part of the PCs from completely destroying the campaign – if I foresee such an outcome, I can pull out the "warning from your future selves" bootstrap and throw it in the PCs' direction. And then penalize the PCs at some future point (i.e. the end of the adventure) by forcing the expenditure of Karma to send off that message.

Predestiny? Poppycock.

Prophecy? Only if it is beneficial to the Campaign – and it places the GM under no burden of fulfillment.

Bootstrapping? Only if the GM uses it as a tool to preserve the Campaign.

The only time the game system bites the PCs on the tail is if they attempt to cheat it. Time travel campaigns may be about changing the shape of the future – but the only future that gets inevitably altered by this Time-travel campaign (in the long term) is the personal future of the PCs themselves. Everything else rearranges itself as necessary to generate adventure. Almost as though it were designed that way! Oh wait, it was!

And that is the significance of today's article for everyone else out there.