Pieces Of Creation is an occasional recurring column at Campaign Mastery in which Mike offers game reference and other materials that he has created for his own campaigns.

All images used to illustrate this article are public-domain works hosted by Wikipedia, Wikimedia Commons, or derivations of such works, except as noted.

This article is a work of fiction and no endorsement of the content should be attributed to any of the individuals or institutions named, photographed, or credited.

Author’s Notes: This Alternate History continues right from where it left off last time. The Civil Service has become a millstone around the metaphoric neck of the Empire, and the Empress has embarked on a plan to regain control of her Empire, a plan composed of equal parts inspiration, determination, and desperation…

1987

The new offensive against the Peerage bore unexpected fruit one year into the Empress’ four-year plan. Forcing businesses to focus on environmental issues drove their expenses up dramatically, eating into profits, as expected. This reduced the stock value of the companies affected, leaving them ripe for hostile takeovers by the New Entrepreneurs, also as expected. Politically, this reduced the desirability of the Civil Service/Peerage/Big Business path and weakened the Peerage’s ability to sway public opinion, also as expected, while elected politicians gained in power and influence, also as expected. As the Peerage lost its grip on elected officials, the Empress regained lost ground – all according to plan. In her political planning, she anticipated that the Lower House would eventually come to represent not the Peerage, who had previously funded its members’ election campaigns, but the New Entrepreneurs, who would be funding them henceforth – so that her decrees would be blocked only on those few issues on which both groups agreed. She had a whole raft of civil service reforms prepared and ready to go as soon as they became viable.

The movement of the Dow Jones showing the effect of Black Monday; image by Edward.

Black Monday

But on October 19th, the lesson of History that the Empress had not taken into account delivered a shattering reminder of its importance, as a series of profit-estimate revisions in “blue chip” stocks brought about a massive fall in the Dow Jones index – some 23 per cent – creating a panic that threw the Empire into recession. Hundreds of thousands of jobs were placed in immediate jeopardy across the globe as businesses reacted sharply to their loss of value. Interest rates rose steeply as banks tried to minimize the risks they faced when issuing loans, putting further pressure on business profitability. Many businesses went under, driving unemployment higher still; that increased the perceived risk of other loans, which prompted further rises in interest rates.

The Derailing Of Reform

Instead of concentrating on her agenda for civil service reform, the Empress found herself joining politicians and employers alike in trying to restore sanity to the economy, while the unions waged bitter wars for redundancy benefits, retraining, and other reforms. To prevent starvation, the government was forced to introduce welfare payments on a massive scale; to prevent a total collapse of the health-care system, it was forced to provide a medical rebate scheme offering free health care. All of this meant that the government was spending money that didn’t exist, far in excess of economic growth. That produced massive inflation, driving prices up – and weakening the value of every pound the Government was providing. By year’s end, every pound of expenditure was providing only 89p of value – or, more accurately, for every pound of value needed to maintain a minimum, marginal existence for those affected, the government had to spend £1.13. The Government had unintentionally become the Empire’s greatest “employer” – paying people to do nothing more than look for work – and the Civil Service was more entrenched than ever.

So massive were the consequences that they overshadowed all other news that year – not that there was much to tell. There were the usual assortments of calamities, calumnies, and catastrophes; the usual pointless bloodshed continued with nothing gained on any side; and so on. None of it mattered very much by year’s end, though it seemed important enough at the time.

1988

The political balance within the Empire had changed as a result of the Empress’ manipulations, though, and she had regained much of the throne’s ability to rule by decree. There was an ongoing momentum toward change, which she was able to harness. Although she had not achieved her long-term goal of civil service reform, she was at least able to influence events. She started by dismissing the senior civil servants of the Empire for incompetence and promoting younger public servants to positions of high authority – people with fresh ideas, who had not yet been fully indoctrinated into the culture of the Peerage. Using the New Year’s Day honors list, the Empress demonstrated clearly that the game of Imperial Rule had changed, and that the player whom many thought defeated was staging a thunderous re-emergence.

David Copperfield, noted magician, in a publicity photograph for his 1977 Television Special 'The Magic Of ABC Featuring David Copperfield', photo by ABC Television. Copyright may persist in some countries.

The Honor List of 1988

For many years, the awarding of honors had been under the control of senior civil servants. They were the ones who drew up the lists of potential honorees and controlled the choices, frequently through a “magician’s force”, in which two candidates were offered for each post: the one the peerage wanted and one who was patently unsuitable. While there had been scope for the occasional “extra” to recognize some common citizen who had achieved extraordinary things on behalf of the Empire, these titles were ceremonial and titular, conferring no authority within the peerage.

With the New Year’s Honors of 1988, that changed. Not yet having been taught all the long-established tricks of the trade, many of the new Department Heads had provided two reasonable candidates; where they had not, it was immediately apparent, and the Empress was able to refuse the nominations and insist on two viable candidates. The result was a weakening of the conservatives’ influence even within the Peerage. Another 10 years of the same, and some sort of equilibrium might be reached, though that was hoping for too much, and the Empress knew it, as her memoirs (published posthumously in 2025) reveal.

A window of urgency

The Empress knew that visible change was a political necessity, and very quickly. Firstly, there was the need to restore some optimism about the future of the Empire, to end the economic distress that was an unintentional by-product of her power struggle with the peerage. Secondly, there was the need to recapture public confidence in the ability of the Government to improve the welfare of the common man, lest the rioting and endless succession of coups continue. Thirdly, while the new “Big Businesses” were currently progressive in their attitudes, time and growth would make them increasingly conservative as the political and economic landscape grew to their liking. They would support changes they perceived as being in their best interests, but once those were achieved they would want to maintain the new status quo as strongly as the previous crop had done, so her window of opportunity was limited. And finally, the senior civil servants she had dismissed were still around, as were their predecessors; given time, the new Heads of the Civil Service would learn from them all the old tricks; only the headlong rush of events had left them floundering so far. Having pushed them off balance, she had won a short span of time in which to make changes; if it were squandered, she would soon find that nothing but the names had changed.

So this would inevitably be a year of transition and rapid changes within the Empire.

Symbol of the League of Nations, original image by Mysid and refined by others. Symbol may be trademarked.

100 days of Chess moves

The Empress started by ending the military farce in Afghanistan. The invasion had never come close to achieving its goals, and by providing an ongoing reason for hostility amongst the Arabian population, had encouraged acts of terrorism.

She then rearranged the Civil Service, creating a number of new bureaus and departments to deal with the new technologies and their applications.

She decreed tax benefits for the ongoing training of employees, especially those who would otherwise be retrenched, and changed the priorities of the Space Programme to a more pro-environmental stance.

Finally, she established a new organization, the League Of Nations, to provide a political counterpoint to one of the Peerage’s more subtle but far-reaching advantages, the “Family” network.

The League Of Nations

This last measure requires some further description of the political structure of the Empire, as it had evolved over time.

The Empire had a monarch, an elected lower house, an appointed upper house, a civil service, and a military force – the latter mostly consisting of forces contributed by each member nation.

Each member nation also had a monarch, an elected lower house, an appointed upper house, a civil service, and a military force, as described in earlier sections of this history.

In theory, the national institutions were restricted to dealing with internal issues, and were subservient to their Imperial equivalents. In practice, the Peerages and Civil Services had become unified over the years. The Civil Service of Spain, for example, could be considered nothing more than the “local branch” of the Imperial Civil Service. In particular, intermarriage amongst the traditional peerage meant that any given member could trace some sort of relationship to almost any other member; they were effectively one large family, related by blood to the Empress (the exceptions being the peerages of Africa and the Middle East, who had shown considerable disinclination to marry outside of ethnic bounds).

This of course totally broke down the theoretical independence of the various national peerages, and shows quite clearly why the same problems were experienced virtually simultaneously in all corners of the Empire. It hadn’t mattered much before the rise of modern communications, but coordination of strategies, policies, and planning had become increasingly easy as technology developed.

The elected politicians had no equivalent relationships, which contributed significantly to their ineffectualness.

The “League Of Nations” was intended to rectify that lack and to provide a new channel for diplomatic coordination amongst the member nations. Furthermore, it was inherently self-limiting; if politicians assumed positions of real power over their nations, they would inevitably develop agendas which favored their nation over others, which would generally lead to the development of opposing power blocs within the League.

The Avalanche Of Reforms

Many of the Empress’ moves in the 100 days of reform had been designed to dissolve – or at least, disrupt – the uniformity of structure in the peerage and the Civil Service. The real coup came with a reform of the Peerage.

Elizabeth decreed that the membership of the Peerage should be restricted in number according to the growth of industry within the Empire, rather than by population and socio-economic regionality.

At the same time, she changed the structures of the civil services in many of the key members, on the pretext of trialing various solutions to the problems facing the Empire to determine the best one – but “inadvertently” making them harder to relate to one another except through the Imperial Civil Service. While this shifted power from the locals to the overall Civil Service, it also meant that the flow of information through those offices rose by an even greater ratio. Of course, in a time of economic distress, it was easy to refuse any requests for the expansion of the civil service.

The net effect was to ensure that the Imperial Civil Service had no time to use their theoretically-greater powers – unless they neglected their primary tasks. Any Civil Servant who exercised their authority thus became eligible for dismissal on the grounds of incompetence. The theory was that over time, as the National civil services came to recognize that they had greater effective powers than their Imperial Counterparts, they would begin to assert that authority to their own benefit over those in rival nations, fracturing the overall unity of the Civil Service.

They’re all Domestic Issues

In the meantime, the National politicians were using the disarray of their local peerages to effect their own changes.

The South African government announced harsh new restrictions on the Apartheid policy, clearly taking the first step toward abandoning it altogether.

Ethiopia and Somalia used the avenue provided by the newly-formed League Of Nations to arrive at a peace settlement arbitrated by Denmark, who were seen by both as having absolutely no stake in the outcome, and hence as being as impartial as it was possible to get. This ended 11 years of ongoing border disputes.

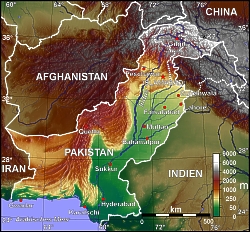

The military forces released by the Afghan withdrawal undertook a series of lightning strikes in the Middle East, designed to force various hostilities into a pause for reflection, and began to blockade various nations in the region whose politics were opposed to those of the Empire – Iran and Iraq, Israel, Afghanistan, Syria, and so on.

This caused an immediate escalation of the ongoing oil shortages, but the blockaded nations suffered immediate economic collapse. Within months, peace talks were underway between previously intractable opponents. At the end of the year, PLO leader Yasser Arafat renounced terrorism as an effective means of instituting change and recognized the state of Israel in a speech to the League, in a largely successful attempt to win political support in the new venue for his claim to “Dispossessed Nation” status.

The emblem of the 'Empire Games' (Commonwealth Games) until supplanted by the Five Olympic Rings. Image by Bill William Copton.

The Last Empire Games: Symbol Of Change

The Empire games of 1988 were especially significant, not only for the sudden wave of optimism that was sweeping the world as a consequence of these changes, but because of a new participant. For the first time, Chinese athletes participated, at the personal invitation of the Empress. Although not overwhelmingly competitive in the events, the Chinese nevertheless began discovering common ground with the population of the rest of the world, and the event was adjudged a magnificent success. Certainly, the Mao reacted to the humiliation they experienced with a determination to succeed that would ensure the process of bridge-building would continue.

This was the last Empire Games per se to be held; at the conclusion of the event, it was announced that it would be renamed the Olympic Games thereafter, and that all nations who were willing to attend the Barcelona Games in four years’ time would be welcome. India, Pakistan, Central America, Japan, and many others could rejoin the international community. This was viewed as potentially the greatest step toward peaceful relations with the Mao in decades.

Only one occurrence marred the Games: Canadian Ben Johnson won the 100m, but was subsequently disqualified for taking steroids. Although it prompted global outrage, this occurrence was not recognized as the ominous portent it would eventually prove to be…

The Exxon Valdez, three days after the vessel ran aground and shortly before the fateful storm. Photo by the US National Oceanic and Atmospheric Administration.

1989

The peerage rallied, as predicted, in 1989. Their chosen battleground was a legal challenge to the reported likelihood of an ecological catastrophe, alleging that the campaign was designed to restrain trade and force them into unprofitable business practices.

This was unfortunate, because two months after the lawsuit was announced, the Exxon Valdez, a fully laden oil tanker, ran aground, spilling more than 40 million liters of oil along the Alaskan coastline. Mathematicians pointed out that probability measures only how unlikely an event is, not whether it will happen, but what little public support the peerage had wilted, and many of their allies decided that discretion was the better part of valor and abandoned them. No-one was fooled; the timing may have forced the traditional businesses further onto their back foot, but they would wait a while and regroup.

OPEC Headquarters Building, Vienna, Austria. Photo by Priwo.

The Coming Of OPEC

The Middle East continued to edge slowly towards a fragile peace. In June, one of the most divisive figures in the region, the Ayatollah Khomeini, died in Iran. Beloved of the religious hard-liners, even his many enemies conceded that the man fought for what he believed in – usually, they added, to the point of obsession. No matter how earnest his beliefs, it was his unwillingness to compromise – and his willingness to treat any who did as an enemy – that had caused the failure of many initiatives intended to heal the wounds. Ultimately, others would take his place as clerical spokesman and intractable fundamentalist, but without his political authority.

The change of government brought about a domino effect, leading the oil-producing nations of the region into a trade coalition designed to regulate oil prices and availability – OPEC. This was the biggest indicator to date that the Arabian nations were serious about peace; in order to be effective, OPEC pretty much presumed a lack of hostilities.

Frederik de Klerk at the annual meeting of the World Economic Forum, January, 1992. Photo and copyright by World Economic Forum.

The End Of Apartheid

Nor was this the only government to change direction completely in response to the changes in the global political atmosphere. The next to fall was the Botha administration in South Africa; although it had moved towards reform in the course of 1988, it had not moved far enough, fast enough, to satisfy the advocates for change who were growing in authority under the reform umbrella. P. W. Botha was succeeded by F. W. de Klerk, who immediately set about dismantling Apartheid and ending years of political repression.

Imperial Prime Minister John Major in 1996, Photo by PFC Tracey L. Hall-Leahy, Courtesy US Department Of Defense

The Winds Of Change

The same sort of thing was happening all over. Poland, Germany, Hungary, Yugoslavia, Bulgaria, Czechoslovakia, Romania, and Russia all moved away from previously hard-line conservative governments towards more progressive representatives. At year’s end came the ultimate expression of the reform movement, as sufficient changes were made in the member nations’ governments to trigger a change of government at the Imperial level. John Major was suddenly the Prime Minister of the Empire – right in line with the decreasing average age of politicians, and ending decades of conservative administration.

1990

The nineties felt like a new beginning, in a lot of ways. The problems of the past were falling away, one after another, and in their place, new conundrums were emerging to trouble the policymakers. The three iconic figures of the 80s were now at their lowest ebb to date; Michael Jackson was a recluse haunted by media allegations of strange lifestyles and pedophilia; Sir Bob Geldof was virtually penniless, divorced, and an often-forgotten man; and Princess Diana, while still a public favorite, was beginning to experience the bloodthirsty downside of what was generally known as the “media circus” or “paparazzi”, as her marriage to Prince Charles began its public disintegration.

Economic Recovery

The economy had staggered back to its feet after the blows dealt it in the 80s and the same mood of cautious optimism was pervading the stock markets and boardrooms, driven more by colorful entrepreneurs than by faceless men in corporate grey.

There was something of the underdog about these flashy moneymen, battling with the corporate greed of the peerage, which lent many of them public support and market share that they would otherwise not have captured. The decade ahead would reveal the economic consequences of this new economy and the fates of a second generation of new entrepreneurs, but at the time, they were riding the crest of a wave, a looming boom and groundswell of confidence not seen since the end of the Third Global War.†

†That’s WWII in our history.

The Middle East: Musical Ideologies

The Middle East continued to experience a transition that had seemed unthinkable only a decade earlier, as the architects of much of the violence moved closer to a moderate position, while nations who had become members of unstable alliances against them reacted by becoming more extremist and distant from the Empire. In particular, Iraq and Israel would start the decade as staunch members of the Empire and end it as mistrusted agitators, not far removed from hostilities. The first part of this transition had become a clear trend in the late 80s; 1990 saw the introduction of the second.

Saddam Hussein as Prime Minister of Iraq, photo by Iraqi State Television.

Iraq: A disintegrating friendship

The concern was all about weapons of mass destruction under the control of nations in an unstable region, and had been since the Libyan flirtation with a nuclear weapons programme in the late 70s. It had been known for years that Iraq had built up vast stockpiles of nerve and biological agents, flouting the Imperial treaty with the Mao, but because they had no delivery systems for these weapons, because the weapons had never been used, and because the Empire needed staunch allies so badly in the region, there had been no urgency in addressing the situation. Furthermore, one of the reasons for the Iraqi regime’s ability to be such a staunch ally was the security that having these weapons under their control conferred. In 1990, that began to change. As peace continued to grow within the region, the Empire’s need for Iraqi support eased; but that alone was insufficient to prompt military opposition to Prime Minister Saddam Hussein, now entering his 12th year as supreme political power in Iraq.

In 1990 the second “great excuse” – lack of a delivery system with sufficient range – vanished. In April, Imperial Security agents intercepted components of a “supergun” bound for the regime. Subsequent investigation revealed that the Iraqis had been quietly acquiring Russian-made SCUD missiles for much of the last decade; these potentially had ranges roughly double those of the Nazi V2 of the Third Global War, which is to say that London was just inside their theoretical range. The SCUDs were conventional high-explosive devices, but a review of intelligence revealed that they could be modified to carry any warhead desired – and that the greatest concentration of the relevant expertise was to be found in Iraq.

None of these were newly-discovered facts; the failure was not one of intelligence gathering, but one of analysis, as different departments of the Civil Service attempted to protect their sources of information and gain an advantage over the heads of rival departments. The Ministry Of Trade knew of the purchase of the missiles, but because Iraq was a member in good standing and one of the few nations in the region allied to the Empire, they knew of no reason to bring the purchases to the attention of the Intelligence Department; the Ministry of Science knew that the expertise in converting SCUD missiles to alternate payloads was Iraqi, but because they had no missiles, saw no need to stress the fact to the Intelligence analysts; and so on.

All this put a new context on a number of side-comments made in speeches and in diplomatic talks with Saddam over the previous decade, in which the Iraqi Leader had repeatedly emphasized the “rich rewards” that would follow from the national support of the Empire without enumerating exactly what those rewards were. Analysts had dismissed this as rhetoric, or had assumed that the rewards in question were the same ones that the Empire foresaw – peace and prosperity, stability and trade. The question of what rewards Saddam believed would be forthcoming had never been asked, let alone answered. Now for the first time, intelligence analysts focused their attention not on the enemies of the Empire in the region, but on one of their allies, and they did not like what they found.

Saddam Hussein had privately expressed the opinion at one point that the conflicts of the Middle East would not be resolved until the region was brought under the control of a single political force; this had been interpreted at the time as meaning “Imperial Control”, but now it was speculated that he believed that Iraq would be rewarded for its support of the Empire by being given control of the dissident nations. He had similarly suggested that placing the control of the disputed Palestinian West Bank into the hands of a third party might be the most viable solution to the problems there – again, not mentioning Iraq specifically, but in this new context, the implications were clear. Saddam had been expecting to receive his “rewards” – but with peace looming without the conquest of rogue nations being necessary, he was preparing to take what he considered his due, with or without Imperial dispensation.

USK Navy F-14A Tomcat over the burning Kuwaiti oil fields - DN-SC-04-15221, photo provided by US Department Of Defense.

The Kuwait Invasion

By the time all this had been uncovered, it was late July. Even as Imperial Intelligence was reporting to an emergency joint session of the upper and lower Houses of Government, Iraqi military forces were staging. On August 2nd, 3 days after the realization of what was forthcoming, and long before a response had been determined, Iraq invaded Kuwait, and within a week had conquered the neighboring nation. Furthermore, the successful conquest had utilized nerve gas on both military and civilian targets. Iraqi forces were lining up to invade Saudi Arabia even as the Empire was being briefed on Iraq’s Kuwait campaign.

Upping The Ante

From out of nowhere (literally), a new factor strode into the centre of Imperial deliberations on a response, as a representative of the Mao appeared before the Imperial Parliament to convey a message from his government: “It now stands clearly revealed that a faction of your Empire has forsworn the agreements held between us. At the time of these treaty violations, they were considered good and faithful servants of your Empress. The Empire Of Greater Britain is clearly in breach of the agreements between our states. You now brand this faction as rebellious, but have taken no action to curb this rebellion. We will generously grant you a brief span of time in which to address this situation, before we declare your default a formal violation of the treaties between us, grounds for immediate action on our part. Understand that our agreements do not recognize individual prerogatives; they are treaties between cultures, and cultures do not change. Should you fail in this most reasonable request, your Empire will henceforth be considered untrustworthy, all agreements between us shall stand as void, and we will undertake whatever actions are required to eradicate any dangers posed by the Empire Of Greater Britain. Your Empire will forever be considered false and forsworn by ours, and we shall eradicate it without quarter or clemency. This is our first, last, and only warning.”

Having issued what amounted to a declaration of War, to be rescinded only if the Empire acted immediately against Iraq, the Mao representative vanished as suddenly as he had appeared.

This put events in Iraq into a whole new context. Within the next 72 hours, the Imperial military was beginning a full mobilization, a complete embargo and blockade of Iraq had been decreed, and the Empire was officially in a state of Civil War. On August 7th, Imperial troops began to arrive in Saudi Arabia. A deadline of Jan 16th, 1991 was announced for the complete withdrawal of Iraq’s military to their former borders even as the military buildup for Operation Desert Storm continued. Imperial policy made it clear to Iraq: if chemical or biological weapons were employed against Imperial citizens, a Nuclear response would be forthcoming, with no further warning.

1991

Iraq again dominated the news in the early part of the year. January 16th came and went with no attempt to meet the Empire’s deadline; accordingly, on January 17th, hostilities commenced. It was afterwards determined that Hussein was so convinced of his own entitlement that he found it impossible to believe that anyone would seriously oppose him, and that reports of the Mao intervention – which left the Empire with no choice in the matter – were discounted as propaganda within Iraq.

This was the first “modern” war, unlike the Afghanistan campaign, which had been fought along traditional lines, and the various civil wars that utilized whatever weaponry was on hand. Ironically, it had been the former relationship between the Empire and Iraq that had left Iraq with a state-of-the-art military apparatus.

The conflict began with exchanges of missile barrages. While Imperial anti-missile technology proved very effective at stopping the majority of the somewhat out-of-date SCUD missiles*, the Iraqi interceptors had considerably less success at dealing with the latest generation of Smart Missiles launched by the Empire. Within 48 hours, the Iraqi airfields were severely damaged and their Air Force crippled, clearing the way for precision bombing runs over key defensive emplacements. 48 hours after these commenced, the ground invasion began, and on the fifth day after the commencement of hostilities, the Iraqis were in retreat, falling back to planned positions – only to find that those positions had been destroyed by the Imperial Air Force. After 5 weeks of military action, Kuwait had been liberated, though the departing Iraqis, in an act of economic barbarism akin to the temper tantrum of a child, had torched hundreds of Kuwaiti oil wells. On Feb 27th, Kuwait City was liberated and the Iraqis defeated.

*It emerged, two decades later, that the criteria used to define a ‘successful interception’ were generous beyond belief – simply launching a missile when an incoming launch was detected wasn’t quite enough to be called a successful interception, but having that anti-missile missile head in the general direction of the attack was. Nevertheless, the outcome was a clear victory for the Imperial forces.

The Dictation Of Terms

Politically, the conduct of the war with Iraq had been dictated by outside forces. Having achieved the objectives that the Empire had set, and unwilling to sustain the high numbers of casualties that would have resulted if Saddam had been forced to employ his weapons of mass destruction, the politicians of the Empire determined that diplomatic and trade pressures would be a more acceptable method of dealing with Iraq.

An ultimatum was issued – the Empire would not invade with the intention of deposing Saddam, provided that his regime immediately acted to destroy their stockpiles of chemical and biological weapons and any facilities capable of manufacturing more, and submitted to Imperial inspection and verification procedures.

There was widespread demand for the reclassification of Iraq’s Imperial Membership status, but that option was restricted to dealing with an inability to administer a nation effectively. Instead, trade sanctions and military isolation zones were established, essentially locking up the entire country within its own borders. It was widely anticipated that a civil war would soon depose Saddam without the need for the Empire to dirty its hands by violating its own charter and principles, especially given that Iraq was dependent on Imperial grain shipments.

The Aftermath Of Victory

News emerging through March suggested that this would indeed be the case, as the people reacted to their defeat and its consequences. The Iraqis responded in various ways: some blamed the Empire for turning against its own, while others blamed their leadership for being so foolish as to challenge the might of the Empire in a fight they were never going to win. Some nursed a burning resentment against whoever they considered ultimately responsible, others responded more actively, and a few looked around for someone to lash out at, their attention falling on the repressed Kurdish minority. Word of the resulting atrocities slowly began to filter out across the Iraqi borders, even through the censorship imposed by Saddam, and in April the Imperial forces established to enforce the blockade created a number of safe havens for Kurdish refugees fleeing the regime.

Publicly, Saddam had agreed to the terms of the Imperial Ultimatum, but almost immediately he began playing games with the Imperial inspectors, hamstringing their ability to pursue their mandate through bureaucratic interference and refusing to permit access to religious sites and his personal palaces. The Empire, for its part, was wary of any military buildup and ready to respond at once to any actual use of the banned weapons, but was otherwise prepared to starve Saddam out.

Map of the war in Yugoslavia, 1993, by Pawel Goleniowski.

An unstable stability

1991 also saw a civil war in Yugoslavia, as Croatia and Slovenia sought independence within the Empire. There was further violence aimed at achieving the same goal in Northern Ireland. Terrorism continued to evolve; where once the goal had been a perpetual wave of small attacks, the trend now was toward fewer acts of greater impact. And the first member of the Peerage fell victim to the economic climate, as Earl Robert Maxwell died under mysterious circumstances; his business and publishing empire, beset by massive debts and financial corruption, collapsed within days.

In hindsight, it is easy to see that the optimism and security felt by the Imperial Citizens of the time were a superficial coating of progress with a perilously-rotted and unstable core lying beneath it. But, as remarkable as it now seems, no one at the time foresaw the inevitable crash.

1992

This was the year that some problems long considered “solved” within the Empire returned to haunt the administrators of the Throne, as racial issues dominated events. The year began with the Empire continuing its hypocrisy, simultaneously recognizing the independence of Croatia and Slovenia while denying Ireland the same treatment. Those setting policy were accused of bending so far over backwards in the name of political correctness that the result amounted to reverse discrimination – the Balkan nations were granted recognition while the Irish were not because one population was of Slavic stock and the other was White. Although this accusation was strenuously denied, it was becoming apparent that this was a de facto policy, brought about because no-one was willing to risk appearing politically incorrect.

South Africa continued to march toward the abolition of the racial division that had marred its political landscape for decades, with a referendum giving the Prime Minister of the beleaguered nation overwhelming backing for his plans to dismantle the Apartheid policies. Meanwhile, a trade embargo was imposed on the rogue state of Libya in an attempt to force it to hand over suspected terrorists.

The race riots of 1992 had an eerily familiar feeling to those who remembered the Watts Riots of the 1960s. This photo from 1965 is by the New York World-Telegram, and has been declared to be in the Public Domain by the US Government.

The Riots Of Los Angeles

On April 29th, racial issues surged to the forefront, as 5 days of rioting began in Los Angeles following the acquittal of a white policeman for the beating of a black motorist. Throughout the Empire it suddenly became clear that all sorts of racial double standards remained in effect, regardless of the laws demanding equal treatment. Ethnic stereotyping and the natural congregation of communities of similar ethnicity had combined to layer separate sub-nations over one another within the same geographic space, and within each of them the law, and social perspectives, were perceived and applied differently. No solutions were obvious, and the problems would remain an undercurrent within Imperial society for the next two decades.

The Imperial Racial Divide

Nor was this phenomenon unique to the USK‡; it was simply more pronounced and obvious there. In London, Pakistani and Indian communities who had taken refuge from the invasion and conquest of their homelands by the Chinese developed their own branches of organized crime. In Australia, militant Aboriginal leaders began forging links with radical groups; although they would later back away from terrorism, the connections forged would eventually prove crucial to the Empire’s ongoing prosperity.

Within virtually every member nation of the Empire, there was an ethnic minority which began to feel oppressed by the majority or its public instruments. It didn’t matter how trivial some of the complaints were; the fact that there was any difference in the treatment of ethnic groups at all was sufficient to arouse heated protest. Only one ethnic stock was not permitted to cry “foul” over its treatment – the Caucasian. These social developments virtually guaranteed the introduction of exactly the type of reverse discrimination that had set off a new wave of violence in Northern Ireland only a few months earlier.

Wanted poster for Serb leaders including Slobodan Milosevic, from the US State Department.

Serbia & Montenegro

So heated were the ethnic divisions in the Balkans that by the end of May, the Empire was forced to impose trade sanctions against Serbia and Montenegro following fierce attacks on Sarajevo, and the Imperial Military – barely recovered from its Middle Eastern excursion – was poised to attack Central Europe. Within the month, diplomatic efforts at finding a resolution had largely been abandoned, and Imperial Troops had captured Sarajevo airport, permitting relief supplies to be airlifted into Bosnia.

For many of those affected, these shipments were the first substantial food they had received in months. As the Imperial hold on the region grew, stories of ethnic cleansing began to emerge which soon cost the Serbs any sympathy or support for their already unstable position. In mid-August, the Empire officially condemned the “ethnic cleansing” in Bosnia and vowed to use force if necessary to deliver humanitarian aid. For a time, the Serbs seemed to back off, but renewed acts of aggression in November prompted the imposition of a naval blockade. On December 21, Slobodan Milosevic became Prime Minister of Yugoslavia. The Empire’s racial problems were about to get a whole lot worse.

Michael Jackson at the Cannes Film Festival 1997. Photo by Georges Biard.

The Crumbling Of Icons

At the time, the election results were hardly front-page material beyond the local area. Instead, the primary hue and cry in the media at the end of the year was over the separation of the Prince and Princess of Wales. By now, all three of the “anointed ones” of the 1980s had fallen on hard times; Michael Jackson’s popularity had ebbed away as lifestyle choices, innuendo, and a rapacious media made his personal life more newsworthy than his music. He had been forced into a hermit-like lifestyle which was inherently abnormal, and then criticized for the abnormality of that existence. No longer the entertainer who had captivated the world, he had become a parody of popularity.

Similarly, Sir Bob Geldof’s musical career had failed to survive the ramifications of Band Aid; no matter what he produced musically thereafter, it was inevitably going to seem shallow in comparison to the weighty issues and the massed icons of popular culture with which he had attacked those issues. His crusade had cost him his marriage, custody of his children, and his career; he would forever live in the shadow of what he had achieved in the mid-1980s.

In comparison, it might seem to the casual historian that Princess Diana had escaped the carnage of the paparazzi relatively unscathed. While headlines had screamed of her husband’s infidelities, the partisan support of the Empress, and the betrayals of personal staff and acquaintances, she had at least managed to retain her dignity and avoid being torn down to the common level. But at last the scandal sheets had real meat for their stories, and what had once been seen as the ultimate expression of hope for the future of the Empire was reduced to an increasingly tawdry divorce proceeding. From December 9th, 1992, the Empire would have to look elsewhere for hope.

The Uffizi Gallery is one of the oldest and most famous art museums in the world. Photo by Chris Wee showing the gallery restored after the bombing.

1993 – A year of extremism

The Balkan situation continued to be a source of trouble throughout the year. The Imperial War Crimes Tribunal was called into session for the first time since 1945 to seek justice for the Bosnian atrocities – when and if the people responsible were captured. Imperial Forces supervised the evacuation of civilians from Srebrenica, hoping to clear the field for combat. In mid-year, six “safe zones” for refugees were created. These immediately became targets for Serbian radicals.

It was also a year of increased terrorist activity elsewhere. A Palestinian extremist bombed the World Trade Centre in New York, killing five people and completing the transition to “mature” terrorism. The extent to which the authorities were still struggling to find a working counter to the threat posed by the new terrorists was shown by the bungled raid by USK police forces on the headquarters of an extremist religious sect in Waco, Texas, after a 51-day siege; 72 people were killed, including many women and children. Just five weeks later, a bomb was detonated outside the Uffizi Gallery in Florence, Italy, killing 6 people. To this day (2055), it is uncertain who was behind the attack and what they hoped to achieve, as several credible groups claimed responsibility.

Smaller Extremism

1993 also saw the emergence of other extremist groups, fighting for smaller, better defined causes rather than one all-encompassing general manifesto. The prototype made its existence known to the world on March 11, when a doctor was murdered by an anti-abortion activist outside a Florida abortion clinic. As people despaired of being able to influence the political policies that controlled their lives, their increased desperation was becoming the breeding ground for more and more extreme positions.

Battle is joined

More than anything else, this showed that despite the Empress’ success in taking back some control over the Civil Servants when it came to major and singular issues, the fundamental apparatus that was the real problem remained whole and intact. It was still the purview of petty bureaucrats and civil servants to interpret and implement government policy in most of the areas that mattered to the ordinary citizen.

To be sure, the principle that the Empress could override or revise policy in any given case, or any specific rule or regulation, had been established; but unless a case came to the Empress’ attention, nothing had changed. To some extent, her victory had simply ensured that elements of the Civil Service were now covertly antagonistic to her continued reign.

And, of course, the Peerage and its industrial allies controlled the media, and the media told a significant segment of the population what to think. The peerage/industry machine had taken several months to formulate its response to the increased political control of the Empire by its head of state, but from this year forward, many stories appearing in the mass media began to carry anti-monarchy undertones as the battle lines were drawn.

'Internet' Icon from the Tango Project, image by warszawianka. Image from the Open Clipart Library http://openclipart.org/

Birth of The Internet

But this was also the year in which the tools which would ultimately lead to the overthrow of the civil service and the reinvigoration of the Empire by its citizens began to achieve popular acceptance. There was a new conduit for information coming into existence – one which linked people together directly, and which bypassed the media barons’ spin doctoring. Although it would take many years to mature, the age of communications had achieved its ultimate expression: The Internet.

Cropped Photograph of Srebrenica in August 2004 by Samum. At first glance, an idyllic setting, but note the missing roofs and damaged buildings in the foreground.

1994

It seemed to many that one troubled region (the Middle East) had been calmed only for the Balkans to take its place. Ongoing unrest in Bosnia dominated many of the 1994 headlines. In February, a mortar attack on a marketplace in the capital, Sarajevo, killed 68 and wounded over 200, and in March, Serbian forces bombed the “Safe Zones” of Gorazde and Srebrenica. In April, the Imperial military retaliated with air strikes against the Serb forces at Gorazde, and the conflict returned to a slow boil. In December, a cease-fire accord was finally reached.

In general, it’s fair to say that the conflict had little impact on events in the Empire overall. Fighting in a relative backwater of little strategic importance was not something to overly disturb the daily routines of so vast a culture.

The key phrase, according to later critics, was “of little strategic importance”; the Empire, they would allege, had grown so unmanageable that it had no capacity left to fight for the ordinary citizen, only for the larger ideological conflicts. This criticism overlooks the obvious – that any conflict in which there was a confluence of interests would naturally receive greater support from those who held a vested interest. Thus, the peerage (whose economic alliances were threatened by any disruption of oil supplies) would enthusiastically support any action aimed at achieving stability in the Middle East, but their support would be far more lukewarm for an issue of relatively pure morality, with no greater economic impacts in prospect. No conspiracy is necessary when there is an overlapping of purposes.

Peace at last?

But in one particular part of the world, also renowned for its ongoing violence, the pointlessness of the unrest was recognized by some unexpected observers. The year seemed like any other in the Middle East at first; in late February, over 50 Palestinians were killed in a Hebron mosque when an Israeli settler opened fire with an automatic weapon. A week later, six Israelis were killed by a Palestinian sniper. It all seemed so pointless to both sides, and each condemned the violence as ‘unproductive’, a refreshing change of perspective. On May 4th, Israel and the PLO signed an agreement giving Palestine self-rule in the Gaza Strip and Jericho.

Former nuclear test site Maralinga, South Australia. Photo by Wayne England, who participated in the clean-up, April 2007. Note: the colors are correct.

The Thorny Issue Of Reparations

1994 saw developments in the racial issues which had been edging toward a resolution for some time. In South Africa, the African National Congress won the first free elections. The transition to black self-rule was now complete.

The Australian Government agreed to pay £7,000,000 to South Australian Aborigines displaced by the nuclear tests of the 1950s and 60s, and the following week, the New Zealand government offered £400,000,000 in compensation to the Maori tribes displaced by the arrival of European settlers.

These settlements were a disquieting development to many, opening the door to compensation lawsuits by many other groups, even as they addressed passionately-held sources of tension. Most fervently opposed to such measures was the USK, which knew that any serious attempt at reparations to its Native American and African-American populations would bankrupt not only the country but the entire Empire.

The contentious questions remained. How far should any reparations be discounted for the acknowledged benefits of participation in modern society? How much should be discounted because the events in question took place in a different time, when different behavior and practices were deemed acceptable and right, and to which a more modern standard of morality could not be applied? How responsible were modern generations for the failures of those in the past who could not know “better”? In other words, how much of the demand for reparations was the result of greed alloyed with hindsight?

Those who opposed reparations in principle were always going to struggle to persuade others of their position, since the events of the past few years had made it clear that racial equality was not a “solved” problem; but those who supported them, even in principle, had an even more difficult obstacle to overcome: the limits of practicality. Realism dictated that some compromise would have to be reached, a compromise that neither side was willing to contemplate.

One proposed solution placed a statute of limitations on the offences, but no agreement could be found on where the dividing line should be drawn. Another applied a fixed discount rate for each year since the act of inequality was committed, on the basis that ongoing processes of social reform had already provided a growing share of any compensation owed. Since this applied the greatest discounting to the most expensive claims, it met with considerable private support; but since it would fail to achieve any of the social ambitions of the most vocal and aggressive reformers, it failed to gain traction amongst the “victims”.

In truth, neither side was willing to abandon the pulpits of exorbitant rhetoric that the issue provided. Any solution would have to be imposed from higher up the policy food-chain, but the leaders of the Imperial Government had more than enough to contend with in the modern-day problems of the Empire.

The Rise Of The Internet

Communications technology continued to grow apace; by the end of the year over 15 million people were connected to the internet. From this year forwards it would be considered a mass medium. With it came a surge of excitement in the business world, as any business connected with the internet seemed to be poised for mammoth profits.

It was quite literally possible for a startup with nothing more than an idea to make its founder a multimillionaire overnight. The IT sector was exploding.

But 1994 was not without its warning bells in this area; for this was the first year in which a new subject was mentioned in the specialty press, a subject that would end the century on everyone’s lips: the millennium bug.

1995

Conflict resumed in Bosnia as the cease-fire agreement broke down. After months of pointless bloodshed, an agreement was reached for the partitioning of Bosnia-Herzegovina into separate nations, one for the Muslims and Croats and one for the Serbs. This was the only practical solution, but at the same time it aggravated the minority populations in both of the resulting nations, who felt disenfranchised as a result.

The New Terrorism

The transition to “the new terrorism” was completed, as exemplified by the only three serious terrorist incidents to occur in the course of the year. In the first, a religious fringe group conducted a nerve gas attack on a crowded Los Angeles subway, killing 10 and injuring thousands; three months later, the leader of the Supreme Truth cult would be arrested for masterminding the attack. A month after the subway attack, a terrorist bomb in Oklahoma City killed 158 and injured hundreds. The third, coming late in the year, involved a radical right-wing Zionist sect which carried out a surgical strike on the Israeli cabinet. The Prime Minister and a number of aides were killed, and hundreds more were injured.

The Price Of Profit

Even more significant, though less dramatic, were the consequences of a decade’s gradual transition in economic circles. Traditional businesses had been forced to react to the depredations of the “New Entrepreneurs” by adopting many of the same philosophies and practices.

In particular, many had abandoned a policy of growth through capital acquisition and an expanding customer base in favor of treating these as mere seed capital for investments, which would then generate incredible profits for the owners and shareholders. In the process, many of these corporations’ traditional values, such as customer service, had been thrown aside as “uncompetitive business practices”. The credo had become “profit at any expense”. As the demand for ever-increasing profits bred unrealistic expectations, greed, and overconfidence, it was inevitable that someone would go too far.

There had been a number of near-misses, covered up by higher authorities lest confidence in the economic system be eroded and a depression result; but in 1995 there occurred a loss so vast that it could not be concealed: the total collapse of Barings Bank after losses of more than £800 million by trader Nick Leeson. Tragically, this warning sign was misinterpreted by the management of other corporations as a breakdown of the audit systems, the procedures that were supposed to ensure accountability and limit losses. The corporate culture that had caused the breakdown would not be examined until it was far too late.

The Privatization Fallacy

The profits to be made by listing companies on the stock exchanges were so vast that even governments had gotten into the act, privatizing many essential industries in a direct reversal of policies that had been in place, and considered incontrovertible, only two decades earlier.

Industries that had been nationalized, because their continued functioning was deemed essential to society, were now privatized for vast sums to retire national debt that had accumulated over decades.

Of course, as soon as they were in private hands, the same credo – “profit at any expense” – came into operation, and with it, vulnerability to all manner of economic ills. On the surface, the economy was stronger than ever; driven by booming technology stocks, it was growing at unprecedented rates – 1000% a month was not unheard of in extreme cases and sectors – but at its core, the economy was rotten, and the first stiff wind would result in the loss of branches, if not the collapse of the whole. And hurricane season was fast approaching.

The beautiful Lagoon at Mururoa Atoll, scene of a series of French Nuclear Weapons tests in the 20th century. Photo by Georges Martin, 10 May, 1972.

1996 – collapse of the house of sticks

Politically, the slow boil within the Empire continued, as Chechen Rebels seized 3,000 hostages in the Russian town of Kizlyar.

France continued a series of tests of Nuclear Weapons in the Pacific aimed at giving them a Nuclear Arsenal independent of Imperial control.

The IRA called off the cease-fire that had endured for 17 months, just as the rest of the world perceived genuine hope for a peaceful resolution of the ongoing conflict.

A series of rapid-fire suicide bombings in Israel killed 31 people and injured over 100. Following the fourth attack within a fortnight, Israel announced that all peace agreements with Palestine had been abrogated by the Palestinians, and invaded to reclaim the territories to which it had granted independence. Within a month, an Israeli rocket airstrike had hit an Imperial base in Lebanon, killing 105 civilians and turning the political clock back to the darkest days of the 1960s.

1996 was also the year that the Prince and Princess of Wales petitioned the courts for divorce.

Emerging Social Trends

A number of trends that had been building for years made public debuts in the course of the year. Legislation aimed at controlling the Internet began to appear, but these were national laws (or, worse yet, local laws), and as such, completely unenforceable against a network that ignored national boundaries. These were the first indications of one of the dominant themes of a new era in Imperial History (and as such, will be discussed more fully in a subsequent chapter of this history): the rise of internationalism.

The quality-of-life debates that had been growing in intensity for decades came to a head as the Northern Territory of Australia passed legislation permitting terminally-ill patients to instruct their doctors to end their lives, despite Imperial and National laws against assisted suicide. Significant not only in the quality-of-life domain, this was a further manifestation of the theme of the years to come, as the interaction of laws at different hierarchical levels within the Empire, and some of the Empire’s fundamental assumptions, were called into question.

Resistant Diseases

But the biggest themes of the news year were medical developments. The warning by the Imperial Health Authority of an imminent potential plague of antibiotic-resistant strains of tuberculosis called into question 60 years of accepted medical practices. By the year’s end, resistant strains of many other diseases would also be generating headlines, as would the arrival of new, more persistent strains of diseases long considered to be of minor importance, in particular Legionnaires’ disease.

Up to 10 million sheep, pigs, and cows were destroyed in Britain before the Foot-and-mouth 'epidemic' was brought under control. Similar scenes took place in most of the Empire. Photo provided by Lawrence Livermore National Laboratories, USA.

The Mad Cow Nightmare

This followed the announcement in January of an outbreak of foot-and-mouth disease in Britain and Europe, and the admission by the Imperial Health Authority in March that the “Mad Cow Disease” could be transmitted to humans through eating contaminated beef products.

This, for the first time in history, raised the specter of an epidemic that took advantage of the existence of the Empire. While there were customs laws and inspections when shipping goods from one country to another, the fact that they were all members of an active and overriding political organization meant that these were far less stringent and restrictive than would otherwise have been the case. A massive programme of testing every herd in the Empire would be announced in December; but by then, France, Germany, Spain, Portugal, Austria, Denmark, Norway, Switzerland, South Africa, Tanzania, Zanzibar, Argentina, Brazil, Mexico, and Canada would all have confirmed outbreaks.

Mercifully, thus far, Israel, the US, Australia, and New Zealand all appeared to be free of infection; immediate bans on the import of beef, beef products, and fodder were put in place to keep them that way, while harsh countermeasures derived from long-standing policies on anthrax infections were undertaken. A single instance was deemed sufficient cause for the slaughter and incineration of the entire herd.

Beef prices throughout the majority of the Empire collapsed, and untainted beef became a luxury commodity. The USK reserved the bulk of its beef production for domestic usage, over considerable protest; exports from Israel were limited by both political concerns and practical difficulties; and that left the antipodean supply as the only safe source, a fact that the national governments immediately began to take advantage of. Short-sightedness squandered what could have been a huge windfall, however, when additional export charges failed to distinguish between live cattle and cattle for slaughter; some of the herds reaching affected ports were immediately diverted from the slaughterhouses to use as breeding stock. It would take a decade, but the domestic herds would eventually be repopulated from uncontaminated sources and, aside from an occasional isolated outbreak, rebuilt.

This did not happen, however, before public dietary patterns had been fundamentally changed. Lamb and mutton production had grown quickly to fill much of the gap left by the virtually vanished beef industry, and mutton and chicken would be the dominant meat sources for most of the Empire for decades to come.

Mad Cow and the USK

Willie Nelson, one of the primary organizers of the original Farm Aid benefit concert. Photo by Larry Philpot of www.soundstagephotography.com

The Agricultural sector of the USK economy, despite being the largest employer in the nation, had been struggling for more than a decade. It is not insignificant that one of the first imitators of “Live Aid” had been the rather more topically-focused “Farm Aid”, targeting support for family farmers in the USK in danger of losing their farms through Mortgage debt. A concert was organized for Sept 22, 1985, less than a year after the event that was its direct inspiration, and quickly evolved into an annual event (missing 1988 and 1991).

Responses to the Mad Cow crisis in 1996 were consequently more varied than might be expected. Some primary producers saw the event as a vindication of the superiority of the USK over the rest of the world, and argued against any increase of exports, a view that – when stripped of the excessively-nationalistic rhetoric – would ultimately prevail. Others wanted to trade uncontaminated beef for political concessions at the Imperial Scale, while some wanted to impose additional taxes on beef exports to raise funding to be spread as relief payments throughout the agricultural sector.

Nervous commodity markets immediately discounted the value of Beef stocks, which in itself imposed new economic pressures on the farmers, but which did not go as far as the near-total collapse of prices in the realms to both the north and south of the USK, where outbreaks had been confirmed.

This immediately produced a black market in cheap – questionable – beef shipments into the US. Rumors of these shipments began circulating almost immediately, further depressing an already deflated market and further lowering public confidence in the beef industry. It was concern that inflating the value of beef further would only encourage these unsafe practices that ultimately killed any prospects of the USK using the international demand for beef to solve its domestic agricultural problems.

1997

The “Mad Cow” catastrophe went from bad to worse as it was discovered that the soil itself could harbor the infectious agent. This discovery was made as farmers attempted to replace their herds, only to have the disease re-emerge in cattle who had been tested and certified “clean”. It was clearly necessary to not only slaughter an entire affected herd, but to sterilize the soil on which they had grazed and to quarantine the affected farm for a period of 6 months – draconian measures that aroused storms of unrest amongst the public.

Even though all produce now came only from farms tested and declared free of the disease, the domestic beef market in much of the Empire collapsed to such an extent that it would be a decade before it fully recovered. But with these harsh measures stringently applied, the threat posed by the disease was clearly receding by mid-year.

Only then did the government begin to examine closely the causes of the original problem, seeking answers to two questions: where had the infection come from, and what could be done to ensure that it never happened again? The answers would not be as forthcoming as Imperial analysts expected, and would not become public for years.

The sea of flowers left at the gate of Buckingham Palace in memorial to Diana, former Princess of Wales, speaks to the affection in which she was held. Photo by Maxwell Hamilton.

The final bloom of the ‘English Rose’

This was the year in which the fairytales came to an end. Following her divorce the previous year, the former Princess of Wales began keeping company with Dodi Fayed, son of the owner of Harrods (and many other businesses), and the shy smile that had captured the sympathies of millions returned with increasing frequency.

But the divorce had left her vulnerable to the predations of the paparazzi, and increasingly desperate measures were needed to protect the couple’s privacy. One rainy night in August, after the couple had been drinking at a restaurant, the press again caught up with them; the couple drove off at high speed, pursued by reporters and photographers. On a road made greasy by the rain, the driver lost control of the powerful BMW, which struck a tunnel upright; the pair were killed instantly.

This was one of history’s critical moments. Diana died before the press, despite their best efforts, were able to tear down her reputation for the sake of headlines, and in her passing she was anointed a saint by the public. For the second time in the century, the Empire stopped for a few hours: in 1969 it had been for the first landing on the moon; in 1997 it was for the funeral of the embodiment of the promised future. Even those who felt distant from the monarchy found in those days that the world was a sadder, greyer place.

The Crown In Crisis

Had the Imperial Family behaved differently, the outpouring of support might have shored up the rule of the Empress Elizabeth, or even that of the country’s future monarch, Charles. But it was widely held that they were responsible for the circumstances that led to Diana’s death, and they instead found popular support for their rule markedly declining.

In part, this was driven by a hostile press, who were willing to attack anyone for headlines; in part it was driven by hostile media owners, who had come under attack by the Empress; and in part, it was fully deserved.

The Empress had been so busy with the ongoing battles with Government, and Peerage, and emergencies, and Civil Service, that she had lost touch with her subjects. A blinkered view of the deteriorating relationship between the Imperial Family and Diana, and an old-school conviction that her feelings should be kept private, had gradually left her out of step with what modern citizens expected of their rulers and public figures. This, more than anything, had been at the heart of many of the conflicts between her and Diana; she had considered the Princess’ behavior excessively demonstrative, consistently outrageous, and perpetually verging on the exhibitionist.

These problems were compounded by a situation in which it was not considered etiquette for her servants to correct or even advise her; on the contrary, she was supposed to advise them. It took someone who was not afraid to be critical of the Imperial Family, even to discard protocol completely, to correct the situation.

Prime Minister Tony Blair at the White House, 2001. Photograph by Paul Morse, made available by the Executive Office of the President of the United States.

An unlikely savior

Fortunately, there was such a person at hand: the newly elected Prime Minister of England, Tony Blair, who had in the past been highly critical of the role of the Imperial Family. It was Blair who explained to the Empress his ‘theory’ that Princess Diana had been so popular because the public could identify with her. Distance and enforced deference were barriers that had been erected between the Imperial Family and the public; but if she so desired, the current climate of discontent offered an opportunity to reconnect with them. All that was necessary was to discard the outmoded policy of presenting herself as an impersonal throne and instead let the public see the Monarch as a person. “Of course,” he is reported to have said, covering the breach of protocol, “I am sure that this is nothing that has not occurred to Her Majesty.” Until this moment, the generational gap had been the cause of considerable disrespect toward Blair behind the scenes at Buckingham Palace; now that barrier fell away, and the two entered into a new and more cooperative relationship, one that would reinvigorate the connection between Ruler and Ruled.

This was a policy that Prince Charles had also been advocating, something that he, ironically, had learned from Diana herself. But it forced on the Empress a very hard choice: she could rehabilitate the Monarchy’s image by humanizing herself, but in doing so, she would entrench the public perception of her son as unworthy to inherit, a view deriving from the tawdry infidelities that had caused the marriage to Diana to break down in the first place; or she could attempt to rehabilitate his image despite this additional handicap, risking the Empire itself should they fail to win back the support of the people.

The decision of destiny

By the end of the year, it was clear to the Empress that should Charles ever succeed her, he would preside over a hollow shell of what had been. She had originally intended to retire in favor of her son on her 60th birthday, but circumstances left her no option but to continue beyond that date until her grandson, Prince William, reached the age of majority. On William’s 21st birthday, he would be crowned Emperor of Greater Britain.

It was Prince Charles who made the decision for her, pointing out that if he abdicated his right to inherit, he could follow his heart and marry Camilla Parker-Bowles, the woman he loved, an outcome with which he would be more than satisfied. Given a choice between the two, he would choose happiness over the throne: a choice that Elizabeth herself might have made, but one that she had never had the opportunity to explore. Only when the Empress’ memoirs were published in 2025 would the world learn that she had, in fact, already decided to act as she subsequently did.

So ended the Age of the three anointed saints of the late 20th century. What had started as an absurdly popular recording of dance music had ended in the disinheriting of the heir to the British Throne.

