A word of advice: Each part of the series builds heavily on the content from the previous one. While you may be able to get relevant information without doing so, to get the most out of each, you should have read the preceding article.

Welcome & General Introduction

So, here we are – the six parts that have preceded this one have delivered us to an era that can generally be considered now. I’ll go into the specifics of what changed and how it affected the economy in the next part – because, at last, we’re up to the blog post within this series that I originally intended to write.

You see, a while back I started seeing a lot of ill-informed vitriolic posts on social media about the US Economy, specifically the interest rates and the terrible job that President Biden was doing with the economy.

At much the same time, Interest Rates were experiencing sustained and regular rises in England, where similar rhetoric was on display – just a little toned down.

And Australia was also experiencing a similar phenomenon, creating what’s being described as a “Cost Of Living Crisis”. But our interest rate rises were more cautious about potentially creating a recession, and so – while in the US they have now moderated to something America can live with, and the rhetoric has shifted focus – Interest Rates remain high here – and the opposition have tried to employ similar rhetoric (but it hasn’t worked).

As I was reading the umpteenth variation on the theme, it was becoming more and more apparent that a lot of people have no idea how and why interest rates are set, and how economies work. And I thought to myself, “What a pity that it’s not suitable for a Campaign Mastery post that could explain it all – more or less.”

And then I realized that every modern game out there has, or should have, some form of economy, and that GMs should have at least a basic idea of how they work, and so it was a suitable topic, after all.

But I thought that it needed context to make it relevant to non-modern RPGs, and so the rest of the series was born.

A disclaimer: I am not an economist and I’m not trying to turn anyone else into an economist. An awful lot of this content will be simplified, possibly even oversimplified. Bear that in mind as you read.

A second disclaimer: I’m Australian with a working understanding, however imperfect and incomplete, of how the US Economy works, and an even more marginal understanding of how the UK economy works (especially in the post-Brexit era). Most of my readers are from the US, and number two are Brits. Canadians and Australians fight over third place on pretty even terms, so those are the contexts in which what I write will be interpreted. And that means that the imperfection can become an issue.

Any commentary that I make comes from my personal perspective. That’s important to remember. Now, sometimes an outside perspective helps see something that’s not obvious to those who are enmeshed in a system, and sometimes it can mean that you aren’t as clued-in as you should be. So I’ll apologize in advance for any errors or offense.

I’ll repeat these disclaimers at the top of each part in this series.

Related articles

This series joins the many other articles on world-building that have been offered here through the years. Part one contained an extremely abbreviated list of these. There are far too many to list here individually; instead check out

the Campaign Creation page of the Blogdex,

especially the sections on

- Divine Power, Religion, & Theology

- Magic, Sorcery, & The Arcane

- Money & Wealth

- Cities & Architecture

- Politics

- Societies & Nations,

- Organizations, and

- Races.

Where We’re At – repeated from Part 3

Along the way, a number of important principles have been established.

- Society drives economics – which is perfectly obvious when you think about it, because social patterns and structures define who can earn wealth, the nature of that wealth, and what they can spend it on – and those, by definition, are the fundamentals of an economy.

- Economics pressure Societies to evolve – economic activity encourages some social behaviors and inhibits others, producing the trends that cause societies to evolve. Again, perfectly obvious in hindsight, but not at all obvious at first glance – largely because the changes in society obscure and alter the driving forces and consequences of (1).

- Existing economic and social trends develop in the context of new developments – this point is a little more subtle and obscure. Another way of looking at it is that the existing social patterns define the initial impact that new developments can have on society, and the results tend to be definitive of the new era.

- New developments drive new patterns in both economic and social behavior but it takes time for the dominoes to fall – Just because some consequences get a head start, and are more readily assimilated into the society in general, that does not make them the most profound influences; those may take time to develop, but can be so transformative that they define a new social / political / economic / historic era.

- Each society and its economic infrastructure contains the foundations of the next significant era – this is an obvious consequence of the previous point. But spelling it out like this defines two or perhaps three phases of development, all contained within the envelope of a given social era:

- There’s the initial phase, in which some arbitrary dividing line marks the transition from one social era to another. Economic development and social change are driven exclusively by existing trends.

- There’s the secondary phase, in which new conditions derived from the driving social forces that define the era begin to infiltrate and manifest within the scope permitted by the results of the initial phase.

- Each of the trends in the secondary phase can have an immediate impact or a delayed impact. The first become a part of the unique set of conditions that define the current era, while the second become the seeds of the next social era. There is always a continuity, and you can never really analyze a particular period in history without understanding the foundations that were laid in the preceding era.

The general principles contained within these bullet points are important enough that I’m going to be repeating them in the ‘opening salvos’ of the remaining articles in the series.

1. Inflation & The Economic Thermometer

Simply put, Inflation measures how much faster an economy is growing. In a system tied to a standard, like gold or silver, the number is relative to expectations, which define how much currency needs to be printed and put into circulation.

In economies with a floating currency, if you print too little, it just means that the value of the currency rises to compensate, and vice-versa – but that affects all sorts of other things if you buy or sell anything internationally, so a controlled inflation rate remains absolutely critical to keeping an economy stable.

Negative inflation rates – deflation – are undesirable. It’s the same thing (essentially) as setting interest rates too low, which I’ll get to a little later.

Pandemic

That’s what happened in the pandemic – people weren’t going out, lots of businesses were closed, money wasn’t being spent, and the economy started to crash.

Stimulus

To fight that, governments issued economic stimulus in various forms. This artificially stimulates the economy, but it increases the total purchasing power of the sum of all the money in the economy. That’s inflationary – a good thing in this scenario – but once the cause of the slowdown ends (lockdowns, in this case), all that extra money starts to circulate, and the economy starts to overheat.

Post-Pandemic Inflation

The law of supply and demand means that this expenditure is also inflationary, so inflation spiked, all over the western world – especially as supply chains had been disrupted and would take a while to get back to normal. That meant that supply was down just as demand was going up, and that results in higher prices – which cause inflation.

I’ll cover a whole truckload of economic vulnerabilities a little later – this is just supposed to be a general introduction to the subject.

So, economic managers – whatever their job title – within any given government will make it their business to know what the economy is doing, so that they can intervene if necessary.

2. What’s More Important than the current Inflation Rate? Tomorrow’s Inflation Rate!

To be honest, what the inflation rate is now is not all that important. What matters is what it’s going to be – assuming that you do nothing – and how that forecast is affected by economic management (i.e. doing something).

You see, the ‘current’ inflation rate is the result of what has already happened within the economy. It’s too late to change it. What matters is how you respond to it.

Because deflation is so nasty, the usual practice is to aim for a modest, consistent, economic growth, and matching low levels of inflation – generally, 2-4%. If your inflation rate is higher than this, you’re in an economic boom – and those are usually caused by an economic “bubble” of some sort that will eventually burst. And that’s bad.

To forecast what Inflation is going to be, you need to identify a trend. And a good starting point is the change in the inflation rate now compared to what it was, the last time you measured it.

But it’s not that simple.

3. Crystal-ball-gazing, Economic Style

Measuring Inflation is never as easy as it is made to sound on the TV News. Predicting what it will be is even harder.

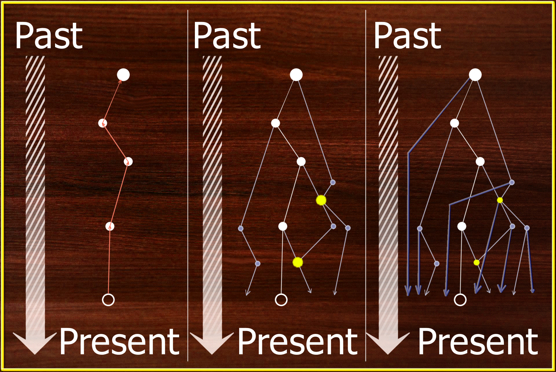

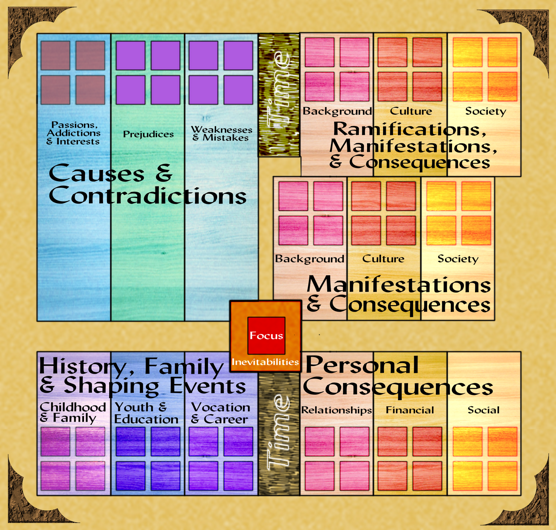

Here’s a set of charts that I put together to illustrate the problems.

Figure 1 is the ideal situation. It shows three measurements of inflation – one very good figure, and two with a margin of error.

You usually get a very good number once a year, or once a quarter, and two less reliable figures in the months that follow.

But the core numbers are solid and show that in period 1, inflation rose by a small amount, and in period 2, it went up by a lesser amount. It then projects that last measured change forward to what it will be at the end of the year/quarter, if it keeps changing at the last measured rate of change.
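The projection in figure 1 amounts to a straight-line extrapolation of the last measured change. Here is a minimal Python sketch of that idea. The readings, the two-period horizon, and the function name `project_inflation` are all made up for illustration and are not taken from the charts.

```python
def project_inflation(previous: float, current: float, periods_ahead: int) -> float:
    """Carry the last measured change forward for a number of periods."""
    last_change = current - previous
    return current + last_change * periods_ahead


# Hypothetical inflation readings in percent: one solid figure, then two updates.
readings = [3.0, 3.4, 3.6]

# Inflation rose by 0.4 in period 1 and by a lesser 0.2 in period 2.
changes = [later - earlier for earlier, later in zip(readings, readings[1:])]

# Project the last change forward two more periods (an assumed horizon).
forecast = project_inflation(readings[-2], readings[-1], periods_ahead=2)

print(f"Recent changes: {[round(c, 2) for c in changes]}")
print(f"Projected end-of-period inflation: {forecast:.1f}%")
```

With these assumed readings, the projection lands at 4.0%. The point is only that the forecast inherits whatever the last measured change happened to be.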

Figure 2 allows for a margin of error upwards. Figure 3 adds a margin of error downwards. The bar on the right shows the level of fuzziness this introduces in the forecast. Based on this, you’d probably not see an intervention as warranted.

But there’s that pesky margin of error. In figure 4, I project the results if the current number is at the extreme low of the margin of error, and the previous result was at the extreme low, at the estimate, and at the extreme high. And it paints a very grim picture.

Figure 5 does the same thing based on the true value currently being at the top of the margin of error. And its results are equally nasty.

But those are unlikely extremes. If you put the two together, you can actually map out a bell curve, just like you would get with (say) 3d6. The center of the curve is right at the point of our original estimate, spreading out to either side by the original fuzziness. Based on that, you still probably wouldn’t intervene.
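The extreme cases in figures 4 and 5 can be enumerated directly. The sketch below is a rough illustration only: the two estimates, the margin of error, and the projection horizon are assumed values, and `project` is a hypothetical helper that extends the last change in a straight line.

```python
from itertools import product


def project(previous: float, current: float, periods_ahead: int) -> float:
    """Straight-line projection of the last measured change."""
    return current + (current - previous) * periods_ahead


# All values are hypothetical, in percent.
previous_estimate = 3.4
current_estimate = 3.6
margin = 0.2          # assumed margin of error on each fuzzy reading
periods_ahead = 2     # assumed number of periods left to the next solid figure

offsets = {"low": -margin, "estimate": 0.0, "high": margin}

# Every pairing of (previous reading, current reading) within the margin.
scenarios = {
    (prev_name, curr_name): project(
        previous_estimate + prev_offset,
        current_estimate + curr_offset,
        periods_ahead,
    )
    for (prev_name, prev_offset), (curr_name, curr_offset) in product(
        offsets.items(), repeat=2
    )
}

for (prev_name, curr_name), value in sorted(scenarios.items(), key=lambda kv: kv[1]):
    print(f"previous={prev_name:<8} current={curr_name:<8} forecast={value:.1f}%")

lowest = min(scenarios.values())
highest = max(scenarios.values())
central = scenarios[("estimate", "estimate")]
```

With these assumed numbers, the forecasts span 3.0% to 5.0% around a central 4.0%. The two corner cases need both readings to be wrong in opposite directions at once, which is why they sit out in the thin tails of the curve.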

But how do you know? Well, you can compare the fuzzy figure at your next measurement to the current one, and it will indicate the current trend. If it’s a, the end-of-period number will be the alarming a1. If it’s b, the forecast will be the still-serious b1. If it’s c, the forecast will be the alarm-bell-ringing c1.

But it’s still not that simple. Numbers need to be adjusted for seasonal variations, which means taking whatever your forecast says and guessing what it will be once those only-fuzzily-known errors are taken into account.

And, on top of that, the reason that there’s a margin of error in the first place is that a lot of the contributing economic factors aren’t precisely measured until the end of the period. Until then, all you’ve got are estimates and indicators, producing an estimated number.

4. Underlying Causes and Trends

The obvious thing to do is to look at the constituents, and use the estimates and indicators to track trend lines in those economic variables, and from that, try to work out what part of that bell curve you are likely to be heading for.

They never quite match up with reality. Think about those 3d6 again, and pretend that each d6 represents one of these underlying constituents. The average of a d6 is 3.5. So tell me then, how many of those d6 are actually going to roll 3.5?

That’s right – none of them. The best that you can hope for is that if one comes out particularly low, another will be high, and the whole thing will balance out in the end.
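The dice analogy is easy to check by brute force. This short Python sketch lists every possible 3d6 roll, confirms that no individual die ever shows the 3.5 average, and prints the bell-shaped spread of totals. The variable names are illustrative only.

```python
from collections import Counter
from itertools import product

# Every possible result of rolling three six-sided dice.
rolls = list(product(range(1, 7), repeat=3))
totals = Counter(sum(roll) for roll in rolls)

average_total = sum(sum(roll) for roll in rolls) / len(rolls)
dice_showing_average = sum(1 for roll in rolls for die in roll if die == 3.5)

print(f"Average total: {average_total}")
print(f"Dice that ever show 3.5: {dice_showing_average}")

# Text histogram: one '#' per way of rolling each total.
for total in sorted(totals):
    print(f"{total:>2}: {'#' * totals[total]}")
```

The totals cluster around 10 and 11 even though no single die can land on its own average, which is the balancing-out effect described above.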

These contributing trends are called “underlying causes of inflation” by economists. If the underlying trends are fairly stable, so will the inflation rate be fairly stable, and that nice, simple forecast from figure 1 is probably going to be fairly close.

In actual fact, there are eight underlying values or trends that go into a normal inflation forecast.

5. Entwined Correlations & Vicious Circles

But it’s STILL not that simple. Lots of these underlying values are entwined and interconnected – and these secondary impacts often have delayed impacts as well as immediate ones – and not all of the eight factors are ever equal in their contributions; some are bigger, some are smaller. And these scaling factors can vary with the size of the underlying trend.

Let’s take a fairly realistic example: The price of crude oil gets put up by OPEC, as recently happened.

Gas stations raise their prices. Distribution costs of goods go up.

It costs more to farm. Food prices go up.

It costs more for your employees to get to work. They start pushing for a pay rise, and because their food prices have gone up, they want it to be a healthy increase.

Oil prices eventually feed into the cost of power, which your employees use at home to run their refrigerators and TVs and whatnot – like cooking food. Their demands get larger and louder.

Meanwhile, the cost of electricity also means that it costs more to keep your lights on and your machinery operating. In some cases, this costs a bit; in others, like aluminum manufacturing, it costs a huge amount. So you have to put your prices up, and the employees get at least some of the pay rise.

(Not all industries and businesses are going to be affected the same way or by the same amount, by the way).

But if you and everybody else puts their prices up, it now costs more to buy everything. So the employees want another pay rise.

In the meantime, the extra spending power that they now have gets spent, and as a result, there’s more money in the economy. That’s inflation.

It can all end in a vicious circle in which the necessary response to inflation creates still more inflation.

To put it in a nutshell, the more overheated the economy, the more unstable it is.
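The feedback loop described above can be sketched as a toy model. It is an illustration under loud assumptions rather than a real economic model: the initial shock, the share of each price rise that workers win back in wages (`wage_catch_up`), and the share of each wage rise that businesses pass into prices (`price_pass_through`) are all invented parameters, and percentages are simply added rather than compounded.

```python
def spiral(initial_shock: float, wage_catch_up: float,
           price_pass_through: float, rounds: int) -> list[float]:
    """Return the cumulative price rise (percent) after each round of the loop."""
    cumulative = []
    total = 0.0
    price_rise = initial_shock
    for _ in range(rounds):
        total += price_rise
        cumulative.append(total)
        # Workers recover part of the price rise as a pay rise...
        wage_rise = price_rise * wage_catch_up
        # ...and businesses pass part of that pay rise back into prices.
        price_rise = wage_rise * price_pass_through
    return cumulative


# Hypothetical 5% oil-driven price shock in both cases.
damped = spiral(5.0, wage_catch_up=0.8, price_pass_through=0.5, rounds=10)
runaway = spiral(5.0, wage_catch_up=1.0, price_pass_through=1.1, rounds=10)

print("Damped loop: ", [round(x, 2) for x in damped])
print("Runaway loop:", [round(x, 2) for x in runaway])
```

When each trip around the loop returns less than it started with, the rises shrink and the total levels off. When each trip returns more than it started with, every round is bigger than the last, which is the vicious circle.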

6. Hyperinflation, Recession, and Depression: Why Inflation Matters

While the causes of these phenomena are quite different, a lot of the symptoms of the economic disruption are the same.

When one of these positive feedback loops goes completely out of control, you can end up with hyperinflation. Anything more than about 20% inflation is considered hyperinflation.

Germany experienced Hyperinflation between the world wars; people had to literally cart wheelbarrows full of notes to the store to buy bread, which cost a ridiculous amount, and which was increasing in price daily.

Imagine buying a dozen eggs one day for $2.50. The next day, those eggs cost $3.00, the day after, $4, then $7, then $12, followed by $16 and $25. A week after this hyperinflation started, you can no longer buy eggs by the dozen; it’s one egg at a time, for $5 each – then $7, $10, $16, $22, $40, $70, and $120. With prices going up like this, in a few weeks you will be talking about thousands of dollars – for an egg.
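To see how fast the egg example is moving, it helps to put every day's price on the same per-dozen footing. The sketch below uses only the prices quoted above. The conversion to per-dozen prices and the variable names are added for illustration.

```python
# Prices quoted in the example above: seven days per dozen, then per egg.
per_dozen_days = [2.50, 3.00, 4, 7, 12, 16, 25]
per_egg_days = [5, 7, 10, 16, 22, 40, 70, 120]

# Convert everything to a price per dozen so the days are comparable.
per_dozen = per_dozen_days + [price * 12 for price in per_egg_days]

# Day-over-day percentage increase.
daily_rises = [
    (today - yesterday) / yesterday * 100
    for yesterday, today in zip(per_dozen, per_dozen[1:])
]

overall_multiple = per_dozen[-1] / per_dozen[0]
average_daily_growth = overall_multiple ** (1 / len(daily_rises)) - 1

for day, (price, rise) in enumerate(zip(per_dozen[1:], daily_rises), start=1):
    print(f"Day {day:>2}: ${price:>8.2f} per dozen (+{rise:.0f}%)")
print(f"Overall: {overall_multiple:.0f}x in {len(daily_rises)} days")
print(f"Average compound growth: {average_daily_growth:.0%} per day")
```

On these figures a dozen eggs ends up at 576 times its starting price after 14 daily increases.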

Pay rates can’t keep up. No-one can afford to buy eggs (or anything else) – so they steal them. Black markets start dealing in basic (stolen) produce, and people buy from them because they have no other choice but to starve. Riots and looting ensue. The shopkeeper doesn’t have the income to keep his shop open, so he closes it and joins the rioters – and so does anyone who used to work there. If this is restricted to one country, anyone who could has fled to somewhere else long ago – but hundreds of thousands more are following. Instant Dystopia.

Employment rates are a function of supply and demand, too. In a hyper-inflation situation, the demand vanishes, and there are hundreds of people for every vacancy.

Of course, everyone’s withdrawn what money they have saved long before, so the banks have collapsed, too.

Recessions

A contracting economy is not an improvement on this situation.

Recessions happen when there’s not enough wealth being generated by an economy to sustain spending. You can hide one by going into debt, borrowing money from somewhere else – for as long as they will lend it.

In a recession, expenses go up, profit margins go down, and businesses start to collapse. This drives a spike in the unemployment rate and pretty soon there are dozens of applicants for every job. When I first entered the job market, there was a small recession, with 10-12% unemployment. That’s only happened once or twice, since.

Depressions

If you get two quarter-years (usually abbreviated quarters) of economic contraction in a row, a Recession becomes a Depression.

You might think that there’s not much difference, things just don’t improve – but that assumes that a new equilibrium is reached in the economy, and things stabilize, albeit at a level that contains a lot of suffering.

Unfortunately, that’s not the case. Some businesses are ‘primary arteries’ within the ‘body’ of the economy, essential elements of the economy itself. In a Depression, these start to fail. The electric company. Major supermarket chains. Banks.

Domino effects follow, causing the closure of many businesses that might have survived, and massive downsizing of those that attempt to weather the storm.

The greater significance within the economy that these institutions represent makes a Depression slightly different in kind, and not just in degree.

A recession is the economy experiencing sudden, acute, alarming chest pains. A Depression is a full-blown heart attack. A prolonged depression is a stroke on top of the heart attack.

And depressions generally last a long time. It’s that much harder to rebuild the economy without those arterial components; it can, and has, taken years. It’s like a flood caused by the sudden collapse of a dam, a flood that wipes out the only available suppliers of steel and concrete (which you need in order to rebuild the dam). Before you can rebuild the dam, you need to replace those suppliers; before you can replace those suppliers, the flood waters need to recede; and even accomplishing all this still leaves a mess to repair.

7. Interest Rates & Inflation

The most effective tool to use against inflation in general is interest rates. You lower them to boost the economy and avoid (or mitigate) a recession; you raise them to suck money out of the economy when it starts to overheat.

If you rely on today’s inflation rate, you will always do too little, too late. You need to forecast what the inflation rate is going to be and set your interest rates accordingly.

Increasing interest rates has a whole slew of effects. It makes it more expensive to borrow, so people can’t spend as much on housing. The same effect makes it harder to keep a business operating (almost all businesses are built on debt, because the infrastructure needed for the business to operate is too expensive to buy outright).

So businesses need to put prices up, and that means that people won’t get as much to spend on luxuries. Rents go up, and so do power prices. Banks need to raise the interest rates they pay for savings in order to maintain liquidity.

Businesses also look to lower what costs they can – and that often means doing without as many staff. So employment goes down, and unemployment goes up. Pay rates may be cut – just when people are demanding that they go up.

And so on. But that brings me to a second wild-card when it comes to forecasting inflation rates – not all these effects migrate through the economy at the same speed, or with the same intensity. Some of them are immediate; others are a ticking time bomb.

Economists in Australia are forecasting a gradual but steepening fiscal cliff as people’s mortgages go from fixed (low) rates, set before the current financial problems arose, onto variable rates (set according to the current economic conditions), for example.

Just in case it works differently, I should explain that in Australia, ‘fixed rate loans’ on housing are for a limited period – sometimes one year, sometimes two, occasionally five – after which, the loans revert to variable rates. A lot of people refinance their mortgages when that happens, for obvious reasons – a great fixed rate often means a more draconian variable rate.

On top of that, some of the effects of an interest rate increase are persistent; they linger – just as hitting the interest rate brakes doesn’t slow the inflation trajectory all at once, so taking your foot off the brake has both immediate effects and slower, longer term ones.

Our electricity regulator has fixed prices for the remainder of the year at a rate commensurate with the high costs experienced earlier in the year (when it looked like those prices were going to go up by another 20%, it must be admitted) – which means that they will stay high even though the wholesale cost of generating and distributing the power has now started to fall.

There will be no relief on electricity and gas rates until January next year. The Reserve Bank (which sets interest rates here) could drop rates tomorrow, and they would effectively stay ‘locked in’ at the higher rate so far as power costs are concerned, at least until then.

8. Putting The Cart Before The Horse

So, not only is forecasting the inflation rate much more difficult than it might initially have seemed, it’s also far more important to get right – because that determines the interest rate settings, and those have a profound impact on the economy.

One of the ways economists counter the difficulty in predicting inflation rates is to put the cart before the horse. Instead of trying to measure the effects driving inflation, they measure the consequences of inflation on a given key element and use that to infer what the impact on inflation was over the period in question.

You can get an idea of how much more or less people have to spend by looking at house prices, and at retail spending – and at what people are spending money on. This uses the consequences of the inflation that has already been experienced to deduce the trend, the impact that the resulting patterns of expenditure will have on the future inflation rate.

It makes the guesses more informed, in other words.

Unemployment Rates

Another key example is the current unemployment rate, because that is driven by the inflation that was. If unemployment is too high, it’s a sign that the economy may be heading into a recession; if it’s too low, it means that demand for workers remains high, and that means that businesses have the money to spend on hiring more workers – and that’s a sign that the economy might be overheating.

In any given jobs market, there is a given percentage of the workforce that are going to be in the process of changing employers. The technical term, believe it or not, is “slosh”. It’s usually around 2-4%.

When there is a strong jobs market, and unemployment is low, employees feel more confident in their ability to get a job if their current one isn’t satisfactory for any reason – so slosh can actually go up, even though the overall unemployment rate is down. That confidence can flow through to other indicators, like retail spending, as well.

When there’s a lot of unemployment, businesses work extra hard to keep their best staff but treat everyone else as disposable parts – because there are multiple applicants for every vacancy, and they can pick and choose amongst them. People are less confident in their job security, and less inclined to risk changing jobs unless they have to.

So the unemployment rate is not only a key indicator in its own right, it also provides valuable context for the interpretation of other values. If only it weren’t always 4-6 weeks out of date by the time it gets measured…

9. Surfing the crest of a placid wave

Economists love it when things are nice and calm, when all the indicators are that inflation is stable at a couple of percent or so, unemployment is 50-75% churn (around 4-4.5%), and so on. When that happens, they can set Interest rates to a nice stable 4% that doesn’t put significant price pressure on wages or anything else, and they can continually surf the crest of a very placid wave.

That rarely happens. So many things feed into an inflation rate that there’s always something that’s too high or too low or too unstable and needs to be monitored. If it’s only one or two variables, that can usually be managed – though the reporting delays create considerable discomfort – but when the problem persists, nervousness grows.

10. The Blunt Weapon

Part of the problem is that Interest rates are very much a blunt weapon.

Let’s say that there’s low unemployment, and housing prices are setting new records, and power prices are high, and OPEC have just reduced global supplies to increase the price of Oil. Those are all indicators that inflation is high, and needs action.

But at the same time, there are high levels of mortgage stress, and there’s a mortgage cliff approaching, and families are under considerable price stress that is impacting retail sales and travel is down, and food prices are too high, creating considerable pressure for wages growth – those are all signs that interest rates are too high, and raising them further will only make things worse.

That’s the situation right now in Australia – about half the indicators say inflation is too high, and half say that interest rates are too high, and you can’t have it both ways.

It takes nerves of steel not to jump, one way or the other. What it probably means is that interest rates are too high, but have to stay that way until at least one more of those high-inflation indicators comes down. That will happen as people start falling off that mortgage cliff; the trick is to help retail and tourism businesses, and those individuals living at lower economic levels, survive until interest rates can be safely cut – without pumping more money into the economy, which will delay that fall.

At any given point in time, there are ten economic vulnerabilities that can massively disturb that placid wave. Governments usually have no control over these; they simply have to deal with the consequences. One is usually not enough to create an economic panic – but two or more can combine at any time.

11. Vulnerability 1: The Price Of Oil

The first vulnerability is the one to which everyone is most vulnerable, even the oil-producing nations.

That might not be immediately obvious to readers. Let’s say that another country restricts oil supplies (hello, OPEC); that has no bearing on how much it costs to produce your oil, so – in theory – the price of oil doesn’t go up in your country. Except that the oil producers are private companies who – like all such – are required by their boards and shareholders to make profits, and so they will restrict the amount of oil they sell domestically to increase what they can supply to countries where the price is higher. In effect, in order to be competitive, your country has to raise its domestic oil price to the international value, or close enough to it.

This is the vulnerability that completely blindsided everyone in the 1970s, bringing an end to the Pre-Digital Tech Age, as discussed in the previous part of this series. Simply put, no-one really recognized that it was a vulnerability until then; it was simply taken for granted.

The price of Oil impacts delivery and transport costs, so everything goes up in price. There are a huge number of products that are petroleum-based, especially plastics, so packaging for a lot of things goes up, too. It impacts the cost of consumer travel, so people have less money to spend on other things. It affects the cost of power, which takes longer to work its way through the system but adds an unwelcome second kick to all three effects.

And it’s completely out of your control; or more accurately, it’s subject to the whims of the least-stable oil-producing nation. Everyone – including the oft-maligned Saudis (at least in this space) – is hostage to the price of oil.

12. Vulnerability 2: The Price Of Power

Everything uses power, even oil refining. Manufacturing, packaging, transportation, distribution, communications, retail, domestic consumption – you name it.

Even today, when they should know better, economists frequently underestimate the impact of rising power prices, getting unpleasant surprises as a result.

Some industries and operations are more vulnerable than others; leading the pack is aluminum refining, which passes these costs downstream to every product that uses aluminum components, or uses machines that contain aluminum components (thankfully, there aren’t so many of those).

Right behind it is anything that has to be stored in a refrigerated state. And that’s more foodstuffs than anyone realizes.

Anything that impacts the fundamentals of food prices has a disproportionate impact on consumer spending, consumer confidence, and consumer demand for better wages – and so rapid is this response that it can often outpace the spread of its causal impact through an economy.

Third in line – nominally – is anything that gets transported by road (or by rail, in many cases). Guess what the two primary distribution methods are? This effect is all about street lighting.

Domestic cooling and heating are in fourth place except every summer and winter, when they vault into third place. That’s because these are fundamentally inefficient processes. But this includes the cost of heating or cooling workplaces and office spaces, so it affects virtually everything.

Even seemingly unrelated industrial processes still rely on electricity to make their conveyor belts and other machinery function.

The price of power is in an especially precarious state at the moment. Already vulnerable due to labor costs and long-standing neglect of distribution infrastructure – substations, poles, and wires – environmental reality is mandating a switch from coal- and oil-powered power generation to more ecologically-friendly sources. This shifts power generation from instantaneous demand responses to longer-term supplies – and that demands new storage technologies that are inherently expensive, adding further cost pressures to the electricity price. In time, those will moderate and the system will stabilize, but it’s currently precarious.

Even the cost of steel is impacted by the price of electricity and gas.

13. Vulnerability 3: The Price Of Materials

Which brings us to the bogeyman that arose as a consequence of the oil shock in the 1970s – the shift in thinking from an unlimited-resources perspective to a limited-resources perspective.

Ironically, this seems to have coincided with a rise in consumer consumption of disposable products – many are no longer designed to last as long as possible, but incorporate planned obsolescence, designed to increase profits for the manufacturers and sellers.

The truth is that many of the commodities deemed most greatly at risk have proven more resilient in supply than was dreamed of, back in the 1950s and 60s when the first warnings were being sounded on the subject (and falling on deaf ears). It’s materials that were largely unnoticed back then, like lithium, and rare-earth metals that are critical commodities these days – and part of that is due to supply-chain issues and politics.

Rare Earth Metals

In particular, China is the world’s #1 source of these commodities, upon which all domestic computers and mobile phones and other smart electronic devices depend – by a factor of 100 or more compared to the rest of the world combined.

Rubber

There was a time in which there was not enough rubber being produced to meet demand. This would have been the first great Materials Shock (predating the Oil Shock by decades), but an artificial supply was devised in the nick of time.

Helium

Let’s talk for a moment about another material resource that doesn’t get enough attention: Helium. Modern disk drives rely on Helium to achieve their astonishing capacities and life-spans. Without Helium, you’re back at the 1 terabyte bricks of yesteryear – at best. Laptops and smartphones are suitcase-sized, and heavy. Helium and Hydrogen are the only suitable gasses – the next lightest gasses are Oxygen and Nitrogen, which won’t work for obvious reasons; you may as well use air. Hydrogen doesn’t work because the atoms are so small that they escape too quickly. The only satisfactory compromise is Helium, and for that reason, it’s now designated a Strategic Material by the US Government.

Yet, we throw it away on party balloons. The only reason this isn’t stopped is because it is feared a public panic would result – and it’s a hard sell persuading people that the second most-common substance in the universe is in critically-short supply.

Silicon

One more, for the sake of completeness: Sand is everywhere, and it’s the source of Silicon, which is fundamental to all sorts of computer chips. Not something you would ever expect to be in short supply. But it is.

The reason is that modern computer chips require exceptionally-pure silicon, and that only comes from exceptionally-pure sands, and that – in turn – is in comparatively short supply. The only reason this is not as dire a situation as the others mentioned is that there are expectations that ways can be found of ‘purifying’ lower-quality sources – though these are likely to double or triple the prices of electronics virtually overnight, if (when?) they are ever needed.

Food Sources

An afterthought, but an important one. How much agricultural land in the US is actively farmed, do you think?

About 16.8% of the US is considered arable – that is, capable of producing crops. This number is rising as we get better at farming – it was reported to be 17.24% in 2020.

Of that percentage, 52% is actually used for agriculture. Some of it is reserved for forests, for example.

Because of subsidies, the most profitable crop is corn, so that uses up more than half of the land actually farmed. By area, that’s roughly the size of California.

The rest – roughly the size of Indiana – is where the rest of the American food supply comes from.

Because farms need to make profits, too, there’s an incentive for corn syrup to be put into almost everything. This contributes massively to the health problems in the US – about 42 pounds of the stuff per head, each and every year. For comparison, the average American also consumes about 110 pounds of red meat a year.

It’s too late to change – farming anything else would require a multi-generational investment in different farm machinery that would send farmers broke long before they got there.

Everyone needs to eat – so anything that affects food prices (like, say, an invasion of one of the world’s major grain exporters, Ukraine) is going to affect everyone and everything.

14. Vulnerability 4: The Price Of Labor

By and large, this is a negligible factor unless inflation is already running rampant, but labor costs are extremely sensitive to other primary vulnerabilities. This has enabled past state governments in the US to pass laws limiting – almost eliminating – employee rights. Nevertheless, as the current Hollywood strikes reveal, if everyone pulls together, massive disruptions can still occur – employees can only be pushed so far before they will revolt.

Unions, by giving employees some negotiating power, can actually decrease the level of industrial disruption – at the price of increasing its frequency.

What’s less harmful? The occasional catastrophic meltdown, or smaller but more frequent temporary disruptions? I don’t pretend to know the answer, but lean toward the latter.

Nothing happens without workers somewhere in the process. So everything is equally vulnerable to general increases in the cost of Labor. But, at the same time, some growth in this area is essential or you get those catastrophic meltdowns, no matter what interventions may attempt to prevent them.

The cost of labor is a key ingredient in everything from mining to power production. This ubiquity means that labor cost increases have delayed impacts on every other critical vulnerability, capable of sending them over the edge.

Minimum Wage

So the answer is to suppress the fundamental cost of labor as much as possible, right? No, no, no! Every time a general increase in minimum wages is mooted here in Australia, the conservative side of politics sounds alarm bells about businesses collapsing, and I have no doubt that it’s the same everywhere else in the world.

If the minimum wage is too low, people have to work multiple jobs to make ends meet – and exhaustion means they will be less productive at all of them. There was a time – back in the 50s and 60s – when a family could live comfortably off a single wage; that’s no longer the case.

Two-Income Families

Economics driving social changes which in turn drive economic changes – this is something I’ve tried to show in this series as a significant pillar of reality. In this case, consumer habits adapted to twin-incomes, and businesses evolved to satisfy those consumer habits, and before you know it, you need a two-person income to achieve a satisfactory standard of living.

Which leaves single-income households in a very difficult position. There are only two solutions without raising wages – government support, or second jobs. So the one person is now ‘consuming’ two or more job vacancies, at the expense of someone else who wants a job. The end result is an increase in the unemployment rate, and a more profound increase in the long-term unemployment rate.

This suits businesses, however, because it keeps their labor costs down, enabling more income to be characterized as profits.

Doubling the minimum wage

I remember clearly a doubling of the minimum wage in the US being mooted by some as proposed policy in the 2016 US elections, and on first glance, it would do a lot of good. But…

Does anyone have any doubts that doubling the minimum wage would flow through to increases in all other wage levels? I don’t. The effect might be attenuated for those already on good money, so it wouldn’t end up doubling the entire wage levels of a country, but it would be a significant increase. Conservatives are right about that.

Aside from this, what would happen if such a policy was enacted? Well, people would no longer need a second job, assuming that nothing went up in price. That means that they would achieve a better work-life balance – good for mental health and general happiness.

To replace them, employers would have to recruit more workers. So unemployment would go down, and things would stabilize at a new normal – ironically, one closer to the much-lauded 1950s idolized by the MAGA-crowd.

But, if labor costs go up, businesses will lose profits – and that’s unacceptable. There would be an immediate increase in prices, which would start to erode those wage gains. On top of that, there would be massive short-term economic disruption, with some businesses not raising prices enough and some going too far.

You can only raise prices so far before consumers stop buying. So there are limits to the wages growth that business can absorb, ones imposed by public opinion. So some businesses will get their adjustments wrong, and lose profitability, and go out of business. And some will make the calculations correctly, and conclude that they won’t stay profitable because they can’t raise prices enough, and close.

Doubling the minimum wage will have all sorts of short-term economic and social benefits – before plunging an economy into a Recession or a Depression. Not good.

Equally severe problems result when this picture is used to suppress growth in the minimum wage. They are just less overt and obvious. When this happens, the only solution is to raise pay scales – and if you suppress wages growth too much, for too long, you eventually reach the point where you need a doubling just to get to where your wage-rates should have been.

Theoretical Solutions?

So, short-term, what you need is a graduated rise in minimum wage, rather than trying to do it all in one hit, with a defined end-point.

Once you get there, indexing the minimum wage to Inflation seems the obvious long-term solution.

It’s not that simple, because wage increases are, in and of themselves, inflationary. This is a positive-feedback loop – another one – that will regularly send the economy surging out of control.
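A minimal Python sketch of that feedback loop follows. Everything in it is an assumption made for illustration: the function name, the 2% baseline inflation, and especially `pass_through`, a hypothetical fraction of each indexed wage rise that comes back as higher prices the following year.

```python
def indexed_wage_spiral(years, base_inflation=0.02, pass_through=0.5, shock=0.0):
    """Yearly inflation rates when wages are indexed to the previous year's inflation.

    base_inflation: inflation from every other cause (assumed constant).
    pass_through:   hypothetical share of a wage rise that reappears as price rises.
    shock:          one-off extra inflation in the first year (say, an energy spike).
    """
    rates = [base_inflation + shock]
    for _ in range(1, years):
        wage_rise = rates[-1]  # indexation: wages follow last year's prices
        rates.append(base_inflation + pass_through * wage_rise)
    return rates


# With half of each wage rise passed on, a 2% baseline settles near 4%.
damped = indexed_wage_spiral(50, pass_through=0.5)
# With every cent passed on, inflation climbs by the baseline every single year.
runaway = indexed_wage_spiral(10, pass_through=1.0)
print([round(r, 4) for r in damped[:5]], round(damped[-1], 4))
print([round(r, 4) for r in runaway])
```

In this toy model the loop amplifies whatever inflation was already there, and the closer `pass_through` gets to 1, the less it takes to tip the system from "elevated but stable" into "out of control".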

Businesses thrive on stability, and this is anything but stable. As with many other things in life and politics, there are no easy answers, and anyone who tells you there are is attempting to pull the wool over your eyes.

15. Vulnerability 5: Economic Disparity

While I’m in the vicinity, I should talk about this momentarily. Income Disparity, also described as Income Inequality, is the uneven distribution of total income throughout a population.

In modern times, it means too much money going to executives and shareholders and not enough being fed into the pay packets of the people actually generating the income.

This is an obvious result of pushing labor costs down too much. Those who stand to gain – the wealthy and well-paid – are obviously in favor of it, for purely selfish reasons. This leads them to donate heavily to political groups who promise to cut their taxes or keep wages “under control”.

Some are smart enough to recognize these as short-term gains leading to long-term pain, but many are not. This problem produces a hidden instability within the economy but competitive pressures – pay scales at the top end necessary to recruit good employees – restrict action to deal with the problem.

Solutions need to affect everyone all at the same time, whether they like it or not, and that makes them the responsibility of the highest levels of government.

In theory, higher tax rates help to balance the pressures – but greater access to tax avoidance mechanisms minimizes this effect.

The only solution that I can see is some sort of imposed regulatory mechanism which fixes the wages of a supervisor at (say) 2.5 times the average scale of pay of those supervised. But that contains inherent inefficiencies, encouraging poor business structures, and I doubt that any political party espousing it could ever get elected, so it’s neither effective enough nor ever going to be implemented. No easy answers – again.

16. Vulnerability 6: The Price Of Land

Land is sometimes said to be the safest investment, because – in the long run – land values always go up, not down. Oh, dear, sounds inflationary, doesn’t it?

This is one of those statements that’s both usually true and misleading at the same time. It depends on how volatile the housing market is in a given region, and how long “in the long run” is, and all sorts of other factors.

If you buy property in a small town, and the rail line to that town closes, you’d better believe that the bottom will fall out of the property market – you may have to wait a century or two for land prices to get back to where they were.

Suppose you buy property that’s in demand because workers in a nearby factory need residences. If that factory contaminates the water, your property will become worthless overnight. If you’re lucky, the government will forcibly buy you out – for one-tenth of what you paid for the land, which – effectively – will never go back to being worth what you paid for it.

Here in Australia, the big stink is about insurers who will no longer cover communities for flood damage because once-in-a-century floods have struck twice in the last two years. This devalues the property concerned so significantly that the government is contemplating relocating entire towns to higher ground, and confuses probabilities with predictions – but that’s a bitter debate for another day and maybe a different venue.

Part of the problem is that there is no formula for working out what a piece of land is worth; there hasn’t even been a proper analysis to identify the factors and their relative strengths. Instead, the ‘formula’ is to look at what properties in the area went for recently, how this property compares with those, what’s changed economically in the area since then, add a gut-instinct variation to that baseline for these factors, plus 5% and inflation, then list it and wait to see if anyone bites.

The inherent volatility of Auctions doesn’t help matters any, either.

I remember when the first property in Sydney sold for more than a million dollars – a mansion with estate grounds in the most affluent part of town, overlooking the world-famous Sydney Harbour.

These days, the average 2-BR house sells for over a million, and despite an initial dip when those who saw the interest rate writing on the wall got out while the getting was (relatively) good, prices are still surging.

The number of sales per month is expected to triple or quadruple over the next 4 months, as more people fall off the mortgage cliff already described and either sell up (accepting a loss) or get foreclosed by the banks. Around Christmas, it will be a buyers’ market – if you’ve got the money to invest.

The value of property feeds into inflation in a number of ways – first, mortgages eat into disposable income, in exactly the same way as high inflation does; and second, rents always go up when property does, and that means that business premises cost more, an expense that gets passed on as higher prices for goods.

This can be viewed as the tail wagging the dog – by mimicking the consequences of a rise in inflation, the price of land creates a rise in inflation. Cause-and-effect are all messed up in economics – at least, when it comes to land prices.

A sudden spike in the value of land tends to be a relatively local thing, and so you get pro-inflation hot-spots breaking out here and there all the time. That’s happening right now to land around the still-under-construction second International Airport for Sydney – except where the land lies under the flight path of jet aircraft, where values have crashed. Those affected get only a modicum of sympathy from me – it’s only been on-again-off-again for three decades now; they knew the risks when they bought there.

Most of the time, those hot-spots get ‘leveled out’ to a large extent by the far greater lands where the prices are relatively stable, or even declining. Take a look at photos of the urban decay in Detroit sometime, and think about the property values. There are locations where the cost of demolishing decrepit structures is more than the land is worth as an empty lot.

But these hot-spots arise as a confluence of random and non-random events – and, just as you can roll four sixes on 4d6 three times in a row if you roll for long enough, sooner or later those random hot-spots can combine into a national housing value boom. Once they start, there’s nothing that can be done about these, because house and land prices are as much about perception as they are any concrete, justifiable, valuations. All that can be done is to ride the whirlwind and wait for the inevitable crash.
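For the curious, the odds in that dice analogy can be worked out exactly. This is a small Python sketch; the function names are mine, and treating each block of three rolls as one independent attempt is a simplifying assumption.

```python
from fractions import Fraction


def chance_of_streak(dice=4, sides=6, streak=3):
    """Exact chance that every die shows its top face, `streak` rolls running."""
    all_max_once = Fraction(1, sides) ** dice  # four sixes on 4d6: 1 in 1,296
    return all_max_once ** streak


def chance_within(attempts, p):
    """Chance of at least one success in `attempts` independent tries at odds p."""
    return 1 - (1 - float(p)) ** attempts


p = chance_of_streak()
print(p)  # 1/2176782336
for attempts in (1_000, 1_000_000, 1_000_000_000):
    print(attempts, chance_within(attempts, p))
```

Tiny odds per attempt, but the chance of seeing the streak at least once keeps creeping upward as the number of attempts grows, which is the point of the analogy.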

Supply and demand also factor into the land-value equation; if something happens to limit supply, or greatly increase demand, inflation, and inflation of land values, are the inevitable results.

17. Vulnerability 7: The Price Of Goods

I’ve already touched on this, under the heading of the cost of materials. Consider these formulas:

- Wholesale Price of Goods x Units produced = (cost of materials + manufacturing + labor + packaging + distribution + marketing + overheads + loan repayments + savings) x (1 + profit margin/100) x (1 + inflation/100).

- Retail price of goods x units purchased = (cost of sales + labor + warehousing + sub-distribution + marketing + overheads + loan repayments + savings) x (1 + profit margin / 100) x (1 + inflation/100).
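As a rough illustration, the two formulas can be turned into a few lines of Python. Every dollar figure, both profit margins, and the 3% inflation figure below are made-up assumptions, as are the function and variable names; only the shape of the calculation comes from the formulas above.

```python
def unit_price(costs, units, profit_margin_pct, inflation_pct):
    """Price per unit: total costs, marked up for profit and inflation, per unit."""
    total_costs = sum(costs.values())
    revenue = total_costs * (1 + profit_margin_pct / 100) * (1 + inflation_pct / 100)
    return revenue / units


# Hypothetical manufacturer producing 1,000 units (all figures invented).
wholesale_costs = {
    "materials": 4000, "manufacturing": 2000, "labor": 2500, "packaging": 300,
    "distribution": 400, "marketing": 300, "overheads": 300,
    "loan_repayments": 100, "savings": 100,
}
wholesale = unit_price(wholesale_costs, units=1000, profit_margin_pct=20, inflation_pct=3)

# Hypothetical retailer buying all 1,000 units; "cost of sales" is what it paid wholesale.
retail_costs = {
    "cost_of_sales": wholesale * 1000, "labor": 3000, "warehousing": 500,
    "sub_distribution": 300, "marketing": 400, "overheads": 500,
    "loan_repayments": 200, "savings": 100,
}
retail = unit_price(retail_costs, units=1000, profit_margin_pct=30, inflation_pct=3)

print(f"wholesale: {wholesale:.2f} per unit, retail: {retail:.2f} per unit")
```

Because the manufacturer's price is itself an input to the retailer's formula, the profit margin and inflation multipliers get applied twice to everything that happens upstream.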

There are so many inputs into the final cost that the customer pays for his goods that anything and everything makes them go up. If any factor ever causes them to go down, that can be taken as additional profits or hidden as a ‘sales price’ because it will only be temporary.

And, again, that all sounds very inflationary, doesn’t it?

If modest increases happen in several – or all – of these factors, even though they are not of concern individually, they can aggregate and then amplify into out-of-the-blue spikes in retail prices. I’m talking rises of 30-50% in one step.

Most of the time, that’s just a blip, and while market share may be impacted, for the most part, things go on as usual.

Every now and then, however, some critical commodity will be impacted – be it ball bearings or electrical switches – and there’s an unexpected snowballing in the price of goods that are deemed essential by the consumer.

Most of the time, these impacts are just noise within the system, with a small overall upward trend. Like rolling 6d6-4d6 repeatedly.

Image generated (very quickly) using Anydice. The peak of the curve, as you would expect, is at 7.

Although most of the results will be scattered between, say, 2 and 12, and the occasional oddity might be between -1 and 15 but not in that core region, every now and then, you’ll hit the jackpot.
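If you don't have Anydice handy, the same curve can be computed exactly in a few lines of Python. This is just a sketch; the function names are mine.

```python
from collections import Counter
from fractions import Fraction


def dice_sum_ways(n, sides=6):
    """Count the ways of rolling each total on n dice."""
    ways = Counter({0: 1})
    for _ in range(n):
        nxt = Counter()
        for total, count in ways.items():
            for face in range(1, sides + 1):
                nxt[total + face] += count
        ways = nxt
    return ways


def difference_distribution(plus=6, minus=4, sides=6):
    """Exact probability of each result of (plus)d(sides) - (minus)d(sides)."""
    diff = Counter()
    for a, ways_a in dice_sum_ways(plus, sides).items():
        for b, ways_b in dice_sum_ways(minus, sides).items():
            diff[a - b] += ways_a * ways_b
    outcomes = sides ** (plus + minus)
    return {result: Fraction(count, outcomes) for result, count in sorted(diff.items())}


dist = difference_distribution()
peak = max(dist, key=dist.get)
core = sum(p for result, p in dist.items() if 2 <= result <= 12)
print("range:", min(dist), "to", max(dist), "| peak at", peak)
print("chance of landing between 2 and 12:", float(core))
print("chance of 20 or more:", float(sum(p for r, p in dist.items() if r >= 20)))
```

The extremes (all the way down to -18 and up to 32) are possible but very rare, which is exactly the "every now and then" jackpot.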

Inflation can come out of nowhere, when the stars align.

18. Vulnerability 8: The Price Of Services

The same thing can be said for the price of services. But there’s a bias that is often not taken into account.

In Australia, there was a time when a University Education was free; it was felt that the contributions an educated workforce would make to the economy would more than repay the investment in the future of our society.

Successive governments first undermined this system (because it was expensive) and then made a concerted effort to underwrite it by introducing university fees, something similar to US-style college fees, except that the up-front costs were paid by the government who then forced repayment of the resulting debt by the student. There are some protections for the students – you only have to start repayments once your income hits a certain level, for example – but the fees have simply grown and grown since.

These costs obviously flow directly into the fees charged by these graduates for their services. The whole thing is directly inflationary, and at the same time, a drag on economic growth – a contradiction in terms that only resolves when you realize that we’re talking about different time scales.

University administrators love the scheme; by putting a flat fee on the price of a degree, they can charge international students up-front, easing their running costs. A Bachelor’s Degree costs AU$15K-33K per year, usually for 3 or 4 years. A Masters is two more years at AU$14K-37K a year, a Doctorate is a couple more at the same rate. Medical and Veterinary degrees are up to twice this amount. An MBA can cost as much as AU$121K per year.

As of 23 June this year – about two weeks ago – it was estimated that there was a total of AU$74.4 billion owed by about 3 million Australians, an average of AU$24,700 each. Any outstanding debt is indexed every year against inflation – debts went up 6.6% this past June 1. This encourages students to repay their debt before the threshold is reached.

The problem here is that incomes have not risen by anywhere close to the inflation rate – so ex-students are suddenly falling deeper and deeper into debt. In effect, 7-14 years of student productivity is lost to the economy repaying this debt.
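To put rough numbers on that squeeze, here is a minimal Python sketch. The average debt and the 6.6% indexation come from the figures above; the AU$60,000 starting income, the 3% wage growth, and the function name are assumptions chosen purely for illustration, and no repayments are modeled by default.

```python
def project_debt(debt, income, years, indexation_pct, wage_growth_pct,
                 repayment_rate_pct=0.0):
    """Year-by-year (year, debt, debt-to-income ratio) under indexation."""
    rows = []
    for year in range(1, years + 1):
        debt *= 1 + indexation_pct / 100       # debt grows with inflation
        income *= 1 + wage_growth_pct / 100    # income grows with wages
        debt = max(0.0, debt - income * repayment_rate_pct / 100)
        rows.append((year, round(debt, 2), round(debt / income, 4)))
    return rows


# Average debt indexed at 6.6% a year, against hypothetical 3% wage growth.
for year, debt, ratio in project_debt(24_700, 60_000, 5, 6.6, 3.0):
    print(year, debt, ratio)
```

Whenever indexation outpaces wage growth, the debt grows relative to income for anyone who isn't yet making repayments.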

The more an ex-student can charge for their services, the more quickly they can discharge their debts. This is keeping graduates from home ownership, for example, and increasing the stress they experience, increasing drop-out rates in various professions, while at the same time pushing the services in question further out of reach of lower-income clients. The full social and economic impact has yet to be discovered, but the indications are not good.

The college system in the US sucks this money out of the parents’ finances, so the economic impact starts immediately instead of being deferred.

In effect, this is acting as an amplifier to inflation rates in a limited range of areas. It means that any sudden increase (however temporary) in inflation rate gets an additional boost. What may have been a manageable 4-5% inflation has the effects of a 6-7% rise.

It doesn’t matter what the cause is – if the cost of plumbers and electricians and doctors and teachers and the like goes up, it drives inflation beyond the increase itself.

19. Vulnerability 9: Corporate Greed

A favorite bogeyman of the left, and with some justification. It doesn’t matter if 99 out of 100 corporate citizens behave responsibly; that 1-in-100 can and usually does have a disproportionate impact.

Greed basically siphons money out of the economy and parks it where the greedy individual can profit from it but no-one else. Effectively, this is the same as not printing enough currency, which in turn raises the value of the currency – which, at first, might seem to be a good thing for reducing inflation.

The problem is that every dollar (or peso or whatever) that gets spent anywhere else in the economy is therefore essentially spending more than the face value – and that is inflationary.

On top of that, even the hint of an allegation of corporate greed is guaranteed to get workers agitating for a pay rise, for their fair share. Since the mid-90s, governments have linked productivity gains (which boost the economy) to wage rises; the problem is that since 1994-5, every 1% productivity gain has only earned a 0.8% pay rise. That other 0.2% has gone to business owners and shareholders.
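To get a feel for how a 0.8-for-1 split adds up over time, here is a minimal Python sketch. The 1% annual productivity growth and the 28-year span are assumptions chosen for illustration, not figures from any dataset, and the function name is mine.

```python
def cumulative_gap(years, productivity_growth_pct=1.0, wage_share=0.8):
    """Compound productivity and wages, with wages getting `wage_share` of each gain."""
    productivity = wages = 1.0
    for _ in range(years):
        productivity *= 1 + productivity_growth_pct / 100
        wages *= 1 + wage_share * productivity_growth_pct / 100
    return productivity, wages


productivity, wages = cumulative_gap(28)
print(f"productivity index: {productivity:.3f}, wage index: {wages:.3f}")
print(f"share of the gain that never reached wages: "
      f"{(productivity - wages) / (productivity - 1):.1%}")
```

Each year's shortfall is small, but the two indexes drift further apart every year the pattern continues.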

I have seen arguments that this is entirely justified – “After all, it’s their business and they deserve to get more if the business becomes more profitable.” The flaw in this line of argument is that they would get more anyway.

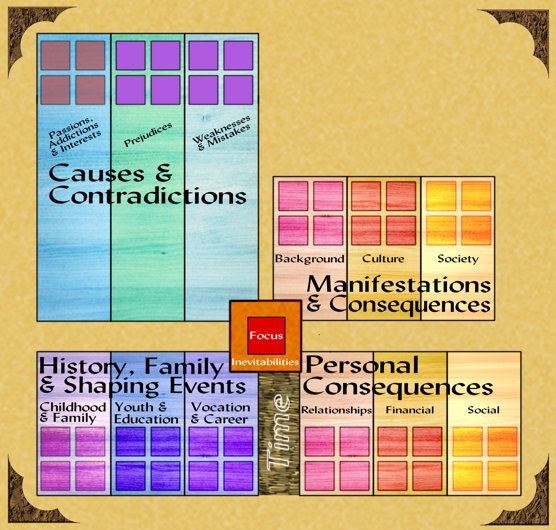

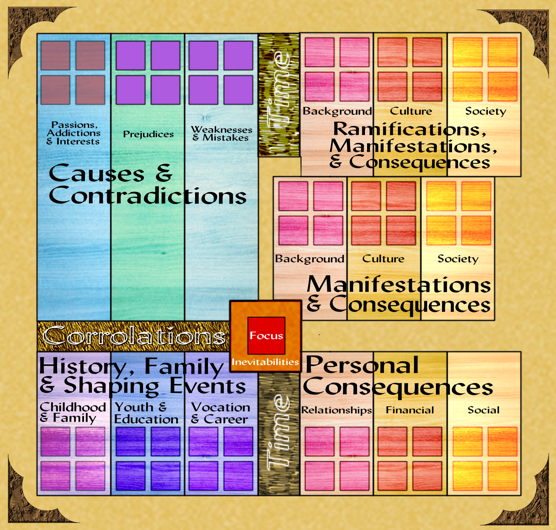

I’ll be the first to admit that the diagram above is exaggerated – it’s intended to illustrate the principle, not the reality.

The White bars are the total income of the business, adjusted for inflation. Unrealistically, it’s the same throughout. This is distributed into three areas – Profits, Wages, and Costs, colored yellow, Green, and Red, respectively. In figure 1, these are roughly equal (again, improbably).

Row two illustrates a 30% productivity gain, over several years.

Row three shows that if the proceeds of the productivity gain are shared equally between owners (profits) and workers (wages), everyone is equally better off. Of course, profits may be divided amongst one or many, but it’s unlikely to be as many as the wages are divided amongst, so an individual will get less in dollar terms – but everyone gets 15%. At least in theory.

Row four, completely unrealistically, shows all 30% of the productivity gain being diverted into profits; the wages segment is the same size as it was in 1 and 2.

The reality is that we are 1/5th of the way between 3 and 4. As I said, this is exaggerated for illustrative purposes.

It’s critical to realize that industrial relations are as much to do with perceptions and mindset as they are economic realities. What that one-in-100 business is doing affects that mindset, and that spreads far beyond that one business, creating an us-vs-them mentality.

If this sort of thing was the result of one decision, it would be bad, and the results would be ugly. Because it has been the result of many wages decisions and agreements over many years, it has largely sailed under the radar, and there was not a lot of outrage – until the current ‘cost of living crisis’ made people search for every last cent they could find.

Those in other countries may have escaped that crisis – certainly, despite a lot of noise, the US economy seems to be loping along quite comfortably at the moment – but there remains plenty of evidence of the same problems there. Explosions in this area are only ever deferred, never avoided – and sometimes, are all the worse when they do finally explode because of the additional buildup of pressure.

20. Vulnerability 10: The Banking Sector

It’s an old story, best elucidated in the movie Sneakers.

“Posit: Someone starts a rumor that a bank is financially shaky. Consequence: people withdraw their money, and pretty soon, it IS financially shaky.” — from memory, so don’t be surprised if this is slightly paraphrased.

Banks are businesses like any other, and like other businesses, they can fail. The difference is that when banks fail, they take other businesses with them. Too many bank failures, or banks of a certain scale failing, can rot an economy from the inside.

And all that is before the impact on public confidence is taken into account – even though that can be the most damaging of all.

It’s fair to say that the biggest crisis in the US economy of late has been the failure of three banks in close succession. In each case, there was ‘good’ reason for the failure*, and the system that has been built up since the GFC did what it was supposed to do, protecting the consumers who banked with those institutions.

* – well, in two cases there were good reasons. The third case was different, and more a manifestation of perception over reality, magnifying a short-term problem into a terminal ailment. Which goes back to the opening comments of this section, I guess.

The banks are the heart of the economy, pumping money around. Once, there was some cushion – physical cheques in the mail – but these days it’s all done electronically, for the greater convenience of customers. That takes away a safety net, but so far, that defensive mechanism hasn’t been needed.

Nevertheless, any failure – perceived or real – of the banking infrastructure can place an economy on life-support, no matter how robust it might otherwise be.

21. The eleventh vulnerability

Let’s talk about the stock market for a minute, even though most of my knowledge of it comes from Trading Places.

In essence, it’s all about confidence. When people aren’t sure how much a stock will be worth tomorrow, they sell it – and usually put their money in something they think more secure. The more at-risk the stock, the greater the potential gains, but most such bets don’t pay off; the safer and more stodgy a stock, the less likely it is to pay a big return (but the more likely it is to pay that smaller return reliably).

When things look like they are going well, in economic terms, the stock market becomes bullish, and the traders who buy and sell stocks become more inclined to take a risk; when things look precarious, the opposite happens.

‘Gold Fever’ is a real phenomenon, and it has its modern-day equivalent on the stock market floor. If convinced that a company will deliver in the long run, investors are quite capable of throwing good money after bad – regardless of the reality. Equally, doubt and a lack of confidence can be contagious, driving down the value of perfectly acceptable companies.

Postulate a company, XYZ Tech (I hope it’s not a real one!). For the last three years, it’s traded steadily at $1 a share. But there are rumors of a big contract being negotiated, so today, it’s trading at $2.50 a share, and rising. There are two schools of thought: buy now, because it’s still rising; or don’t buy, because most of the good profit has already gone out of the transaction, instead focusing on the businesses that will go higher if the rumors are true – and some that will rise if they aren’t, depending on how much stock you put into the rumors.

Tomorrow, the news breaks that the negotiations have failed, and the stock plunges to 60 cents a share. Those who bought at $2.50 lose a lot of money, that being the risk of the gamble. There are, once again, two schools of thought: one is that you should under no circumstances buy, because there’s no evidence that the slide is over, or that the company can even survive this roller-coaster. The other looks at those years of stable trading, and decides that the likelihood is that the stock will eventually stabilize at something close to that original $1 a share – buying now will not only shore up the stock price, making that a more likely outcome, it will also earn anyone investing in those shares a gain of about two-thirds (60 cents back up to $1).
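The percentages in the XYZ Tech example are easy to check. This is a minimal Python sketch using only the share prices quoted above; the function name is mine.

```python
def pct_return(buy_price, sell_price):
    """Percentage gain (positive) or loss (negative) on a share."""
    return (sell_price - buy_price) / buy_price * 100


print(f"bought at $1.00, now $2.50: {pct_return(1.00, 2.50):+.1f}%")
print(f"bought at $2.50, now $0.60: {pct_return(2.50, 0.60):+.1f}%")
print(f"bought at $0.60, back to $1.00: {pct_return(0.60, 1.00):+.1f}%")
```

The late buyer at $2.50 loses about three-quarters of the stake, while the bargain-hunter at 60 cents gains about two-thirds if the price does recover to $1.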

Context is obviously all-important in choosing between these different perspectives. What else is going on that could impact share values, and shares of this sector and this business in particular?

The stock market thus provides a direct connection between confidence in the economy and decision-makers, albeit one that is hopelessly drowning in noise. Nevertheless, the long-term trend of stock markets is normally upwards (beware of the rare exceptions, however!). So it’s that whole 6d6-4d6 thing all over again.

There’s not much of a direct link between a stock market and economic health; it reacts, it doesn’t drive. But what it reacts to DOES impact inflation, and interest rates – by affecting profitability – DO impact on the share market, driving some shares higher and some lower.

Viewed from another perspective, a stock market always reacts to events and to the perception of events, with foresight if at all possible. And that makes it a valuable guide to what an interest rate decision needs to be, in order to manipulate both the economic factors that are directly affected and the public confidence and perceptions that stem from them.

To what extent is the Great Depression directly attributable to the Stock Market Crash? And to what extent were both driven by other factors that they had in common, or domino-consequences of those factors? You can spend years unpicking the minutiae and still not be sure, because the ultimate connecting tissue is the attitude and psychology of the time.

Which raises the question, “What were you Thinking?” to a whole new level.

22. Impossible Predictions?

By now, it should be easy to see why accurate predictions of inflation, and hence of interest rate needs, are all but impossible. The best that can be hoped for is to get an educated best-guess that’s not too far wide of the mark – and to shade your guesses based on recent events and underlying trends. “If in doubt, do what you did last month” is trite – but not as far wide of the mark as people might think.

The RPG Perspective

There are so many inputs into inflation and interest rate decisions that a GM can plausibly make these (and other economic indicators) do anything they want, within reason (I’ll come back to that caveat in a moment).

Understanding what the vulnerabilities of an economy are, and what the underlying contributing factors are, means that you can sound credible when asserting those decisions “made in-game by NPCs with the authority to do so”. This understanding also prepares the GM for handling any PC engagement with the economy – whether that’s building a castle or persuading the government to fund your Yiddish Space Laser Defenses.

But here’s the rub: these announcements don’t come at random intervals. There’s always a key reporting date, and a key meeting by the central bank or whoever it is that makes the decisions. Plausibility demands that the relevant trends, and their consequences, manifest a month or months earlier, even if you don’t draw attention to them. And it’s important to note any remedial action that will logically take place in consequence, too, and any direct consequences of such actions.

The in-game economy is completely in your hands. What you do with it is up to you – but before you start playing with the controls, it might be worth taking a moment to skim the instruction manual.

That’s what this post is: a generic user manual for modern economic conditions. Use it in good wealth!

In Part 1:

- Introduction

- General Concepts and A Model Economy

- The Economics of an Absolute Monarchy (The Early Medieval)

In Part 2:

- The Economics of Limited Monarchies (The Later Medieval & Renaissance)

- In-Game Economics: Fantasy Games

In Part 3:

- The Renaissance, revisited

- Pre-Industrial Economics I: The Age of Exploration

- Pre-Industrial Economics II: The Age of Sail

In Part 4:

- Industrial Economies I: The Age Of Steam

- In-game Economics: Gaslight-era

In Part 5, Chapter 1:

- Industrial Economics II: The Age Of Electrification & Motoring

In Part 5, Chapter 2:

- Industrial Economics III: War & Depression

- In-Game Economics: Pulp

- In-Game Economics: Sci-fi

- In-Game Economics: Steampunk

In Part 6, Chapter 1:

- The Pre-Digital Tech Age

- World War 2

- Post-war & Cold War

In Part 6, Chapter 2:

- Government For The People

- Aviation

In Part 6, Chapter 3:

- The Space Race

- Tech Briefing: Miniaturization

- Behemoths Of Blind Logic (early computers)

- The Promise Of Atomics

- A Default Economy

In this Part:

- Economic Realities (Inflation & Interest Rates)

Planned for parts 8+:

- Digital Economics

- Post-Pandemic Economics

- In-Game Economics: Modern

- Future Economics I: Dystopian

- In-Game Economics: Dystopian Futures

- Future Economics II: Utopian

- In-Game Economics: Utopian Futures

- In-Game Economics: Space Opera