A process for designing & constructing big or complex things, from spells to magic items, castles to space stations, industrial processes to political campaigns, new chemistries to better TVs and AC systems, using something every RPG already has.

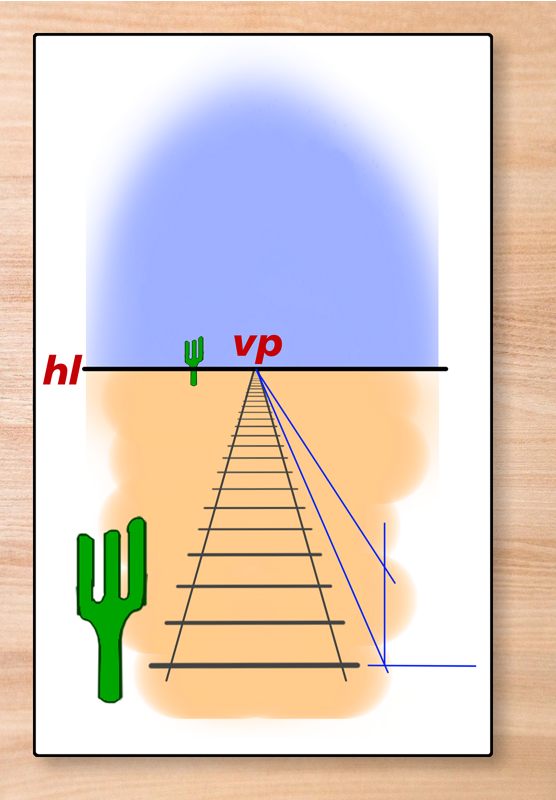

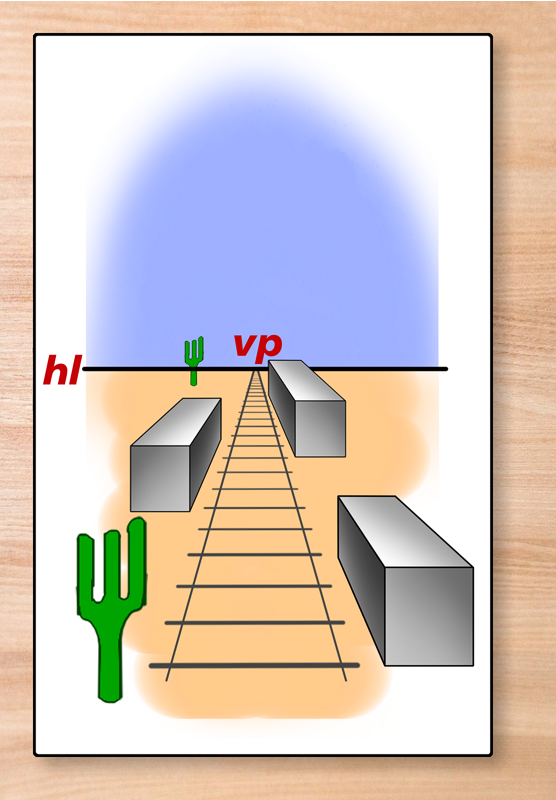

It’s not often that I have a clear idea of what I want in a featured image before I go looking, but this time I did – and couldn’t find it, and didn’t have time to put it together for myself. So I threw this together, instead, adding some color to the original image by Gordon Johnson from Pixabay.

The real world caught up with me somewhat in the course of writing this article – I had hoped to publish it Monday (everything but the examples was finished Sunday night, so that was a realistic ambition) but things just didn’t work out that way. I also had no idea that it was going to turn into this 17K+ behemoth; the rules are described in their source location in just 1200 words. This was supposed to be a quick article to let me turn my attention to other things; instead, it has consumed my week.

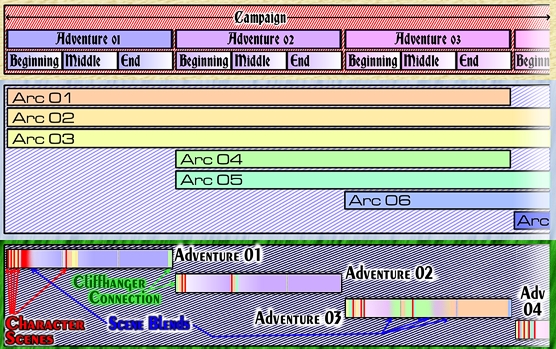

Background: The evolution of a plotline

The Zenith-3 campaign is transitioning, in the course of the current adventure, from a phase in which a lot of roleplay is handwaved to full operation, following its long shutdown. There have been whole weeks of game time in which lots of little things happened but no major decisions that impacted the team’s overall mission, so it seemed like a functional approach.

For the one or two major decisions that did have to be made, I stepped outside the deliver-events-as-narrative approach and let the players roleplay, having covered all the options and their consequences in the adventure text.

Over The Christmas Break

When you have multiple events per day over multiple weeks, you need ideas – a lot of them. During the Christmas break, I jotted down 14 of them to slot in as they matched up to the narrative. The intention was for full gameplay to restart with Wednesday, Day 53, when the action would segue into… more of the same, but building in intensity as the main mission of the adventure sequence proceeded.

One of those ideas was an all-PCs disaster story. It was summarized in eight lines (four times as many as most of the ideas), and scheduled for Tuesday, Day 52.

Of course, to describe the events within one of these seed ideas, they had to be expanded, in the manner I’ve described in several past posts. How did the story start, Where, How did the participating PCs (usually not all of the team) get involved, What setback(s) were encountered, How were they overcome, and How did the story resolve?

At one sentence or a short paragraph each, that takes a 1-2 line encounter idea and turns it into a 6-12 sentence / paragraph short-short-story. Where it was natural to do so, I indulged in a little more world-building, exactly as I would have done if these had been played out fully. Special attention was paid to the characterization given each PC by the player and each established NPC. All this added a few more – sometimes many more – paragraphs to the story, but it was all kept as concise as possible. I wanted this to serve as a reminder of the tone and style of the campaign, making it easier for everyone to step back into character when the time came.

Because of its length as an idea, the ‘wildfire’ plot idea – and I’m being circumspect because it hasn’t started in play yet – was always likely to be 3-4 times the length of these smaller encounters. Part of the story involved a new creation, a species the PCs hadn’t encountered before. I had vague ideas regarding the morphology, abilities, and persona of this encounter, nothing more.

January 6th

So that was the state of development when I got back to work on the campaign on January 6th. Step one, initial ideas, done. Step four, making the situation and its differences from normality seem both more visceral and more credible, was accomplished by having the team rescue some trapped firefighters.

Step three, better delineation of the threat, followed. That led straight into Step five, a complete description of the new life-form. As it developed, it became clear that if I delivered it all as a single text block, it would be overwhelming. Too big an infodump. So the overall shape of the story had to change; half of the PCs would not participate in the rescue, while the other half played detective and churned out parts of the infodump.

They needed somewhere to be while that was happening, so that led back into a complete revision of Step two, the ‘how the PCs get involved’ sequence. And then another one. And critical decisions started mounting up. Some could be resolved in narrative, because there was only one logical solution to the problems at hand. Verisimilitude demanded the introduction of additional NPCs, and interactions with those NPCs.

It became clear, after about 9 days of development, that this needed to be more of a full adventure with roleplay of at least the critical moments, and that led to further changes, both in the work done already, and in the work still being planned.

The important bit – the end result

As it currently stands, the eight-line idea has become 98,650 words plus 13,475 words in notes still to be integrated into the text. This is spread amongst 282 scenes, most of which will never see play – there are 28 different pathways through the adventure, based on PC decisions, skill rolls, etc. Some of those decisions are absolutely critical and could have impacts long outside this single adventure, and the adventure itself is going to cast a longer shadow, too.

The adventure will push the PCs into areas they haven’t had to explore for a while, if at all. And it’s had to push the rules system into areas it has never covered before, too. And one of those is the subject of today’s article.

New Rules for the construction of complexity

In one (or more) turns of events, a PC has to create a new chemical. I’m not going to get into purposes or reasons. So I wrote some rules for that, and carefully built in limitations and restrictions to keep this from overpowering the campaign while maintaining verisimilitude.

And, at another point in the narrative, another character has to create a complicated device with a simple function. And I found that the same rules worked for that, too.

And then I realized that there was a way of simplifying that task to make it more manageable, by having another PC use a power in a way they had never done before. And the process of doing so could also be described and managed with the same rules.

These rules are nothing like anything I’ve seen in any other RPG. That always meant that they were slated to eventually become an article here at CM. But because I knew that at this point in time, I couldn’t quote the actual usage in the adventure to which those rules have been put, I either had to delay the article until that wasn’t a problem, or offer some examples from outside the adventure.

So I started thinking of some, and ended up with a list sufficiently long that I would never cover them all in just one article. With some additional ideas on the side to throw out there for people to use as they see fit.

I’ll develop one or two of these examples in full, touch on some highlights exemplified by the rest, and call it a day.

The mechanics

The mechanics of this process are really simple, but at the same time, quite elegant and capable of deep levels of richness and complexity – if the game system permits it.

They are capable of simulating the design of a new product, a new technology, a new magic item, a new spell, a new chemical, a website, a computer program, you name it. Anything that requires some form of design.

Conceptual & Functional Elements

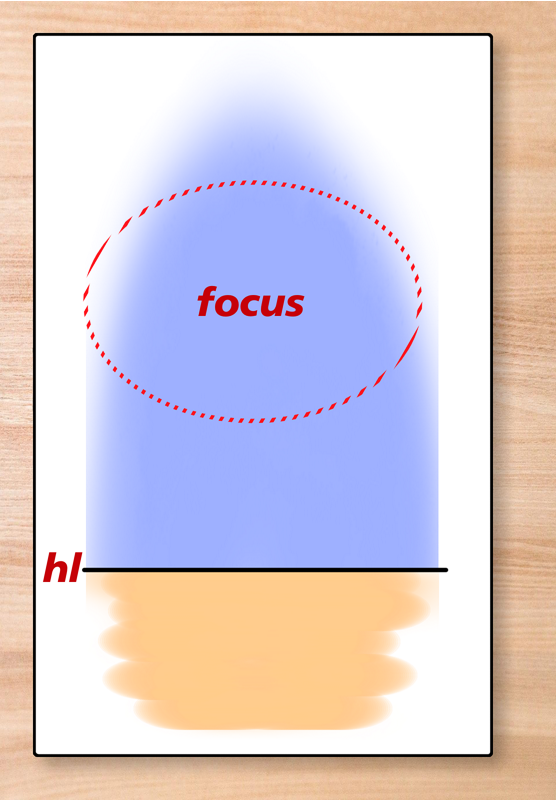

To make the system work, you need to have a clear and detailed design objective, because that’s what the process simulates making.

The functional requirements and possibly their underlying concepts need to be listed. That’s step one of the process. It doesn’t happen instantaneously; the GM should decide how long it takes, based on the character’s expertise in the most relevant skill.

Skill Foundation

But what is the most relevant skill? That’s for the GM to specify, based on the desired end product and the level of specificity of the game system.

It’s even possible that there may be a number of different skills required.

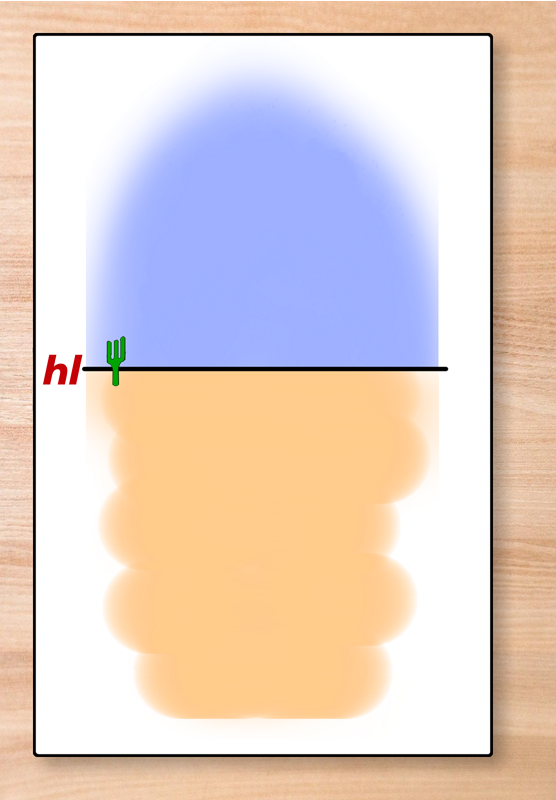

Interval

Incorporating each element of what the end product can / will achieve takes time. The time required is standardized and is referred to as the design interval. This is also specified by the GM based on the desired end result and the expertise of the character. It could be seconds, minutes, hours, days, weeks, or years.

A preliminary discussion of interval selection

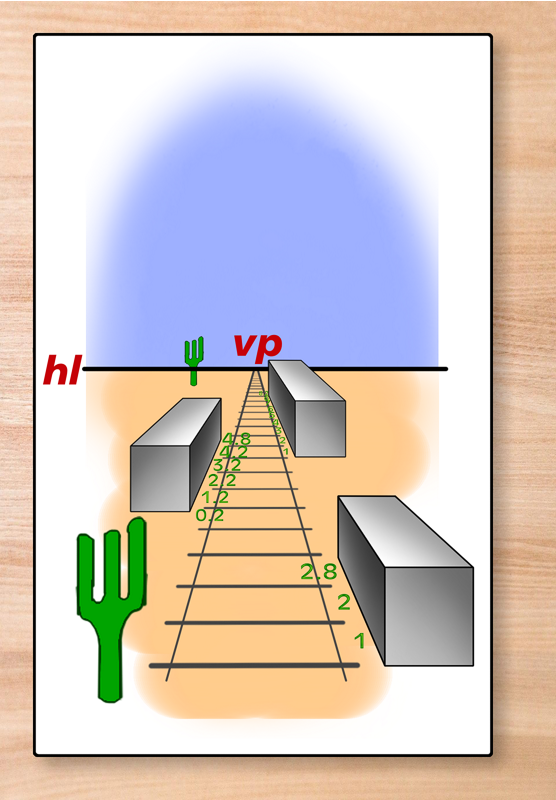

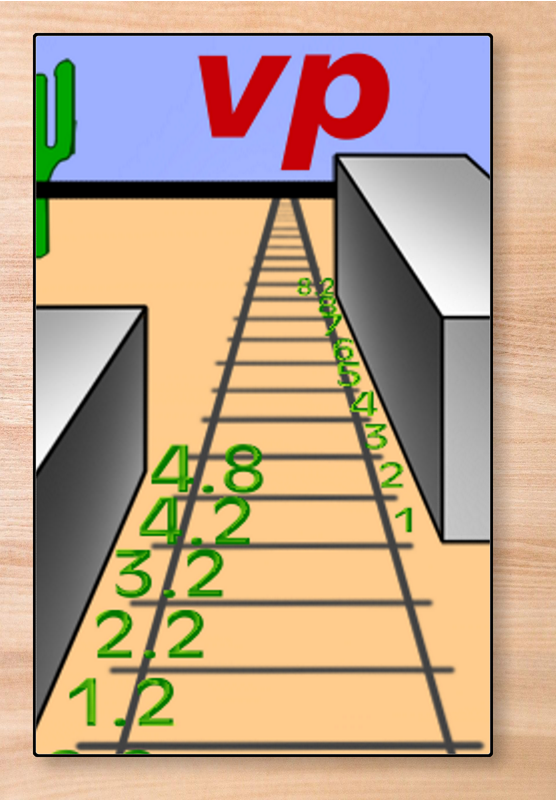

Interval multiplied by the total number of specifications gives the minimum time to complete a project. The actual time spent incorporating any given specification is quite a bit more variable, but under most circumstances, it will be either the minimum or something more. When thinking about intervals, I suggest using the following scales:

Tactical: seconds – Jury-rigging a door, a simple emergency repair, etc.

Positioning: minutes – solving a critical problem of some sort when time is critical.

Professional: hours/days – writing code, crafting a new Grimoire spell

Strategic: days/months/years – developing a plan to achieve a shift in a strategic balance (or imbalance), a campaign to change public perceptions, a new National Constitution, a plan to end national or city-scale dependence on a particular industry.

Industrial: minutes/hours/days/weeks – Developing a prototype or one-off gadget. The wide range is sub-selected by the purpose of the project.

Industrial II: weeks/months – City planning, a new product or system design (eg a new Engine), designing a building or a space station.

Major: months/years/decades – We Choose To Go To The Moon (even if we don’t currently know how), Terraforming, Inventing something current game physics doesn’t permit eg FTL, Time Travel. The latter devolve into Industrial II if there is a working model whose principles are understood, and may devolve further if the technology is commonplace.
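The minimum-time rule stated just before the list of scales (interval multiplied by the number of Specifications) can be sketched in a few lines of Python. The function name `minimum_project_time` and the sample figures (a 2-hour interval and 9 Specifications) are invented for illustration; they are not part of the rules.

```python
from datetime import timedelta

def minimum_project_time(interval: timedelta, specifications: int) -> timedelta:
    """Minimum time to finish: one interval per Specification, with nothing going wrong."""
    if specifications < 0:
        raise ValueError("specifications must be non-negative")
    return interval * specifications

# Hypothetical 'Professional' scale project: 2-hour interval, 9 Specifications.
print(minimum_project_time(timedelta(hours=2), 9))  # 18:00:00
```

Setbacks, Barriers, and extra time can only push the real total above this figure.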

Specifications

This is the GM’s opportunity to add to the list of functional elements. Some may be implied foundational steps ruled necessary in order to achieve a functional element already listed, some may be necessary to convert a prototype into a manufacturing process, and some may simply be parameters that the player hasn’t thought to list. There will almost always be something that the character hasn’t thought to include.

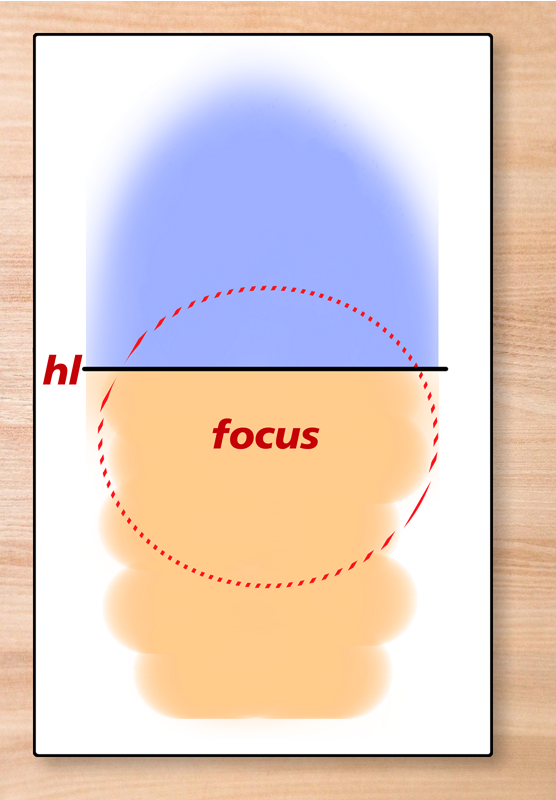

One question that the GM will eventually face is the difference between global effect and specificity. In most cases, specificity is easier to achieve than global effectiveness. It’s a lot easier to find a cure or treatment for one specific cancer than it is to find a universal treatment, simply because “cancer” is a generic label applied to many different diseases with some common elements.

But sometimes, where you don’t want to affect everything, specificity can be just as hard to achieve. If you are designing a new chemical, for example, there may be things that you specifically DON’T want it to react to, and engineering that can be a lot harder than letting things happen – because, if you don’t specify it as a requirement, the GM is free to have the combination have any effect that HE wants.

The scope of the purpose is all-important in this context. A one-off solution to a specific problem is always going to be easier than a global cure-all or even a general solution to a specific type of problem, simply because those reduce the GM’s scope for storytelling. Yes, this is blatant metagaming – so what? It’s in the best interests of the long-term campaign, so that’s fine in my book. It would be worse metagaming if the GM made a blanket ruling of ‘you can’t do that’.

There is also ‘the rule of cool’ to consider. This is a character doing something extraordinary, and bringing abilities to bear on the problem that rarely get shown off. They are character-building.

And, finally, there’s the spotlight issue – does this solve the problem all on its own, or does it require deployment in a specific way; can it in fact require the efforts of the whole team of PCs just to get the solution into the right place at the right time? The first is only satisfactory if this is a last-ditch Hail Mary after the other characters have had their shot at glory and failed; the second makes this a group victory, which inherently creates more opportunities for the action to be tense and dramatic. Both can be good, but the first can also be bad, making the other PCs fifth wheels. This consideration can go in all sorts of directions depending on the circumstances and the purpose of the creation effort. Imagine a situation in which the other PCs have to face certain defeat and possibly death, just to buy the one character with a shot at winning the time that they need?

If it makes for a good story, that’s a big tick. If it will make similar stories harder to tell in the future, that’s a down-check. Neither is the totality of consideration, but either can tip the balance one way or another.

If the GM thinks there are too many Specifications, there are ways that he can conflate two or more into a single entry on the list, making them two or more aspects of the single requirement – for example, on a space station, there might be ‘functions in an Earth orbital environment’ which covers everything from radiation-proofing to vacuum-seals. Applying this to every room / space so that they are independently safe requires a second specification, though. The GM should take the Specification as it ends up as the basis for determining the difficulty.

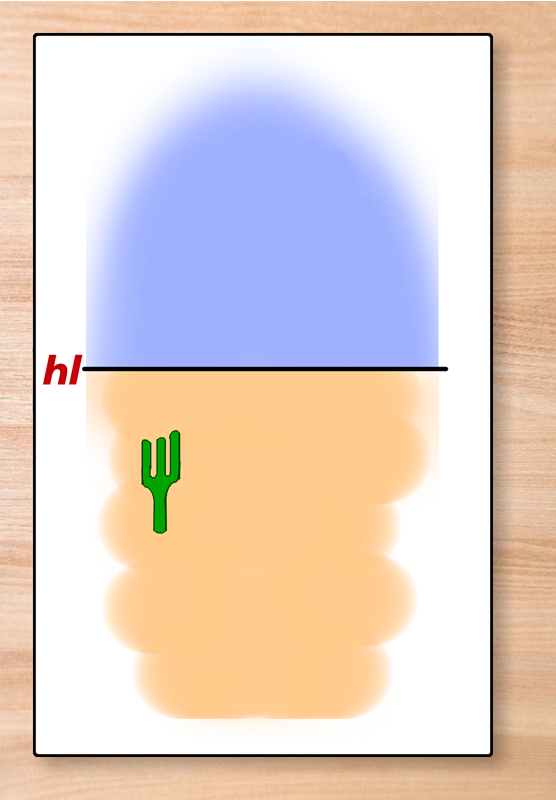

Rolls & Difficulty

Each Specification needs a successful die roll to integrate it into the design. The GM sets the difficulty, aided by a list of successive values of the potential, taking into account the conditions. Each roll consumes one interval of time; that time has to be spent first, with the roll coming at the end.

Rolls that fail by a small amount – and the GM can determine how much this is, on a case-by-case, roll-by-roll basis – may, at his discretion, achieve a partial success. If there is no numeric variable involved, the roll is generally all-or-nothing.

If checkpoints are employed (see below), only the final roll ultimately matters; what the intervening checkpoint results reveal is the path taken to get there, one that can be full of ups and downs, but none of them critical to the final result (but also see the section dealing with critical successes and failures).

Barriers / Problems

Rolls can either succeed or fail. And there can be a gray middle ground offered by the GM in terms of partially meeting the requirements. This then bounces the question back to the player of the character – accept the partial solution, or encounter a Barrier / Setback.

A setback is a situation in which the GM feels that the desired functional Specification can be met, it’s just going to take a little more time. There’s a domino effect involved – the character has to go back one, two, or three steps and implement THAT specification in a different way. They can then work their way forwards, with a bonus to the success of the die rolls, until they achieve the required specification.

A barrier is more difficult to overcome; it adds an additional Specification to the list, inserting it just prior to the point of failure.

99%+ of medicines created in the lab never see human trials. Some of them simply don’t work because there’s an error in the theory on which they are based. Some of them have severe side effects, whether or not they work. And some of them are simply too toxic – they might cure whatever condition they are aimed at, but only at the expense of killing the patient. Those are all Barriers to success, and potentially insurmountable ones.

It’s the GM’s decision what sort of barrier the development process encounters, and it’s a decision based on whether or not they actually want the development process to succeed or fail – which hearkens back to the points made in the previous section.

If you hit a barrier, the time spent has been used discovering that there is a barrier. It does not magically vanish from the clock. The character has pursued a theory to the point of proving that this approach doesn’t work – an essential, if frustrating, part of the real world.
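For GMs who track the Specification list in a spreadsheet or script, the two outcomes described in this section can be sketched as simple list operations. This is only one way to model them: the function names `apply_barrier` and `apply_setback` and the sample Specifications are hypothetical, and the sketch tracks list positions only, not the time already spent or the bonus on the re-rolls.

```python
from typing import List

def apply_barrier(specs: List[str], failed_index: int, new_spec: str) -> List[str]:
    """Return a new list with new_spec inserted just prior to the failed Specification."""
    return specs[:failed_index] + [new_spec] + specs[failed_index:]

def apply_setback(failed_index: int, steps_back: int) -> int:
    """Return the index to resume from after going back one, two, or three steps."""
    if not 1 <= steps_back <= 3:
        raise ValueError("a setback goes back one, two, or three steps")
    # Never go back past the first Specification on the list.
    return max(0, failed_index - steps_back)

# Invented example list; the roll for index 2 has just failed.
specs = ["pressure hull", "life support", "docking port", "power grid"]
print(apply_barrier(specs, 2, "new seal design"))
print(apply_setback(2, 1))  # resume from 'life support' (index 1)
```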

Extra Time

Another option open to the GM in such cases is to apply an ‘extra time’ modifier. This enables them to say, “You succeed but it takes N times as long as you thought it would / should.” You achieve this by looking at the margin of failure and calculating how much extra time is needed to compensate for it. This becomes a little trickier in that chances of success have to be rounded to whole values. The Hero system has the rule that any rounding happens in the character’s favor, and that seems fair enough to me.

It also opens the door for a character to state, “I’m getting close to completion, and have a little time up my sleeve, so I’m going to spend some extra time on each step from here onwards, dotting i’s and crossing t’s. That should improve my chances on each roll.” This is a perfectly legitimate application of the system, but it precludes the GM ‘helping’ the character with extra time – that ‘help’ has already been taken into account.

Extra Time can therefore be used in one of two ways, mutually exclusive. It can be used presumptively by the character to improve their chances of success on what they perceive to be a critical stage, i.e. one in which a partial success isn’t good enough, or it can be used by the GM at his discretion to turn a failure into a partial or complete success. The player’s choice to use extra time actively precludes the GM’s ability to help with extra time. I know I’ve pointed that out before, but reviewers of the draft rule still missed it.

If a player allocates extra time and still fails the roll, it must result in a Setback or a Barrier.

The reason for the exclusion is geometric expansion – two sources of extra time multiply, and the total can escalate out of control too quickly for effective game management. If the player specifies 4x normal time be used proactively, and the GM found that more was needed, you could end up with 4 x 8 = 32 times the normal interval. Neither 4x nor 8x is unreasonable, but the compound of the two takes the system right to the edge. 32 x 32 = 1024 – so if the interval was originally one minute, the player will have spent more than 17 hours getting there.
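The compounding in the paragraph above is easy to check with a short Python sketch. The helper name `compounded_multiplier` is made up for this illustration.

```python
def compounded_multiplier(*multipliers: float) -> float:
    """Multiply together every source of extra time applied to one interval."""
    total = 1.0
    for m in multipliers:
        total *= m
    return total

# Player takes x4 proactively, GM then needs x8 on top of that.
print(compounded_multiplier(4, 8))  # 32.0

# Two x32 sources stacked on a one-minute interval.
minutes = 1 * compounded_multiplier(32, 32)
print(f"{minutes / 60:.1f} hours")  # 17.1 hours
```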

With intervals of one minute, you can reasonably expect the task to be complete in minutes – anything more than say 90-120 breaks the limit of being ‘reasonable.’ If you had a task that you thought was going to be 1-4 minutes in time, and decided to take the full 4 minutes to do it well, would you be happy pursuing that path for more than 2 hours, or would you stop and look for a faster way? Even if it meant starting over from an earlier point in the process?

I know what my answer would be.

The player can also set a hard limit on the amount of extra time the GM can force them to use before they fall back to an earlier step and try a different approach. Neither the player nor the GM has to actually describe that ‘different approach’ – the rules assume that one exists. They still use the time that they spent chasing down what they now perceive to have been a blind alley.

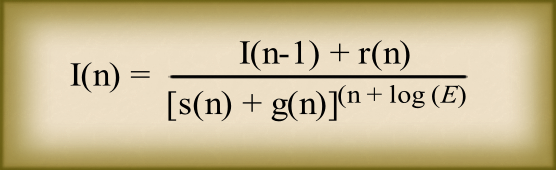

xN time = 5% x log(N)/log(2) is the usual pattern, but the “5%” then has to be modified to fit the mechanics of the roll. For a 3d6-based system, 18 (maximum)-3 (minimum) = 15 (range), and 5% x 15 (range) = 0.75. For a d20, the base number would be 5% of 19, or 0.95. All rounding should be in the character’s favor, i.e. round up.

Time x1 1/3 = +0.3 (3d6) = +0.4 (d20) = +2 (%)

Time x 1.5 = +0.4 (3d6) = +0.6 (d20) = +2.9 (%)

Time x 2 = +0.75 (3d6) = +1 (d20) = +5 (%)

Time x 3 = +1.2 (3d6) = +1.5 (d20) = +8 (%)

Time x 4 = +1.5 (3d6) = +1.9 (d20) = +10 (%)

Time x 5 = +1.8 (3d6) = +2.2 (d20) = +11.6 (%)

Time x 6 = +2 (3d6) = +2.5 (d20) = +13 (%)

Time x 8 = +2.25 (3d6) = +2.85 (d20) = +15 (%)

Time x 10 = +2.5 (3d6) = +3.2 (d20) = +16.6 (%)

Time x 12 = +2.7 (3d6) = +3.4 (d20) = +18 (%)

Time x 16 = +3 (3d6) = +3.8 (d20) = +20 (%)

Time x 20 = +3.25 (3d6) = +4.1 (d20) = +21.6 (%)

Time x 24 = +3.4 (3d6) = +4.4 (d20) = +23 (%)

Time x 30 = +3.7 (3d6) = +4.66 (d20) = +24.5 (%)

Time x 32 = +3.75 (3d6) = +4.75 (d20) = +25 (%)
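For multipliers that are not on the table, the pattern given before it (5% x log(N)/log(2), scaled to the dice in use) can be computed directly. The following is a minimal Python sketch; the function name `extra_time_bonus` and the dictionary of step sizes are my own labels, with the step values taken from the text (0.75 for 3d6, 0.95 for d20, 5 for d%). It returns unrounded values, so some results will differ slightly from table entries that have already been rounded in the character's favor.

```python
import math

# Bonus gained per doubling of time, per dice mechanic (5% of each roll's range).
STEP_PER_DOUBLING = {"3d6": 0.75, "d20": 0.95, "d%": 5.0}

def extra_time_bonus(multiplier: float, dice: str = "d%") -> float:
    """Unrounded bonus for spending `multiplier` times the normal interval."""
    if multiplier < 1:
        raise ValueError("extra time needs a multiplier of 1 or more")
    return STEP_PER_DOUBLING[dice] * math.log2(multiplier)

for n in (2, 4, 8, 16, 32):
    print(n, extra_time_bonus(n, "3d6"), extra_time_bonus(n, "d20"), extra_time_bonus(n))
```

Calling `extra_time_bonus(14)` gives roughly +19 on d%, a value the table does not list.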

I would not extend the table further than that without explicit permission from the player – in fact, I would probably get such permission far sooner than the table implies. “You’ve spent 10x as long on this as you thought it would take, and you’re not sure you’re anywhere near a solution. You can either call the attempt a failure and deal with the consequences, or you can keep going in hopes of finding a solution.” And then revisit that question at 20x and 30x. Or do it by eights, or fours. (It can be worthwhile, once the base system is understood by the player, to get an indication from them of what time-checks they want you to use – remembering that this does not intentionally spend extra time on this stage of the design process; it caps the amount of extra time the GM can use before consulting the player).

Extra time applies only to the current Specification; the interval resets for the next one.

A clarifying note

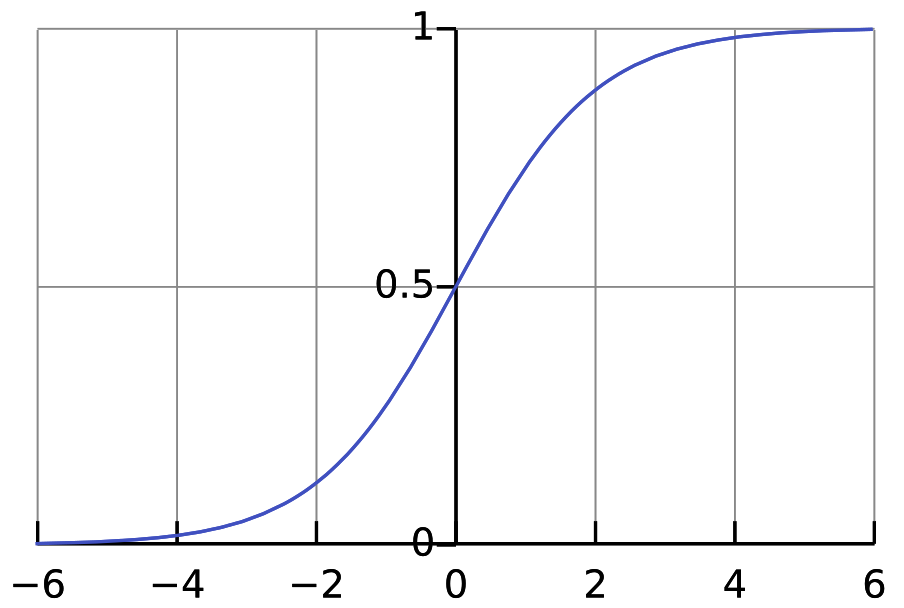

3d6 have a nonlinear probability curve, but the system deliberately ignores this. That has consequences, which in turn have consequences.

The ‘round in the character’s favor’ rule covers a lot of the resulting issues; it causes a flattening of the non-linearity of the probability curve, undervaluing the most probable results and overvaluing the extremes, but not by so much that it can’t be tolerated.

The net effect of this is to make the roll a little more ‘knife edge’, because success by any amount is a success (and so the flattening of the best results can be ignored), while failure is made a little more probable. But this is mitigated by the round-in-favor rule, and the availability of partial successes further softens the impact to produce a system that is intuitive at the game table rather than robustly perfect in its statistical modeling.
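To see how uneven the 3d6 curve actually is, the exact odds can be enumerated. This Python sketch assumes a roll-under check (roll the target number or less on 3d6); that mechanic, and the function name `chance_3d6_at_most`, are assumptions made for the illustration.

```python
from fractions import Fraction
from itertools import product

def chance_3d6_at_most(target: int) -> Fraction:
    """Exact probability that 3d6 totals `target` or less."""
    rolls = [sum(dice) for dice in product(range(1, 7), repeat=3)]
    return Fraction(sum(1 for r in rolls if r <= target), len(rolls))

# How much is a +1 worth at different target numbers?
for target in (8, 11, 14):
    gain = chance_3d6_at_most(target + 1) - chance_3d6_at_most(target)
    print(target, float(chance_3d6_at_most(target)), float(gain))
```

A +1 near the middle of the curve is worth more than 11 percentage points, while the same +1 at a target of 14 is worth under 5. That is the non-linearity the flat conversion chooses to ignore.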

A second clarifying note

Rounding for a die roll always results in integer values – you can’t roll “3.6” on 3d6, it always collapses into a 3 or a 4 – and the round-in-favor rule makes this explicitly a 4.

Equally, a 3.2 is actually a 4.
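In code, rounding in the character's favor is a ceiling operation when a higher number is better for the character. This is a minimal sketch on that assumption; the name `round_in_favor` is hypothetical, and the small tolerance is my own addition to stop floating-point noise from bumping an exact whole number up by one.

```python
import math

def round_in_favor(value: float) -> int:
    """Round a fractional bonus or target up to the next whole number."""
    # Subtract a tiny tolerance so a value like 3.0000000001 stays at 3.
    return math.ceil(value - 1e-9)

print(round_in_favor(3.6))  # 4
print(round_in_favor(3.2))  # 4
print(round_in_favor(3.0))  # 3
```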

Rounding errors are a fact of life. Compression of 5% of a 1-100 range to a 3-18 range (3d6) or 1-20 range (d20) is always going to introduce them anyway. In fact, they are so ubiquitous that their absence is the exception, not the expectation. Don’t stress about it; there are far greater sources of error that can and will drown this out, even in the course of a single project.

A third clarifying note

To be statistically robust, the table only needed entries for 2^N x Time – 2, 4, 8, 16, 32. I’ve included selected other values because they are likely to occur in the real world (x3, multiples of x5), and because they help players and GMs visualize the curve, ie the relationships between values.

There are enough results on a d% to make the curve appear smooth despite the rounding. That’s not the case with other dice structures.

There’s no real need for a Time x 14 entry, for example – so none was included.

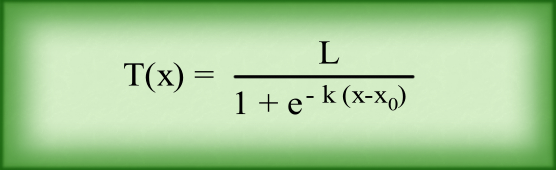

Metaspecifications

I can only think of one of these, but I’m making it a general category in case GMs find others.

“I want / need to complete this project in half the usual time”.

Okay, so halve the interval, and then think of the downsides.

If extra time gives a bonus to success, less time should give a penalty to all rolls.

Multiply 32 x (1 minus the fraction of time) and look up / calculate the modifier for the resulting ‘extra time’ multiple. Double the resulting modifier and make it bad instead of good.

The following are intended to show how it’s done (and provide a bit of a cheat sheet), not to be a comprehensive table of results.

9% Time Reduction = Time / 1.1 = 32 x (1 – 1 / 1.1) = 3 = -7.9%

17% Time Reduction = Time / 1.2 = 32 x (1 – 1 / 1.2) = 5 = -11.6%

23% Time Reduction = Time / 1.3 = 32 x (1 – 1 / 1.3) = 7 = -14%

29% Time Reduction = Time / 1.4 = 32 x (1 – 1 / 1.4) = 9 = -15.85%

33% Time Reduction = Time / 1.5 = 32 x (1 – 1 / 1.5) = 10 = -16.6%

37.5% Time Reduction = Time / 1.6 = 32 x (1 – 1 / 1.6) = 12 = -17.9%

42% Time Reduction = Time / 1.7 = 32 x (1 – 1 / 1.7) = 13 = -18.5%

44% Time Reduction = Time / 1.8 = 32 x (1 – 1 / 1.8) = 14 = -19%

48% Time Reduction = Time / 1.9 = 32 x (1 – 1 / 1.9) = 15 = -19.5%

5% Time Reduction = 95% Time Taken = 32 x (1 – 0.95) = 1.6 = -3.4%

25% Time Reduction = 75% Time Taken = 32 x (1 – 0.75) = 8 = -15%

30% Time Reduction = 70% Time Taken = 32 x (1 – 0.7) = 9.6 = -16.3%

Time / 2 = 32 x (1 – 1/2) = 16 = -20%

Time / 3 = 32 x (1 – 1/3) = 21 1/3 = -22%

Time / 4 = 32 x (1 – 1/4) = 24 = -23%

Time / 5 = 32 x (1 – 1/5) = 25.6 = -23.4%

Time / 6 = 32 x (1 – 1/6) = 26 2/3 = -23.6%
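The cheat sheet above can be reproduced with a short function. This Python sketch works in d% points and makes a few assumptions that should be read as mine rather than as part of the rules: the name `reduction_penalty`, the clamp to zero when the equivalent multiple falls below x1, and the `double` flag. The flag exists because the listed values match the modifier before the doubling step described in the text, so the sketch lets the GM apply that doubling or not. Several cheat-sheet rows also round the equivalent multiple to a whole number first, which this sketch does not do.

```python
import math

def reduction_penalty(fraction_of_time: float, double: bool = False) -> float:
    """Penalty in d% points for finishing a step in `fraction_of_time` of the interval."""
    if not 0 < fraction_of_time < 1:
        raise ValueError("fraction_of_time must be between 0 and 1")
    equivalent = 32 * (1 - fraction_of_time)  # the 'extra time' multiple to look up
    if equivalent <= 1:
        return 0.0  # assumption: trivially small reductions carry no penalty
    penalty = -5.0 * math.log2(equivalent)
    return penalty * 2 if double else penalty

print(reduction_penalty(0.5))               # -20.0, matching the Time / 2 row
print(reduction_penalty(0.75))              # -15.0, matching the 25% reduction row
print(reduction_penalty(0.5, double=True))  # -40.0 with the doubling applied
```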

Add an extra Specification to the start – “Accelerated Development” – and another to the end, “Minimal Testing”.

The GM gets to add a free “unwanted side effect”.

If the purpose could be described as “Industrial II” or higher, add another “Early Release”, and the GM can add a second free “unwanted side effect” or an “application restriction” – which reduces the effectiveness of the solution but usually doesn’t make it completely useless for the intended purpose. “Takes twice as long to have an effect” is about the softest choice.

If the character fails ANY of the extra specifications, there IS no way to complete the project in the time desired.

The character has two or three options:

1. The GM can rule that some Specification carried over from a base model is affected as though it were a Specification that had failed. This option is ONLY available in that specific circumstance.

2. Revert immediately to the standard timeline, crossing out the accelerated development Specifications (but not the consequences of their having been there).

3. Keep the accelerated development timeline but further compromise the effectiveness of the project with partial solutions that are twice as bad as those normally encountered.

Regardless, the time spent trying to find a method of achieving the accelerated development is gone.

Slices Of Time

If you study the numbers in the table closely, you will see that the arrangement is non-linear (as you would expect with logarithms involved). Four attempts at Time x 8 are 4 attempts at +15. You can’t conflate those into +60, but the combination is obviously going to be somewhere between that value and +15 – you can derive an algebraic expression for this specific series of numbers, but it’s not worth the effort. Spending all that time on one attempt gives a total bonus of +25%, and it’s intuitively likely that this is below the compounded value. That means that it appears worthwhile for the researcher to divide up their time into smaller slices and multiple attempts, trying multiple different paths to success. What’s more, it is also logical to make all those attempts concurrently, so that you achieve a greater likelihood of success in a fraction of the time that margin of success would otherwise incur.

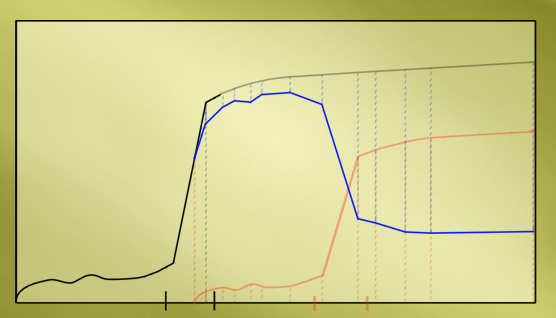

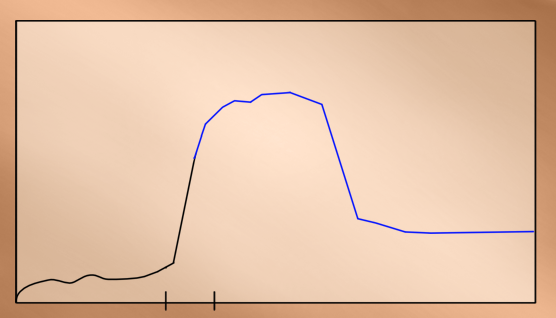

I’ve described this logic in detail because it is in this detail that you discover the flaw in this arrangement – the system assumes that you are doing this already. This ‘suggested’ layering of processes is how you get to the +15% in the first place. So this ‘logic’ is counting the benefits twice. And that’s how you get to a modifier somewhere in the vicinity of +50% when the system dictates +25%. It’s a subtle but definite attempt to cheat the system. Those 4 attempts at +15% simply mark 1/4, 1/2, 3/4, and completion ‘checkpoints’ on the path to a net +25% in the final roll.

Checkpoints can be useful with long intervals, describing the process and its progress toward ultimate success or failure in the integration of this specific requirement. The character can make 3 rolls at +15% or its system equivalent, which the GM interprets as measuring progress – a success doesn’t get the character all the way to the next step, and a failure doesn’t obstruct forward progress. They then make the fourth roll at the indicated +25% for ultimate success or failure in this step.

Greater verisimilitude comes from the use of cumulative time for these rolls – in the case of this example, 8x, 16x, 24x, and finally 32x. This ‘weights’ the intermediate results to reflect the character discarding paths that seem to be going nowhere and homing in on their ultimate solution and its success or failure.
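The cumulative-time checkpoints in the example above can be generated for any total multiplier and number of checkpoints. This is a minimal Python sketch in d% points; the function name `checkpoint_bonuses` and the even spacing of checkpoints are assumptions for illustration.

```python
import math
from typing import List, Tuple

def checkpoint_bonuses(total_multiplier: float, checkpoints: int) -> List[Tuple[float, float]]:
    """(cumulative time multiple, unrounded d% bonus) for evenly spaced checkpoints."""
    step = total_multiplier / checkpoints
    if checkpoints < 1 or step < 1:
        raise ValueError("each checkpoint must cover at least one normal interval")
    return [(step * i, 5.0 * math.log2(step * i)) for i in range(1, checkpoints + 1)]

for multiple, bonus in checkpoint_bonuses(32, 4):
    print(f"x{multiple:g}: +{bonus:.1f}%")
```

For the x32 example this gives +15 at x8, +20 at x16, about +22.9 at x24 (which the table rounds to +23), and +25 for the final roll at x32. Only that last roll decides the Specification.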

Critical Successes do nothing but affect character confidence. If a Critical Failure occurs on a checkpoint roll, the GM should invent a number off the top of their head for progress, which the character will know not to be correct – but he doesn’t know how badly incorrect it is. The resulting confusion, uncertainty, and doubt is the consequence of the failure. These interpretations do NOT apply to the final roll needed to complete the Specification’s implementation, they are only about indicating the progress-to-date. But they do serve one additional function: they remind the players that the project is ongoing. The GM should look for opportunities to insert ‘progress text’ and event descriptions, even little roleplay moments, into the ongoing narrative on a regular basis.

Notes regarding Burnout and Fatigue

While ‘burnout’ and ‘compounded fatigue’ are real world phenomena, they are deliberately ignored by the system in favor of game-play.

The potential for large-scale intervals, for designing and constructing a space station for example, implies that characters don’t have to focus continuously on the task, but can interrupt it as necessary; time not spent on the project doesn’t count toward it (though a generous GM might allow that the problems are still ticking over in the back of the character’s mind and permit 10%, 5%, or 1% of such time to contribute to the total).

I thought about excluding sleep time from that, but there are many documented cases of problems being solved ‘on awakening’ which suggests that the subconscious keeps working on problems even while sleeping. That in turn suggests that this would only complicate things as an exclusion or as a separate ‘passive time’ accumulation; it’s a detail that is either unnecessary (interval less than hours) or undesirably complicated (intervals more than minutes).

So they have been left out for cleaner game-play.

Endurance – if you have it

In any system where Endurance gets tracked, skill use – concentration on a task – should cost Endurance. The amount should be determined by the recovery frequency and amount.

In the hero system, Endurance costs are generally determined by dividing the active cost by 5, then applying any modifiers to that. I originally split modifiers into two groups – one that affected END cost and one that didn’t – because it didn’t make sense to me that “reduced END cost” should increase the END cost of using a power, while “increased END cost” decreased the END cost by reducing the Active cost.

In the Hero system, characters will recover 2-4 END or more twice per 12-second turn. That’s 4-8 (plus) in 12 seconds, with the character’s Speed determining how many opportunities they get to act, i.e. to spend that Endurance. It’s geared to relatively low levels of powers – 4-dice attacks costing 4 END each. But it can be made to scale to more epic power levels, and that’s what my original home rules were intended to do. I wanted Superman types who were epic but ran out of steam and had to pause to rest for a while, creating windows for other characters to have the spotlight, and lower power-level characters with low END costs who could act more frequently and more continuously, and all points in between.

If you consider the Purchase price plus improvement cost of skills in the Hero System to be the active cost, then this same division by 5 works perfectly. Skills at very high level (15+) cost 2-3 END, skills at competent levels (10-15) cost 1-2 END, skills at a relatively low level (5-10) cost 1-2 END, and skills at the amateur level (1-5) cost 0-1 points. The bigger the potential game impact, the bigger the END cost.

The current version of the homebrew game system rules is d% based. Many of the stats can range higher – a lot higher – END reserves being one of them. It’s not unusual to have 50-60 END, recover 5-10 per turn, and act just once in a turn. But END costs are also higher, and you can do more in a turn. Skills are purchased with Skill Points, which in turn are paid for with character points, so characters with high capacities for learning skills can get more skill points per character point. Skill points spent on a skill divided by 20 almost works, but penalizes skill-heavy characters; instead, there’s a flat 1, 2, 3, or 4 cost based on skill level.

In implementing the system described in this post, with multiple skill rolls required over a time frame specified by the GM, I would specify a 2-END cost, that cannot be recovered until the end of the process. If there are 8-10 Specifications (not uncommon), that’s 16-20 END, lowering the character’s capacity to act in the meantime without making them completely helpless. That’s within the range of normal people in the system. Furthermore, taking a substantial break (one interval) would deduct END Recovery from that accumulated total.

For the standard Hero System, I would do the same, but price the END cost per Skill Roll at 1.

D&D and Pathfinder don’t track END, but they do have the concept of “Shock Points” – the character gets as many of those as they do hit points, and they go down with Damage just like regular hit points. I would contend that these represent mental fatigue amongst other things, and some attacks do non-lethal Shock damage instead of regular damage. They are normally fully recovered at the end of a character’s turn, or once a minute, or something like that, and – like hit points – the pool can grow quite large at higher levels.

For low-level campaigns, I would use a similar approach to the base Hero system – non-recoverable shock points, 1 per skill roll. For mid-level campaigns, I would make it 2 points, and for high-level campaigns, 3. Note that this doesn’t describe the current levels of the characters, but where those characters are expected to be at the end of the campaign / for the majority of the campaign. This limits the capacity of low-level characters in a higher-level campaign so that characters can feel like they are growing in competence as they progress.

Of course, if a character runs out of END in the process, they suffer from Burnout and need to take a significant break of 1 interval. If the intervals are seconds or minutes, that’s not all that significant; if it’s hours or days, that’s inconvenient; if it’s weeks, months, or years, that’s a lot more painful. The first interval restores 2 END; if the break is extended another interval, this rises to 4, then 8, 16, 32, and so on, up to the original maximum.
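The END bookkeeping described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function names are invented here, and the burnout figures (2, 4, 8, 16...) are read as the running total restored after each successive interval of rest, which is one reasonable reading of the rule rather than the only one.

```python
def end_spent(skill_rolls: int, cost_per_roll: int = 2) -> int:
    """END tied up by the project so far (not recoverable until it ends)."""
    return skill_rolls * cost_per_roll


def burnout_recovery(intervals_rested: int, max_end: int) -> int:
    """Total END restored after resting: 2, 4, 8, 16, ... capped at max_end.

    Assumption: the doubling figures are totals, not per-interval amounts.
    """
    if intervals_rested <= 0:
        return 0
    return min(2 ** intervals_rested, max_end)


def intervals_to_full(max_end: int) -> int:
    """Number of rest intervals before the doubling reaches max_end."""
    n = 0
    while burnout_recovery(n, max_end) < max_end:
        n += 1
    return n


# 8-10 Specifications at 2 END each lock up 16-20 END.
print(end_spent(8), end_spent(10))
# A character with 50 END needs six intervals of rest to recover fully.
print(intervals_to_full(50))
```

For the standard Hero System or a low-level D&D campaign, pass `cost_per_roll=1`; for a high-level D&D campaign, `cost_per_roll=3`.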

If the system doesn’t have any mechanism for tracking Endurance, just ignore this whole section.

Critical Successes and Failures

These don’t apply to every game system but are generally profound when present.

A critical success halves the interval for that Specification and may optionally reduce the interval for subsequent Specifications, reflecting the ‘stroke of genius’ or ‘flash of inspiration’ inherent in the concept of a critical success.

Optional: A breakthrough can carry extra momentum into the next stage, worth a +5% or +10% bonus (+1 or +2 in other game systems).

Optional: The GM can rule that the breakthrough makes a future Specification redundant or simplified to the point of being incorporated into this Specification, removing that future item off the list completely.

Optional: sticking with the main proposal, the GM has to decide how much the interval reduces by. I recommend values of 10%, 20%, and 25% be considered, but 15% is a good compromise.

A critical failure should be a failure like any other, but worse, or may be interpreted by the GM as a Barrier to a later step, only discovered when that stage of the process is achieved.

That turns the failure into a time bomb with a hidden clock – the player will know that it’s ticking but not when it will blow up under their feet. For the moment, the character thinks they have succeeded even if the player knows better, and this should be made clear to the player. However, the solution found to that Specification contains a hidden defect that only time will reveal.

The GM should devote some thought to what this hidden defect might be – it shouldn’t be anything that would be obvious in an earlier step, it should be something subtle but catastrophic in terms of the intended purpose. Designing an air-breathing jet engine only to discover that it needs to be kept underwater in operation because parts would otherwise overheat, makes the design worthless. The solution is to replace the affected parts with something more heat-tolerant, or recalculate and remodel the engine to divert the excess heat away from the affected parts. The choice of solution can have a profound impact on the look-and-feel – an experimental jet engine with huge radiator fins is SO steampunk or pulp!

Success at last!

Eventually, the last roll required will be achieved with the last Specification successfully incorporated. The results are now ready for use as specified in the purpose. But here’s where the fun starts – anything NOT specified in the design is free for interpretation by the GM. Side effects are always possible. The goal should never be to make the results useless, but to make the experience interesting. So save these ideas for occasions when the application of the results themselves is less interesting than it should be. Remember both the “Rule of Cool” and that no product is EVER perfect.

Optional Rule, All systems

Sooner or later, a setback will require the character to repeat part of a series of rolls, looking for an alternative route to success (the most obvious approach having failed). That potential is baked into the system, deliberately.

The GM can choose to provide a +5% or +1 modifier to subsequent iterations of a repeated step, reflecting the thought that the character has already put into the integration of that Specification.

This does two things, one more important than the other (okay, maybe three). First, it slightly changes the balance between setbacks and partial solutions in favor of the first because it weakens the penalty involved; but to my mind it doesn’t do so by enough to warrant concern.

Second, it adds a hard limit to the number of times a single step can recur before it becomes an automatic success (critical successes and failures notwithstanding). Automatic success rolls still need to actually be rolled to test for critical success or failure, but such rolls take no time. This puts a cap on the process. That’s the most important consequence of this optional rule, I think.

And third, it changes, slightly, the way a player looks at the system in their favor. That can be an important element of the decision-making process when it comes to using this system at all. Given that the system itself enriches the tactical options open to the player and enriches the storytelling at the game table, its existence within the operating rules of the campaign is a benefit to the GM; but a benefit that remains only potential until and unless the rules are actually used. If they then become tedious, they are unlikely to be used again; if they add to the tension and drama, and hence the entertainment value of the game, players are more likely to call upon them again. This is an in-between consequence, starting small and growing with successive uses of the system.

An Automatic Success is never truly possible if the system has critical successes and failures, because they override a success if rolled. However, you can approach that point – 17/- on 3d6 is just about there. But there’s always that chance to roll box cars. It doesn’t matter if your chance is 28/- (it would never actually get that high) – box cars or their equivalent is ALWAYS a failure.

If you don’t have criticals, then automatic success does become possible, but it never becomes possible to automatically get a critical success because this set of mechanics doesn’t have them.
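The “hard limit” that the repeat bonus creates is easy to compute. The sketch below assumes the bonus is cumulative (each repetition of a step adds another +1 or +5%), and the function name and the d% ceiling of 100 are assumptions made for illustration; the 3d6 ceiling of 17/- is the practical cap mentioned above.

```python
def repeats_until_ceiling(target: int, bonus_per_repeat: int, ceiling: int) -> int:
    """Repetitions needed before target + repeats * bonus reaches the ceiling."""
    if bonus_per_repeat <= 0:
        raise ValueError("bonus_per_repeat must be positive")
    if target >= ceiling:
        return 0
    gap = ceiling - target
    # Integer ceiling division: round the number of repeats up.
    return -(-gap // bonus_per_repeat)


# 3d6 roll-under: a 9/- step with +1 per repeat reaches 17/- after 8 repeats.
print(repeats_until_ceiling(9, 1, 17))
# d%: a 45% step with +5% per repeat reaches 100% after 11 repeats.
print(repeats_until_ceiling(45, 5, 100))
```

Even a badly penalized step is therefore bounded, which is the cap on the process described above.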

Before moving on, I want to highlight that there’s at least one other Optional Rule described within the examples, so don’t skip over them too quickly, even if you think you understand the functioning of the system or they are referring to a game system other than the one you’re using.

Selected Full Examples

I kept thinking up new ways of using this system. Too many to offer them all as fully-worked examples. So I have divided them into three categories: a couple of full examples to illustrate the application of the system, a few examples in which some key conceptual element can be brought to light, and which are discussed briefly and perhaps partially worked up in furtherance of that, and a few more that are going to get nothing more than a high-level summary, or perhaps even less.

Using the system to design a new magic spell for the Hero System

Interpreting this system for the base Hero system is conceptually simple – one Specification for the power or skill or ability that’s going to be used to simulate the results from a game mechanics standpoint, or a general conceptual description simplified as much as possible (stripped of anything detailed, in other words), and one Specification per modifier.

Plus one Specification that has to be #2 on the list – Ad-hoc vs Permanent.

Ad-hoc vs Permanent magics

Ad-hoc spells are here-today, gone-tomorrow deals, you get one shot at successfully using them. I highlighted the word successfully, because the GM can arrange circumstances in which the first shot fails – the goop doesn’t hit the target or whatever – and it’s not fair to make the character go through the whole process again. One interval is enough to whip up a whole new batch, if necessary. But when the effect that the character has invested their time in does have the effect specified, the mechanism for delivering that effect fizzles and is gone.

Some circumstances – constructing a new chemical – may seem to preclude this from being reasonable. That’s tough luck for the creator, it still happens. But for a spell, this is entirely reasonable.

Permanent magics are stored or recorded somewhere and can be used again. Depending on how magic is configured in your game mechanics – and there are multiple options – this can usually be regarded as creating a new ability for the character; the required character points are then spent, and it becomes a permanent addition to their character sheet.

Once this Specification is successfully incorporated, the GM can assign a blanket modifier to all the subsequent skill rolls. He should be consistent but open to exceptions. If the GM doesn’t want ad-hoc spell use, apply a -50%. If the GM thinks that permanent magics, with side effects that are tolerable on a recurring basis, should require more effort dotting i’s and crossing t’s, then a -20% for permanent solutions is reasonable. If they want to emphasize the flexibility of magic, they can apply a +25% to ad-hoc spell creation.

Increasing the likelihood of success on the skill rolls shifts the actual time closer to the minimum. Decreasing it adds to the likelihood of some sort of roadblock by making success less likely. This will generally slow the project down.

All this is relative, of course. If you have 8 or less in a skill, a -4 modifier is huge, especially taking into account the non-linear nature of a 3d6 roll. If you have 13/-, that same modifier is significant but not catastrophic; if you have 17/- or 18/-, it’s niggling but little more.
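To put numbers on that non-linearity, the chance of rolling a given target or less on 3d6 can be counted directly from the 216 possible rolls. This is a plain probability sketch; the function name is hypothetical, and it ignores any critical-failure rule.

```python
from itertools import product


def chance_3d6(target: int) -> float:
    """Probability of rolling `target` or less on 3d6 (no criticals)."""
    rolls = [sum(dice) for dice in product(range(1, 7), repeat=3)]
    return sum(r <= target for r in rolls) / len(rolls)


for skill in (8, 13, 17):
    before = chance_3d6(skill)
    after = chance_3d6(skill - 4)
    print(f"{skill}/- with a -4 modifier: {before:.1%} -> {after:.1%}")
```

An 8/- skill falls from roughly 26% to under 2%, which all but rules out success; 13/- falls from about 84% to 37.5%; and 17/- only drops from near-certainty to about 84%.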

The other way to represent this distinction is with Interval. Almost by definition, the Interval for ad-hoc spell use is seconds; I would set the Interval for the crafting of permanent magics at hours if not days. Given how many seconds there are in a day (86400), that’s a massive ratio. Using the divide-by-5 and convert to log-2 scaling of the Hero System, that’s a little more than +14. But hours or minutes seem too short for realism and game balance to be maintained.

What CAN be done is to impose another blanket modifier to reflect the breakneck pace of development. Not the whole -14, though – that makes the system just about unusable. Maybe half that – a -7 makes ad-hoc more difficult. -7 on a d20 would be equivalent to -35% chances, on a 3d6 it would be more – an eyeball, seat-of-the-pants estimate, the same as I would make when GMing, is between -40% and -45%, and probably at the lower end of that range.
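The arithmetic behind the +14 and the halved -7 can be checked quickly. The sketch follows the divide-by-5, log-2 conversion named in the text; the function and variable names are illustrative only, and the 5-points-per-step conversion applies to a d20 alone.

```python
import math

SECONDS_PER_DAY = 24 * 60 * 60  # 86400, as quoted in the text


def time_ratio_modifier(ratio: float) -> float:
    """Divide the time ratio by 5, then take the base-2 logarithm."""
    return math.log2(ratio / 5)


full = time_ratio_modifier(SECONDS_PER_DAY)  # a little more than 14
half = round(full / 2)                       # the suggested compromise, 7
d20_points = half * 5                        # each d20 step is worth 5%

print(f"full modifier: {full:.2f}")
print(f"half modifier: -{half}, or -{d20_points}% on a d20")
```

The 3d6 equivalent depends on the skill level being modified, which is why the text can only offer an eyeball estimate for it.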

Bearing in mind that there might already be a blanket modifier for ad-hoc use, this can either make the process almost untenable, or can moderate the net impact back toward neutral.

The Example: Eyes Of Hodur

I’m going to lift a spell from the Grimoire of one of the characters in my Zenith-3 campaign. Credit to Nick Deane for the source (and the character). The numbers for the modifiers might not be (almost certainly won’t be) the same as in the official material but it will be close enough.

Skill: Spell Use

Value: 13/-

Interval: Seconds (ad-hoc spell)

Blanket Modifier: -25% = -25/5 * 0.75 (from earlier in the system description) = -3.75, round to -4. Net roll: 9/-.

Conditions: Magic Workshop +2, significant past experience at crafting spells +2. Net roll: 13/-.
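The conversion from a percentage modifier to a 3d6 modifier, and the resulting net roll, can be sketched as follows. The 0.75 factor comes from the text; rounding to the nearest whole number (halves away from zero) is an assumption, since the example only shows -3.75 becoming -4, and the function names are invented for illustration.

```python
import math


def percent_to_3d6_modifier(percent: float, scale: float = 0.75) -> int:
    """Convert a d% modifier to a 3d6 modifier: percent / 5 * scale, rounded."""
    raw = percent / 5 * scale
    # Round to nearest, halves away from zero (assumed rounding rule).
    return int(math.copysign(math.floor(abs(raw) + 0.5), raw))


def net_roll(base: int, *modifiers: int) -> int:
    """Apply any number of modifiers to a roll-under skill value."""
    return base + sum(modifiers)


blanket = percent_to_3d6_modifier(-25)  # -4
print(net_roll(13, blanket))            # 9, after the blanket modifier
print(net_roll(13, blanket, +2, +2))    # 13, with workshop and experience
```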

Spell Description: Eyes of Hodur

School: Mind Magic

Effect: 2d6 Flash vs entire sight group + Range Modifier x2 (10 pts)

Modifiers:

Skill Roll Required -1,

Incantation -1,

Gestures -1,

Linked -1 GM’s Note: Linked to what? Assumed valid

Can Use Normal Mana or Mana Battery + 1/4,

Extended Duration: 30 minutes per point of success +3,

Only Affects One Target /4

Modifiers’ Totals: -4 +3 1/4 /4

Base Cost: 30

Active Cost: 25

Net Cost: 5

Mana Cost: 1

END Cost: 5

Range: 30′ (Flash has a range of 5″ for every 10 active points, round down – power description) GM’s Note: Range miscalculated, from the rule cited should be 25/10 x 5 = 2.5 x 5 = 12.5″ = 24m when rounded down.

In the base Hero system, there is no division between types of modifiers, they are all + or -, with the + all counting toward active cost and the – not. So the modifier totals become +3 1/4, -8. This impacts the results:

Base Cost: 30

Active Cost: 30 x (1 + 3 1/4) = 127.5 = 127

Net Cost: 127 / (1 + 8) = 14

Mana Cost: n/a

END Cost: 127/5 = 25

Range: 127/10 = 12″ = 24m

Clearly, the spell would not be designed quite this way using the base Hero system, but this is good enough for example purposes.
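The base Hero System arithmetic in that second stat block can be reproduced with a short function. This is a sketch of the calculation as it appears above, rounding down at each step because that is what the worked numbers do; the function name is hypothetical and the official rounding rules may differ.

```python
import math
from fractions import Fraction


def hero_costs(base_cost: int, advantages, limitations) -> tuple[int, int, int]:
    """Return (active cost, net cost, END cost), rounding down at each step."""
    active = math.floor(base_cost * (1 + Fraction(advantages)))
    net = math.floor(Fraction(active) / (1 + Fraction(limitations)))
    end = active // 5
    return active, net, end


# Base 30, advantages totalling +3 1/4, limitations totalling -8.
print(hero_costs(30, Fraction(13, 4), 8))  # (127, 14, 25)
```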

Specifications:

1. Flash

2. Ad-hoc Spell.

3. 2d6

4. Skill Roll Required -1,

5. Incantation Required -1,

6. Gestures Required -1,

7. Linked -1

8. Can Use Normal Mana or Mana Battery + 1/4,

9. Extended Duration: 30 minutes per point of success +3,

10. Only Affects One Target /4

1 sec, Roll #1, Specification 1: 13/- -> 7, success

2 sec, Roll #2: Specification 2: 13/- -> 11, success

3 sec, Roll #3: Specification 3: 13 +2 modifier = 15/- -> 17, failure

GM applies extra time, x4 intervals = +2 = success

6 sec, Roll #4: Specification 4: 13/- +2 modifier = 15/- -> 7, success

7 sec, Roll #5: Specification 5: 13/- +2 modifier = 15/- -> 12, success

8 sec, Roll #6: Specification 6: 13/- +2 modifier = 15/- -> 9, success

9 sec, Roll #7: Specification 7: 13/- -4 modifier = 9/- -> 15, failure

The GM, having used extra time once, could do so again, but the modifier required to get from 9/- to 15/- is huge and probably beyond the limits of that capability. But he imposes a partial extra time adjustment of x4 time for this step anyway, because reducing the gap from +6 required to +4 required lets him be a little more generous with his partial solution offer.

The player can choose the GM’s offer of a partial success, “Link takes 2 rounds to establish each time”, or can choose a block/setback.

This offer is very nuanced. If it were 1 round each time, the offer would almost certainly be accepted, because it doesn’t compromise the spell’s function very much. If it were 3 rounds each time, the offer would almost certainly be rejected. 2 rounds is the sweet spot at which the character might be tempted if he needed the spell in a hurry – which he does, it’s an ad-hoc spell.

The GM warns the player that the magnitude of the failure means that the setback will be substantial, and gives him one last chance to change his mind, but the player feels that a few extra seconds is tolerable.

The GM returns the clock back to the start of the “Links to” Requirements (Specification 4), explaining that the simple method of making the spell restricted is being overridden by the link. The details beyond that don’t matter.

So that last entry now reads,

9 sec, Roll #7: Specification 7: 13/- -4 modifier = 9/- -> 15, failure

x4 Extra Time -> 11/- still failure

…and the process continues from there.

12 sec, Roll #8, Specification 4: 13/- +2 modifier = 15/- -> 12, success

The GM notes that a full turn has passed and lets everyone else act.

13 sec, Roll #9, Specification 5: 13/- +2 modifier = 15/- -> 11, success

14 sec, Roll #10, Specification 6: 13/- +2 modifier = 15/- -> 17, failure

x 4 Extra Time – 17/-, success

15 sec, Roll #11, Specification 7: 13/- -4 modifier = 9/- -> 9, success

The revised approach solves the problem, but could easily have failed again.

16 sec, Roll #12, Specification 8: 13/- +2 modifier = 15/- -> 15, success

17 sec, Roll #13, Specification 9: 13/- -4 modifier = 9/- -> 9, success

This is the second potential ‘choke point’ identified by the GM when considering the list of specifications. He really wanted to be able to ‘force’ the spell effects down to 30 or even 15 seconds per point of success, because 30 minutes of blindness is massive, in tactical terms.

He then realizes that the design fails to specify ‘success on [what]’. He can define it as ‘success on the skill roll’ (6 x 1/2 hr = approx 3 hrs of blindness, maximum) or ‘points of flash rolled in excess of defense’ (2d6 averages 2 x 3.5 = 7, less flash defense of 0 to 5, leaves 2 to 7 points, for a net 1-3.5 hours of blindness). He doesn’t know what the character intended when designing the spell parameters, and not seeking clarification on this point leaves him the latitude to decide. He chooses the latter option, but deliberately doesn’t tell the player – he will find out when he casts the spell. I’ve considered labeling this an “Ambiguity Tax” but “Ambiguity In, Ambiguity Out” probably comes closer.

Note, too, that the player hasn’t specified the skill, so that reverts to the system default of the most appropriate skill, “Magic Use, 13/-”. If the player wanted to employ something other than the system standard, he would have had to Specify that, and justify it to the GM.

18 sec, Roll #14, Specification 10: 13/- -2 modifier = 11/- -> 13, failure

The GM notes that the player hasn’t specified casting time, and therefore also expects that to default to the system standard of 1 round activation time. He could ‘solve’ this failure with additional time, but really wants to reduce the effectiveness of this spell a bit, so he takes advantage of the player’s assumption to add an 11th Specification, x2 casting time.

The player’s choice not to list this variable was not unreasonable; the GM’s use of the added Specification overriding the system standard is exactly what the player could have done originally, but he couldn’t have specified the base standard, it would have to incur a modifier. It’s even possible that the player deliberately left this open for the GM to exploit, confident that the GM wouldn’t be unfair.

This changes the failure into a success:

18 sec, Roll #14, Specification 10: 13/- -2 modifier +2 additional specification = 13/- -> 13, success

19 sec, Roll #15, Specification 11: 13/- -> 11 success.

The modified spell is now complete and ready to use. It has taken 19 seconds to craft, and the spell itself has changed slightly in the construction process thanks to the x2 Casting Time added at the end. So the ‘stat block’ describing the spell would have to be recalculated to accommodate the additional parameter.

The GM can now append special / side effects and a description of the spell when it is cast. He notes that there is not a ‘no attack roll’ parameter specified, so the character still has to make one when using the spell (system default) but he adds an additional element: if the attack roll fails, the spell affects the nearest character, with a preference for anyone between the target and the caster for tie-breaking purposes. Assuming that the target is being attacked by the other characters, that almost certainly means a team-mate. He describes the spell as a ball of light the size of a fist that streaks from caster to target and wraps around his eyes for the duration of the spell’s effects. This is not at all what the player was expecting; Hodur was a blind deity in the Norse Mythos, so he expected the effect to be one of simply denying the target the ability to see. But if he wanted ‘no visible effects’ he should have Specified that and incorporated the resulting modifier; he didn’t, and the system default is for a visible effect; the GM simply drew inspiration for the nature of that effect from the power, “Flash,” on which the spell is based.

Using the system to design a new magic spell for the D&D System

I know I said that there would be a second complete example, but I completely ran out of time after discussing the translation mechanics. Sorry!

This is equally straightforward, at least conceptually. Each spell has a stat block that describes most of its specifics; each one is the basis of a Specification. In each case, the GM has to determine whether the numbers are a ‘per level’ or absolute number. Some spells have additional numeric descriptors in the spell descriptions, so these should also be scoured.

On top of that, in some versions of the D&D system, Metamagics can be used to adjust these values, but no Metamagic can be incorporated directly into a base spell without GM approval. Such approval still doesn’t incorporate the metamagic, but it does simulate it and does require an additional Specification for each increase, for example “x2 Range”.

One-off vs Spellbook storage

The exception to that statement would be if the GM adopted some variant of the “ad-hoc” spellcasting concept. Ad-hoc spells always have to build their spell variations directly into the spell construction and that includes metamagics, because those metamagics can’t be tacked on after the fact – ad hoc spells are always cast ‘as is’.

In addition, I would consider the following as a House Rule within that new subsystem: Multiply each spell level by the number of spells within that spell level that the caster can memorize / use, daily, and divide by 3; the results are a ‘Spell Creation END pool’. This limits the ad-hoc elements of the spell to manageable levels. It should not be used outside of this system, it’s not sufficiently robustly-developed for that.
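As a rough illustration of that House Rule, the pool can be computed from a caster’s daily spell slots. The sketch assumes the level-times-slots products are summed across all spell levels before dividing by 3, and that fractions are dropped; neither is stated explicitly above, and the slot table is a made-up example rather than one taken from any edition.

```python
def spell_creation_end_pool(slots_per_level: dict[int, int]) -> int:
    """Sum (spell level x daily slots) over all levels, then divide by 3."""
    total = sum(level * slots for level, slots in slots_per_level.items())
    return total // 3  # assumption: round down


# Hypothetical caster: four 1st-level, three 2nd-level, two 3rd-level slots.
example_slots = {1: 4, 2: 3, 3: 2}
print(spell_creation_end_pool(example_slots))  # (4 + 6 + 6) // 3 = 5
```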

All that said, D&D does in fact, offer a form of one-off ad-hoc spell variants, permitting these to be captured in either potion or scroll form. Of these two, Potions are the better choice from the GM’s perspective, because they can’t then be transferred into a character’s spellbook for free (in terms of this system).

To offset the benefits of attempting to rort the system in this way, all scrolls generated using this system should have the property “Fragile” appended to their descriptions by the GM; this means that there is a 75-80% chance that any attempted transfer into a spellbook fails because the scroll self-destructs prematurely, requiring the whole creation process to be repeated. This applies ONLY to spells created using this process; the GM is free to set whatever rules he wants regarding “normal” scrolls and their fragility. And it only applies in situations where a character is trying to cheat the system.

For such repetition, I would normally grant a +1 modifier because it’s been done by the character before, but in this instance, forget it. The cosmic halo of Mana has shifted or changed character or something, the ‘weave’ has been stretched by the failure, or whatever. You don’t earn a GM’s goodwill by attempting to cheat the system.

On the other hand, creating a ‘permanent’ magic item (see below), with its attendant difficulties, is going about this honestly, and no such penalty or ill-will should result. But note that the penalties for creating a spell this way are already greater than those involved in crafting a permanent spell.

Magic Items

You can use this system for the crafting of a magic item. It is the GM’s option whether or not to use it for ‘standard’ items, but my recommendation would be not to. However, using this approach to get an estimate for the crafting time of a “variant item with no significant variations” is perfectly valid. The GM should also estimate the crafting cost of magic items using standard items as a guide.

Start with the name of the item. “Sword of,” “Armor of,” etc are your cues to the first type of specification, the Form.

There is a hierarchy to these things, providing a scale for partial successes like any other. That hierarchy is:

Consumables:

Potions (n x s / m / h)

Scrolls (h)

Wands (h / d)

Arrows / Other (d)

Permanent:

Miscellaneous Minor (d)

Daggers & Arrows (d)

Miscellaneous Medium (n x d)

Shields (w)

Rods & Staves (n x w)

Miscellaneous Greater (n x w)

Other Weapons (n x w)

Swords (n x w)

Armors (n x w / mo)

Minor Artifacts (n x mo / y)

Major Artifacts (n x y)

The higher up the scale you go, the greater the global penalty to rolls. I would set the zero point to be Miscellaneous Minor; Forms lower in the ranking get a +2 bonus per step, forms higher in the ranking get -1 per step.

Artifacts of any kind should get an additional global penalty.
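The Form modifier can be looked up mechanically from the hierarchy. The sketch below assumes the list above is a single ranking running from Potions (lowest) to Major Artifacts (highest), with Miscellaneous Minor as the zero point; the names `FORMS` and `form_modifier` are invented here, and the extra artifact penalty is left out because no value is given for it.

```python
FORMS = [
    "Potions", "Scrolls", "Wands", "Arrows / Other",        # consumables
    "Miscellaneous Minor", "Daggers & Arrows", "Miscellaneous Medium",
    "Shields", "Rods & Staves", "Miscellaneous Greater",
    "Other Weapons", "Swords", "Armors",
    "Minor Artifacts", "Major Artifacts",
]
ZERO_POINT = "Miscellaneous Minor"


def form_modifier(form: str) -> int:
    """+2 per step below the zero point, -1 per step above it."""
    steps = FORMS.index(form) - FORMS.index(ZERO_POINT)
    return -steps if steps > 0 else -2 * steps


for form in ("Potions", "Wands", "Miscellaneous Minor", "Swords", "Major Artifacts"):
    print(f"{form}: {form_modifier(form):+d}")
```

Under these assumptions Potions get +8, Wands +4, Swords -7, and Major Artifacts -10 before any additional artifact penalty.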

Note the codes next to the forms – these are the recommended intervals (s = seconds, m = minutes, h = hours, d = days, w = weeks, mo = months, y = years). If a recommended interval is preceded by an (n x ), it means that the intervals should be multiplied by n, which is an integer from 2 to 6. Where the magic item has a plus associated with it, n should normally be ‘plus’+1, but I would make exceptions for consumable items falling into this category.

But you don’t know what the ‘plus’ is yet?

That’s the next Specification. Each magical ‘plus’ gives a -1 modifier to the roll for this Specification.

Each ‘plus’ permits (but doesn’t require) the incorporation of one spell-like effect. If this effect is already extant within the rules, a single Specification is needed for each. The GM should use modifiers to adjust for more powerful effects at his discretion.

The first Power incorporated gets a +5 modifier, the second a +4, and so on. These are not fully universal, but they apply to everything related to that Power.

What’s more, if the designer voluntarily gives up some of these Power Slots, he gets a bonus to the rest – +2 for each slot ‘locked out’. This enables larger-plus equipment that is conceptually focused to have bigger abilities than one that is all over the shop.
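The trade-off between many powers and a few focused ones can be tabulated. This sketch assumes an item has one Power Slot per ‘plus’, that powers are filled in order (+5, +4, and so on), and that every locked-out slot adds +2 to each power that remains; the function name is hypothetical.

```python
def power_slot_modifiers(plus: int, powers_used: int) -> list[int]:
    """Modifier for each incorporated Power, in order of incorporation."""
    if not 0 <= powers_used <= plus:
        raise ValueError("powers_used must be between 0 and the item's plus")
    locked_out = plus - powers_used
    return [5 - i + 2 * locked_out for i in range(powers_used)]


print(power_slot_modifiers(3, 3))  # [5, 4, 3]  all three slots used
print(power_slot_modifiers(3, 2))  # [7, 6]     one slot locked out
print(power_slot_modifiers(3, 1))  # [9]        a single focused power
```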

If the effect is not normal, but is described by a spell that the character knows, it requires two Specifications: Spell-like effect is one, and the spell name and Character Level it is set to is the other. The higher the ‘effective’ character level, the worse the modifier.

If the effect is a customized version of the spell, it should be listed first in its original form by name (one Specification), followed by each of the modifications.

If the effect is a completely new spell, even one derived from a Reference Spell, the whole spell design process has to be incorporated. That means that you can save a lot of work if you create your new spells in permanent form, and only then commence enchanting the item.

Most spell-like effects are then followed by either ‘permanent’, ‘at will’ or ‘X times a day’, describing how often the effect can be used. This is a separate Specification, but there’s such a big gap between even “5 times a day” and the first two that some special handling is needed.

X times per day is handled as a single Specification, with a penalty increasing as X increases, the amount of which is left to the GM to determine. But there are already a lot of penalties, so I recommend -X, which is therefore a peak modifier of -5.

‘Permanent’ and ‘At Will’ also have a -5 modifier, but they confer an additional -1 modifier on all other Specifications related to this specific ability. That gets fairly significant when there are a lot of rolls, as with incorporating a custom spell.

For wands and their equivalents, these have a different Specification at this point; every spell in them is the same, but the number of copies of a given spell that can be included per ‘plus-equivalent’ is 2, 4, 6, 8, or 10. The lowest value gives a +6 modifier, then +4, +2, +0, and -2.
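The frequency-of-use modifiers from the last three paragraphs fit in a small lookup. This is a sketch using the recommended values only (a GM may set the per-day penalty differently); the names are invented for illustration, and the second value returned is the knock-on modifier applied to every other Specification for the same ability.

```python
# Copies of the spell per 'plus-equivalent' in a wand, and the resulting modifier.
WAND_MODIFIERS = {2: 6, 4: 4, 6: 2, 8: 0, 10: -2}


def frequency_modifier(frequency) -> tuple[int, int]:
    """Return (modifier to this Specification, modifier to the ability's others)."""
    if frequency in ("permanent", "at will"):
        return -5, -1
    if isinstance(frequency, int) and 1 <= frequency <= 5:
        return -frequency, 0  # recommended: -X for X times per day
    raise ValueError("expected 'permanent', 'at will', or 1-5 uses per day")


print(frequency_modifier(3))          # (-3, 0)
print(frequency_modifier("at will"))  # (-5, -1)
print(WAND_MODIFIERS[6])              # 2
```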

Potions can usually only contain 1 ‘charge’ by default, a second and third Specification have to be included for a second and then a third ‘charge’. But all of these have the same modifier. In general, this makes potions more compatible with experimental spell design in the form of one-off spells.

After the first power, you have the second, and so on.

There are a lot of negative modifiers, so expect low net rolls and lots of failures. Almost every non-standard magic item is compromised in some way. I strongly recommend the optional rule that gives bonuses for repeated efforts.

Exotic Materials

Some materials are more easily enchanted than others. These materials give a blanket modifier to certain types of uses. I want to discuss two of them, and then mention a couple of others in more general form.

Adamantine is the ultimate recipient for Dwarven magics in the form of weapons and armor. It grants a blanket +8 to all rolls subsequent to this Specification. The Specification “Adamantium” (or “Adamantine”) does not receive this bonus, because it is such a difficult material to work with.

Mithril is the ultimate receptacle for Elven magics in any non-martial form, giving +6 to all rolls subsequent to its Specification. It’s not quite as difficult as Adamantine to work with, so it also gives a +2 to its own Specification.

Other materials should be assessed by their purity. Steel that is forged and folded multiple times becomes more pure, and so do most other materials – enough to make this a general rule. Some other materials can also be considered effectively ‘pure’, like gold, silver, platinum, ebony, ivory, gemstones, etc. The most pure give a +5 modifier to the first 3 specifications in each power slot, then +4 to the first 3, +3 to the first 4, +2 to the first 4, and +1 to the first 5.

Herbs and woods are the weakest of the lot. They give +1 to the first 2.

Back to crafting a unique spell!

Wow, that side-trip into magic item design was a lot more extensive in the end than I originally had planned!

Reference Spell & Modifier Levels

Each spell should also list a ‘reference spell’ that is used to guide the GM in assessing the Specifications. This doesn’t have to be a spell that the character has access to, and it’s more powerful within the ad-hoc system if the character doesn’t; it serves purely to set a baseline for the GM evaluation of differences relative to the chosen Specifications.

Each numeric Specification is a point on a continuum, and can be moved up or down by discrete steps (being careful to avoid using the term ‘intervals’ because that already has a specific meaning in this set of rules). It’s up to the GM how large these steps are, but they should make most desirable values a small integer number of steps away.

Range might be “20′ per level” based on the Reference Spell; steps down from that (making the spell easier to craft) might be “15′ per level”, “10′ per level”, “5′ per level”, “1′ per level”, and “touch” – with “touch” being a floor to that particular Specification. Similarly, you can’t have a fraction of “Instantaneous,” so that’s a floor on Casting Time. Each of these steps down would add +2 to the roll for that specific Specification (but note the optional rule below).

Increasing the Range Specification from a Reference level of “20′ per level” would yield values of “25′ per level,” “30′ per level,” and so on, and each of these steps up gives a -2 modifier to the skill roll for incorporating that Specification into the design.

This approach is especially advantageous because it builds a scale for the formulation of “partial solutions” directly into the system. However, if necessary, the GM can use different step values for partial solutions.
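The step arithmetic for the Range example can be sketched in a few lines of Python. The ladder list and the function name are hypothetical, and the sketch assumes that every step above the Reference costs -2 in the same way that every step below it earns +2.

```python
# Range values in feet per level, easiest first; "touch" is the floor.
RANGE_LADDER = ["touch", 1, 5, 10, 15, 20, 25, 30]


def step_modifier(ladder, reference, target, per_step=2):
    """Roll modifier for moving a Specification away from the Reference value.

    Steps down the ladder make the roll easier (+), steps up make it harder (-).
    """
    steps = ladder.index(target) - ladder.index(reference)
    return -per_step * steps
```

With a Reference of 20′ per level, `step_modifier(RANGE_LADDER, 20, 10)` returns +4 (two steps down) and `step_modifier(RANGE_LADDER, 20, 30)` returns -4 (two steps up). Listing the ladder explicitly lets the GM include uneven steps such as “1′ per level” and “touch”.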

Let’s say the player was aiming for a range of 30′ per level against a reference of 20′ per level. He flubs the roll by 3. Using the standard steps of ±5′ per level, there isn’t a whole lot of room to maneuver; there’s just one intermediate step. If the GM chooses each unit of failure to represent 2′ per level difference, that failure by 3 can be interpreted as being 6′ per level away from what the player was aiming for, or “24′ per level”. The GM can then offer this as an option to the character.
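The same partial-solution arithmetic, as a tiny Python sketch. The function name and parameters are illustrative only, and it covers just the case in the example, where the player was aiming above the Reference and falls short.

```python
def partial_offer(target, failed_by, per_point):
    """Value the GM might offer after a failed roll.

    target    -- what the player was aiming for (e.g. 30 feet per level)
    failed_by -- how many points the roll missed by
    per_point -- how much each point of failure costs (GM's choice)
    """
    return target - failed_by * per_point
```

`partial_offer(30, 3, 2)` gives 24, the “24′ per level” from the example. The sketch does not clamp the result, so the GM still has to decide what happens if a bad failure would drop the value below the Reference.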

Optional Rule (all systems): Transfer Of Bonuses

I noted the GM’s determination of “Choke Points” in the first example. There’s nothing stopping the player from making the same assessment and ‘banking’ modifiers from what he regards as ‘easy steps’ later in the process to boost their chances of success on the earlier steps.

Sometimes, the player’s assessment will be correct, and this can cushion the process in their favor. And sometimes, the GM will have thought of a reason why a different step is a / the Choke Point in the process, and the player will have made a hard roll worse. The better the player understands the game world and its internal physics, and the GM and his way of thinking, the more accurately he will make this assessment; and when those understandings are more limited, this can provide direct access to that knowledge.

Modifiers can only be transferred from Specifications yet to be rolled. Once the roll is made, only the specified modes of variation are permitted; player modifiers of this type become fixed, as does any matching penalty.

Players can decide that they are about to roll an easily-successful step, and take a penalty on it to make a later, more difficult, step, easier to complete. That’s fine. But they can’t look at a bonus after the roll and decide that the bonus was unused; they aren’t allowed to bank it for later.

This makes each Specification and its roll a more dynamic process, and boosts player interaction with the system and its mechanics – not a bad thing. But it does reduce the GM’s ability to modify the outcomes, either in the character’s favor or against it, and that can be a bad consequence, especially from the GM’s perspective.

My own thoughts are balanced 50-50 on the question of whether or not to implement this; I can see both benefits and liabilities. That’s why it’s an optional rule. I would probably give the system a couple of opportunities to establish itself without the optional rule, and then introduce it on a trial basis. Or run a couple of “solo playtests” to see how big a difference it made to the ‘look and feel’ of the system mechanics. Or both. But that’s me; every GM is different and has different underlying philosophies to their GMing style, so you do you.

If there’s no one right answer, there are no completely wrong answers, either.
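If you do trial the Transfer Of Bonuses rule, the bookkeeping is simple enough to sketch in Python. The class and function names are hypothetical; the only rule being modeled is that modifiers can move between Specifications that have not yet been rolled, and are locked in once the dice hit the table.

```python
from dataclasses import dataclass


@dataclass
class Specification:
    name: str
    modifier: int = 0
    rolled: bool = False


def transfer(source, dest, amount):
    """Take `amount` off one unrolled Specification and add it to another."""
    if source.rolled or dest.rolled:
        raise ValueError("modifiers are fixed once a Specification has been rolled")
    source.modifier -= amount
    dest.modifier += amount
```

A player who thinks Range is an easy step and Duration is the Choke Point might call `transfer(range_spec, duration_spec, 2)` before either roll. Trying the same thing after Range has been rolled raises an error, which is the “no banking unused bonuses” restriction.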

Spell Variants

Oftentimes, the goal isn’t an entirely new spell, it’s a variation on an existing one, which therefore becomes the Reference Spell. If the character already knows or has access to the Reference Spell, this confers a +5 advantage when crafting an ad-hoc variant and a +2 advantage when crafting a permanent addition to a Spellbook or Grimoire.

Intelligent Items

To craft an intelligent, sentient item is HARD.

They start with a seed of intelligence taken from the caster. This can be as large as the caster wants, so long as it is 1 point or better, but his own intelligence goes down by the amount of the seed, so they tend to favor fairly small ones. This point cannot be recovered by any means so long as the crafting is underway, and the character suffers from all the attendant consequences of his lower INT.

Each Specification of that seed doubles the resulting INT of the item, or doubles its rate of maturity. The INT growth normally takes 32 years to mature, so cutting that down to 6-12 months is highly desirable; most mages would prefer to take it further, but each such doubling also adds a -1 modifier to the subsequent Specifications of this type.

Int Seed 1, doubles to 2, doubles again to 4, doubles again to 8, doubles again to 16, matures in 32, halves to 16, halves to 8, halves to 4, halves to 2, halves to 1 – that’s a total of -9 – four doublings and five halvings.

Int Seed 2, doubles to 4, doubles again to 8, matures in 32, halves to 16, halves to 8, halves to 4, halves to 2, halves to 1, halves to 6 months – that’s a total of -8.

Int Seed 3, doubles to 6, doubles again to 12, doubles again to 24, matures in 32, halves to 16, halves to 8, halves to 4, halves to 2, halves to 1, halves to 6 months, halves to 3 months, halves to 1 1/2 months, halves to 3/4 months (about 22 days) – that’s a total of -12.
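The three tallies above follow the same pattern, so here is a short Python sketch that reproduces them. The function name and return layout are illustrative assumptions; the total simply counts one -1 per doubling or halving, as the examples do.

```python
def seed_plan(seed, int_doublings, maturity_halvings, base_years=32):
    """Return (final INT, years to mature, total modifier) for an INT seed."""
    final_int = seed * 2 ** int_doublings
    years = base_years / 2 ** maturity_halvings
    total_modifier = -(int_doublings + maturity_halvings)
    return final_int, years, total_modifier
```

`seed_plan(1, 4, 5)` returns `(16, 1.0, -9)`, `seed_plan(2, 2, 6)` returns `(8, 0.5, -8)`, and `seed_plan(3, 3, 9)` returns `(24, 0.0625, -12)`, where 0.0625 years is the 3/4 of a month from the third example.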

The creator gets to specify one personality trait; the GM can add 3 more, or one more and add some words before or after the player-specified trait to modify its meaning. These must be specified secretly and in writing; only the GM is permitted to know everything. The other players at the table then supply (secretly and in writing) a single word each, which the GM has to arrange into 1-3 additional personality traits. To do so, he can transform any noun into adjective or verb form, or substitute a quality especially associated with the word, or attach any emotional state to a noun. Any unused words get discarded. The personality emerges as the item matures; only at the end of that process does the GM reveal the substance of the personality summary.

EG: The creator supplies the one word, “Loyal”. The GM adds “to himself” and, after contemplating “puppy-dog eager” as a second personality trait, adds the tried-and-true “Manipulative” instead. Player #2 offers “Lemon”, #3 provides “Eccentric”, #4 suggests “Affectionate”, and #5 gives “Gleams”. The GM transforms these into “Affectionate About Lemons” and “Gleams Eccentrically” (Eccentric to adverb).

So this is a magic item that is self-centered to the point of potential disloyalty, that likes to manipulate others to protect itself, that loves everything about lemons from their color to their scent to being bathed in Lemon Juice, and that somehow twists the light striking its surface to reflect that light in unusual and unexpected directions, a peculiar expression of vanity.

The GM could also have transformed “Lemon” into “sour”, and used it as a standalone personality trait. But he decided not to be that mean.

The item has all its powers while maturing, but is not able to apply as much intelligence to such use. If employed in this time, it might well make mistakes – serious ones.

If the final integration roll fails, does the caster get their INT seed back? No, because failure isn’t necessarily the end of the story. The GM can apply extra time modifiers that turn the failure into an eventual success. He can impose a Block or a Setback, forcing the process to retrace some of its steps, or navigate an additional Specification; either of these choices keeps the chance of success alive. Or the character can wait an interval and just try again. And again. And again.

It’s perfectly legitimate for the GM to rule that the final integration can only take place under certain conditions – “inside a magic circle on the night of a full moon” for example. However, he should ensure that the character at least hears hints as to such requirements long before he actually reaches this point in the casting. If he doesn’t follow up on this information, that’s on him. Me, I would use this as the trigger to a whole adventure – the character has to steal into the tower of a bunch of evil wizards and ransack their library for the information he needs, that sort of thing. And, should the character be discovered by the Wizards (he will be), he’ll need the other PCs to help him escape!

Selected other examples

There are three other examples of using this system that I want to highlight because they show off some aspect of what can be done with these mechanics. Like the spells (and magic item) example above, these will be more ‘how-to’s’ than full examples.

Using this system to design a better television set for mass production

To design and create a better TV set (or any other industrial gadget), you first need to define its fundamental properties. I picked this as an example because I think every reader will know what a TV set is and what it does. And for that reason, I think we can define a TV set as, well, a TV set. So that’s the first Specification: “Prototype Television Set”.

What are the fundamental characteristics of a TV set? What do you look at when considering a purchase?

Price

The first item is retail price, but that gets a little complicated because the price is relative to the value of the currency. In general, it takes the form of a range from “0.5 x X” to “1.5 x X”, where X is the median price range. But you can’t define a median price range unless you’re comparing like with like. This is the Target Retail Price. It guides later design choices in ways that are too complicated to map out in a general form, and may or may not be achieved at the end of the process.
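The price band itself is a one-line calculation. As a minimal Python sketch (the function names are hypothetical, and the figure of 2000 used below is only an illustrative value for X):

```python
def price_band(x):
    """Retail price range for a median price X: from 0.5 x X to 1.5 x X."""
    return 0.5 * x, 1.5 * x


def in_band(price, x):
    """True if a Target Retail Price falls inside the band around X."""
    low, high = price_band(x)
    return low <= price <= high
```

For X = 2000, `price_band(2000)` gives a range of 1000 to 3000, so a target of 2500 sits inside the band while a target of 3500 does not.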

Screen Size & Function

Which brings us to the second item: Screen Size. This is defined by a basic shape (square, letterbox, cinema) and the size of the screen from corner to corner. In those countries that have switched to the metric system, screens are traditionally still measured in inches, long after every other measurement has been converted – but sooner or later, these measurements will also make the switch.

10, 20, 30, 40, 50 – those are the sizes in cm of small, portable units; divide by 2.5 to get approximate inches. Choosing one of these adds the third item to the list, “portable”, which implies a weight range.

60, 80, 100, 120, 150 – these are the sizes of smaller domestic units in cm. Again, divide by 2.5 to get approximate inches. Third Specification is “domestic, small”.

180, 220, 260, 300, 400 – those are medium modern domestic units in cm. Divide by 2.5 for approximate inches. Third Specification is “domestic, medium”.

500, 800, 1000, 1200, 1500 – those are large domestic units in cm. Third specification: “Domestic, large”.

1600, 2000, 2500, 3000, 4000 – these are the “Home Cinema” sizes in cm, giving the third specification accordingly. Only the first two are common, but the next two are around – my nephew has a set that’s somewhere in the 3000 range, it takes up an entire wall.

5K, 10K, 20K, 40K, 60K, Special – those sizes are starting to reach the point where people have trouble grasping the size, so let’s switch the units up – meters and feet or yards: 50, 100, 200, 400, 600 meters, Special, multiply by 3.3 to get feet or 1.1 to get yards. The latter conversion is so simple it can be done in your head, so let’s use it: 55, 110, 220, 440, 660 yards, Special. 660 yards is around 1/3 of a mile. To the best of my knowledge, none of these sizes are in actual production – it would be more common to have a bank of smaller sets.

Note that anything in this final size group adds a penalty to the Reliability testing later in the process. These should be -10%, -20%, -30%, -40%, -60%, and -80%, respectively, or their game system equivalents.

These are the “fantasy” set-sizes, and I don’t think we need to go much further. Admittedly, my Dr Who campaign recently featured a spacecraft whose cylindrical body was a TV “set” more than a km in length, but its display was segmented into different levels within the spacecraft.

I’ve included the size category “Special” for such purposes.
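Pulling the size lists together, a lookup along these lines would let a GM go from a chosen size to its category and any Reliability penalty. This is only a sketch: the dictionary and function names are hypothetical, sizes are the listed values in cm, and anything not on the lists is rejected rather than guessed at.

```python
SIZE_CATEGORIES = {
    "portable": (10, 20, 30, 40, 50),
    "domestic, small": (60, 80, 100, 120, 150),
    "domestic, medium": (180, 220, 260, 300, 400),
    "domestic, large": (500, 800, 1000, 1200, 1500),
    "home cinema": (1600, 2000, 2500, 3000, 4000),
    "fantasy": (5000, 10000, 20000, 40000, 60000, "Special"),
}

# Reliability penalties (in percent) apply only to the final size group.
RELIABILITY_PENALTY = dict(
    zip(SIZE_CATEGORIES["fantasy"], (-10, -20, -30, -40, -60, -80))
)


def categorize(size):
    """Return the size category for one of the listed sizes."""
    for name, sizes in SIZE_CATEGORIES.items():
        if size in sizes:
            return name
    raise ValueError(f"{size!r} is not one of the listed sizes")


def reliability_penalty(size):
    """Penalty to Reliability testing, or 0 for sizes outside the final group."""
    return RELIABILITY_PENALTY.get(size, 0)
```

For example, `categorize(3000)` returns `"home cinema"`, `reliability_penalty(20000)` returns -30, and `reliability_penalty("Special")` returns -80.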

Once you know the size category and associated X-value you’re talking about, you can go back to the actual size and fill that in, and that will inform the typical price point for that size of set.

EG:

X=2000

Size = 1000-3000

Price: $2500 AUD

Resolution

Each of these carries an expectation of display resolution. In the old TV world of cathode-ray tube displays, this was measured vertically in “lines”; in the modern, wide-screen world, it is measured in pixels across the top.

Old-style sets: square display area (more or less, they were actually 4:3 ratio). Later versions offered a “letterbox” format for showing widescreen images. You can still find sets in the smallest screen sizes that preserve this arrangement, if you search hard enough, but they are increasingly rare. 60, 80, 100, or 120 lines were on offer in portable, domestic small, and the lower sizes of domestic medium – which were considered large sets back in the day – but the real standards were 480 or 576 lines